AI Coding Assistants Compared: Which One Actually Speeds Devs Up?

A benchmark-based comparison of leading AI coding assistants to see which tools truly improve developer productivity, code quality, and shipping speed.

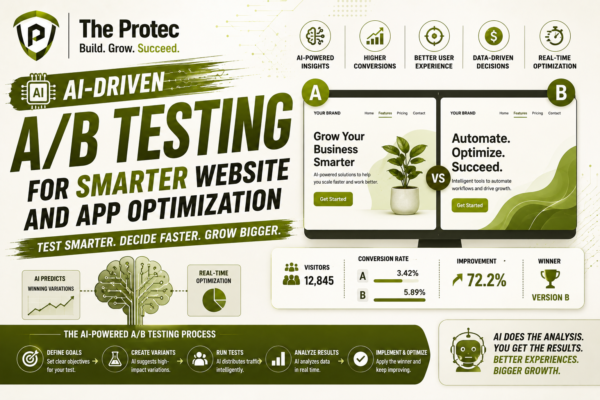

AI-driven A/B testing is changing how teams optimize websites and apps by speeding up experimentation, improving targeting, and turning data into faster conversion gains.

Learn token economics: how AI token pricing and API rate limits relate, and how developers can keep LLM costs under control to avoid unexpected expenses.

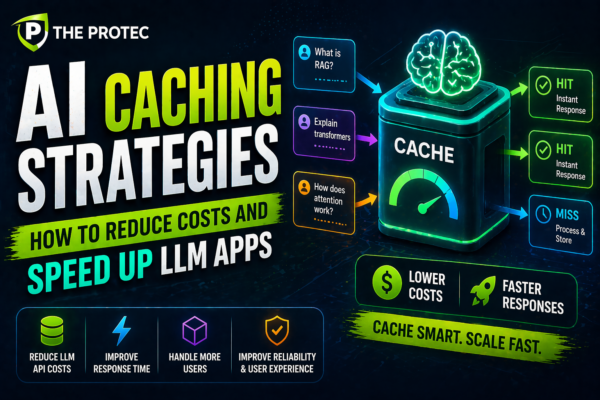

Explore how AI caching strategies such as token caching and semantic caching optimize large language model applications, cutting costs and improving response times.