Contents

- 1 Introduction

- 2 Understanding the Foundations: Prompt Engineering vs. Context Engineering

- 3 The AI Context Window: The Backbone of Context Engineering

- 4 Why Structuring Context Outperforms Prompt Writing

- 5 Applications of Context Engineering in AI Optimization

- 6 Tools and Techniques for Effective Context Engineering

- 7 Challenges and Future Directions

- 8 Frequently Asked Questions

- 9 Conclusion

Introduction

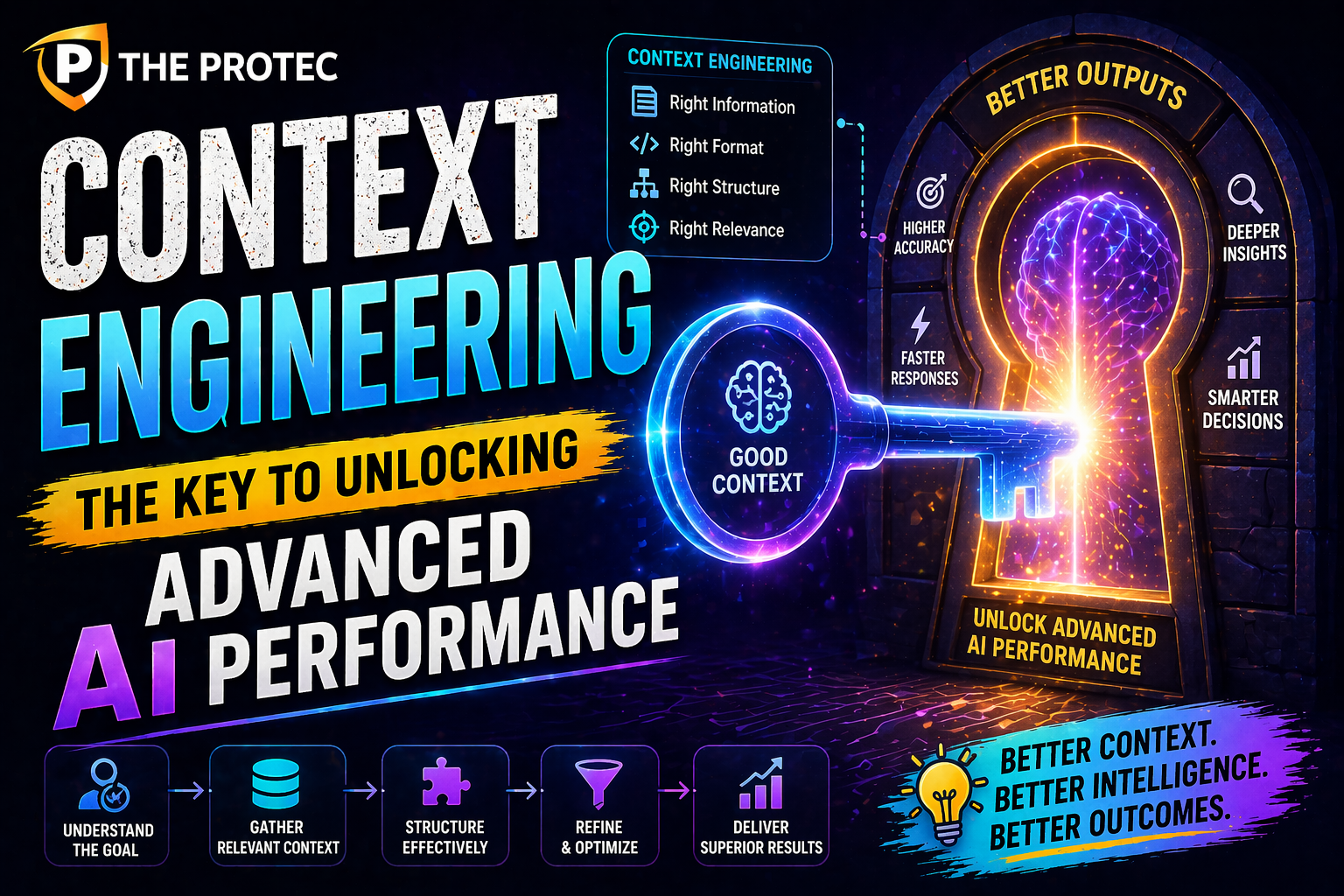

As artificial intelligence continues to evolve, so do the techniques that maximize its potential. For the past few years, prompt engineering has been the go-to skill for developers and AI practitioners to coax desired outputs from large language models (LLMs). However, the landscape is shifting rapidly. Enter context engineering: an approach that focuses on structuring the AI’s context window for greater control and improved results. This article unpacks why context engineering is becoming indispensable for getting the best from modern AI systems and why structuring context can accomplish more than prompt wording alone.

Understanding the Foundations: Prompt Engineering vs. Context Engineering

What is Prompt Engineering?

Prompt engineering involves crafting specific instructions or questions to guide an AI model’s response. It traditionally revolves around designing the exact wording, phrasing, and framing of the input prompt to elicit the preferred output. Early successes with models like GPT-3 demonstrated how nuanced prompts could significantly influence AI performance.

Why Context Engineering Emerges as the Next Evolution

While prompt engineering operates predominantly on the immediate prompt, context engineering focuses on the entire context window: the complete set of input data a model processes at once. As newer models expand context windows from a few thousand tokens to tens of thousands or more, making good use of this larger input space becomes critical.

Context engineering involves strategically structuring, organizing, and optimizing the entire input (whether documents, code, facts, or user histories) so that it informs the AI model as a whole. Instead of pushing and pulling through prompt wording alone, it configures how information is presented to maximize comprehension and output accuracy.
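
To make the idea concrete, here is a minimal sketch of assembling a context from labeled sections rather than one free-form prompt. The names `ContextSection` and `build_context`, and the bracketed section labels, are illustrative assumptions and not part of any particular library or model API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ContextSection:
    label: str    # hypothetical section name, e.g. "documents" or "user_history"
    content: str  # raw text that belongs to that section


def build_context(instructions: str, sections: list[ContextSection], question: str) -> str:
    """Join instructions, labeled sections, and the question into one input string."""
    parts = [f"[instructions]\n{instructions.strip()}"]
    for section in sections:
        # Skip empty sections so they do not take up space in the window.
        if section.content.strip():
            parts.append(f"[{section.label}]\n{section.content.strip()}")
    # The question goes last, after all supporting material.
    parts.append(f"[question]\n{question.strip()}")
    return "\n\n".join(parts)


if __name__ == "__main__":
    context = build_context(
        "Answer using only the documents provided.",
        [ContextSection("documents", "Policy A allows refunds within 30 days.")],
        "Can I get a refund?",
    )
    print(context)
```

The point of the sketch is that each kind of information gets its own clearly marked place, so the structure stays stable even when the wording of the question changes.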

The AI Context Window: The Backbone of Context Engineering

The AI context window refers to the maximum number of tokens an LLM can consider when generating a response. Modern large language models, such as GPT-4 and its successors, support context windows ranging from several thousand to hundreds of thousands of tokens, enabling them to process extensive data in one go.

Why Size and Structure Matter

- Expanded capacity: A larger context window allows more background, prior conversation, or reference material to be included, enhancing response relevance.

- Efficient memory: Key knowledge placed in the context window is directly available to the model, so it doesn’t have to rely solely on what it absorbed during training.

- Reduced hallucination: A well-structured context helps prevent AI from fabricating information by grounding answers in provided data.

However, simply increasing token limits isn’t enough. Strategic organization is essential. Context engineering addresses how to assemble this window (what to include, omit, or prioritize) to unlock the model’s full capabilities.
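
One simple way to think about including, omitting, and prioritizing is as a budgeting problem. The sketch below is an assumption-laden illustration: it estimates tokens by counting whitespace-separated words (a real tokenizer will give different counts), and the function names `estimate_tokens` and `select_within_budget` are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    # Rough stand-in for a tokenizer: counts whitespace-separated words.
    return len(text.split())


def select_within_budget(items: list[tuple[int, str]], budget: int) -> list[str]:
    """Pick texts greedily by priority until the token budget is used up.

    items: (priority, text) pairs, where a higher priority is more important.
    Returns the chosen texts in their original order.
    """
    # Visit candidates from highest to lowest priority, remembering positions.
    ranked = sorted(enumerate(items), key=lambda pair: -pair[1][0])
    chosen = set()
    used = 0
    for index, (_priority, text) in ranked:
        cost = estimate_tokens(text)
        if used + cost <= budget:
            chosen.add(index)
            used += cost
    return [text for index, (_priority, text) in enumerate(items) if index in chosen]


if __name__ == "__main__":
    candidates = [
        (1, "Background note that is nice to have"),
        (3, "Key policy text"),
        (2, "Recent user message history"),
    ]
    print(select_within_budget(candidates, budget=7))
```

A greedy pass like this is not optimal in every case, but it shows the trade-off: lower-priority material is the first to be dropped when the window is tight.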

Why Structuring Context Outperforms Prompt Writing

The Limits of Prompt Engineering in Modern AI

Prompt engineering excels when AI inputs are short and focused. But as real-world applications demand complex multi-turn conversations or the integration of large datasets, prompt engineering alone often hits its ceiling:

- Token constraints: Short prompts limit how much information can guide the AI.

- Fragile phrasing: Slight wording variations can cause drastic output changes, making prompt design unpredictable.

- Lack of memory: Prompts don’t inherently manage extended context or evolving user history.

How Context Engineering Steps In

Context engineering transforms the AI interaction by embedding the relevant data, facts, and context into a cohesive structure inside the context window. This approach harnesses:

- Hierarchical information layering: Organizing inputs by importance or logical flow improves model comprehension.

- Dynamic context updates: Continuously refreshing and reordering context data as conversations or tasks evolve enhances consistency.

- Contextual references and indexing: Labeling and linking data points within the window makes them easier to recall and cross-reference.

This sophisticated treatment improves the AI’s understanding and reduces dependency on prompt wording precision alone.
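
The three ideas above can be sketched together in a small class. This is only an illustration under stated assumptions: `RollingContext`, its method names, and the `# Rules` / `# Facts` / `# Recent conversation` layout are all hypothetical choices, not an established format.

```python
from collections import deque


class RollingContext:
    """Keeps fixed rules, indexed facts, and a bounded window of recent turns."""

    def __init__(self, system_rules: str, max_turns: int = 4):
        self.system_rules = system_rules
        self.facts = {}                       # reference id -> fact text
        self.turns = deque(maxlen=max_turns)  # oldest turns fall off automatically

    def add_fact(self, ref_id: str, text: str) -> None:
        # Indexing: each fact gets an id that later text can refer to.
        self.facts[ref_id] = text

    def add_turn(self, speaker: str, text: str) -> None:
        # Dynamic update: the conversation layer changes on every turn.
        self.turns.append((speaker, text))

    def render(self) -> str:
        # Layering: stable rules first, then facts, then the latest dialogue.
        lines = ["# Rules", self.system_rules, "", "# Facts"]
        lines += [f"[{ref_id}] {text}" for ref_id, text in self.facts.items()]
        lines += ["", "# Recent conversation"]
        lines += [f"{speaker}: {text}" for speaker, text in self.turns]
        return "\n".join(lines)


if __name__ == "__main__":
    context = RollingContext("Cite facts by their reference id.", max_turns=2)
    context.add_fact("F1", "Refund window is 30 days.")
    context.add_turn("user", "Can I get a refund?")
    print(context.render())
```

Calling `render()` before each model request rebuilds the window from the current state, so the ordering stays consistent while the contents evolve.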

Applications of Context Engineering in AI Optimization

Enhanced Performance in Complex Tasks

Context engineering is particularly valuable in scenarios requiring multi-step reasoning, deep knowledge integration, or sustained dialogue. Examples include:

- Legal and financial analysis: Embedding laws, regulations, and case histories as structured context rather than just prompt text improves accuracy.

- Healthcare diagnostics: Integrating patient history, medical data, and research abstracts coherently supports better AI-assisted decisions.

- Code generation and debugging: Supplying the relevant source files and documentation as context reduces errors that come from the model missing definitions or project conventions.

Optimizing LLMs with Context Engineering

Beyond industry use cases, context engineering significantly contributes to the broader field of LLM optimization. By maximizing the quality and relevance of the input context, organizations can:

- Reduce inference time and compute by limiting unnecessary or redundant data in the window.

- Improve model interpretability by clarifying input-output relationships.

- Enhance fine-tuning strategies by layering curated context during training phases.
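
As a small illustration of the first point, the sketch below drops passages that repeat earlier ones before they reach the context window. It only catches duplicates that differ in letter case or spacing; the function name `deduplicate` is a hypothetical choice, and detecting paraphrased duplicates would need a different technique.

```python
def deduplicate(passages: list[str]) -> list[str]:
    """Return passages with empty entries and case/whitespace duplicates removed."""
    seen = set()
    unique = []
    for passage in passages:
        # Normalize case and collapse whitespace so trivial variants compare equal.
        key = " ".join(passage.lower().split())
        if key and key not in seen:
            seen.add(key)
            unique.append(passage)  # keep the first spelling that was seen
    return unique


if __name__ == "__main__":
    print(deduplicate(["Refunds take 30 days.", "refunds  take 30 days.", "Shipping is free."]))
```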

Tools and Techniques for Effective Context Engineering

Contextual Embeddings and Retrieval Systems

Recent advancements in vector embeddings and retrieval-augmented generation (RAG) make it possible to pull external knowledge into the context window dynamically. With this technique, the AI can draw on a large body of information while only the passages relevant to the current request occupy the window.
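
The retrieval step can be sketched in a few lines. To stay self-contained, this example swaps learned vector embeddings for plain word-count vectors compared by cosine similarity; that substitution is an assumption made for illustration, and the function names `bag_of_words`, `cosine`, and `retrieve` are hypothetical. A production system would typically use an embedding model and a vector index instead.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    # Stand-in for an embedding: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query, best match first."""
    query_vector = bag_of_words(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vector, bag_of_words(doc)),
        reverse=True,
    )
    return ranked[:k]


if __name__ == "__main__":
    docs = [
        "Refunds are issued within 30 days of purchase.",
        "Our office is closed on public holidays.",
        "Shipping takes five business days.",
    ]
    print(retrieve("How many days do refunds take?", docs, k=1))
```

Only the retrieved passages would then be placed in the context window, alongside the user's question.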

Chunking and Summarization

To fit large datasets into limited context windows, content is often intelligently chunked and summarized, preserving core information while keeping input size manageable. This is critical in maintaining throughput and accuracy.
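
A minimal sketch of the chunking half is shown below. It splits on whitespace and measures size in words, which is a simplifying assumption (real pipelines often chunk by tokens, sentences, or document structure). The function name `chunk_words` and its default sizes are hypothetical. The summarization half would normally involve a model call and is left out here.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 20) -> list[str]:
    """Split text into chunks of up to chunk_size words, sharing overlap words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a chunk has reached the end, to avoid a redundant tail chunk.
        if start + chunk_size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_words(sample, chunk_size=4, overlap=1):
        print(chunk)
```

The overlap means a sentence that straddles a boundary still appears whole in at least one chunk more often than it would with hard cuts, at the cost of some repeated words.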

Automated Context Management Platforms

Emerging software platforms now help orchestrate context engineering workflows, applying AI-driven heuristics to structure and curate input context automatically based on user goals.

Challenges and Future Directions

Despite its transformative potential, context engineering faces hurdles such as:

- Context window limits: Even expanded windows are finite, requiring smart trade-offs.

- Data privacy and security: Systems that place sensitive data in the context window must comply with applicable regulations.

- Tooling maturity: The field needs better standardized tools and best practices for wide adoption.
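
On the privacy point, one common precaution is to mask obvious identifiers before text enters the context window. The sketch below handles only email addresses with a simple regular expression; the pattern and the `[EMAIL]` placeholder are illustrative assumptions, and masking of this kind does not by itself make a system compliant with any regulation.

```python
import re

# Simple pattern for typical email addresses; it will not cover every valid form.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


if __name__ == "__main__":
    print(redact_emails("Contact jane.doe@example.com for details."))
```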

Looking ahead, the combination of growing context windows and more sophisticated engineering methods promises AI models that can juggle richer, dynamic contexts, bringing us closer to truly versatile and intelligent assistants.

Frequently Asked Questions

What exactly does context engineering mean in AI?

Context engineering involves organizing and structuring the entire input data that a large language model processes, focusing on how different pieces of information are arranged in the AI’s context window for the most effective reasoning and output generation.

How is context engineering different from prompt engineering?

Prompt engineering primarily focuses on carefully crafting the immediate input text given to the AI. Context engineering, on the other hand, manages the entire set of inputs, including multiple documents, prior interactions, and data, and optimizes how all this information is combined and prioritized within the model’s context window.

Why is context engineering important with larger context windows?

Because modern AI models can process thousands of tokens at once, simply knowing what prompt to write isn’t enough. Context engineering exploits this expanded space by structuring data intelligently, resulting in more accurate, relevant, and consistent AI outputs that prompt engineering alone can’t achieve.

Conclusion

The rise of context engineering marks a pivotal shift in how we interact with and optimize AI language models. Rather than depending solely on prompt wording, the future lies in mastering context structuring: using expansive context windows with precision. By embracing this approach, AI practitioners can unlock deeper, more reliable AI performance, capitalizing on the strengths of the latest LLMs and paving the way for increasingly sophisticated applications.

For continued learning on AI advancements, explore research on context window management and LLM optimization to deepen your understanding of these groundbreaking techniques.