Contents

- 1 Introduction

- 2 What Is LLMOps and Why Is It Essential?

- 3 The Evolution from MLOps to LLMOps

- 4 Core Components of an LLMOps Architecture

- 5 Practical Example: AI Deployment Pipeline with LLMOps

- 6 Why LLM Monitoring Is a Game-Changer

- 7 LLMOps Tools and Platforms

- 8 Challenges and Best Practices in Adopting LLMOps

- 9 Conclusion

- 10 Frequently Asked Questions (FAQ)

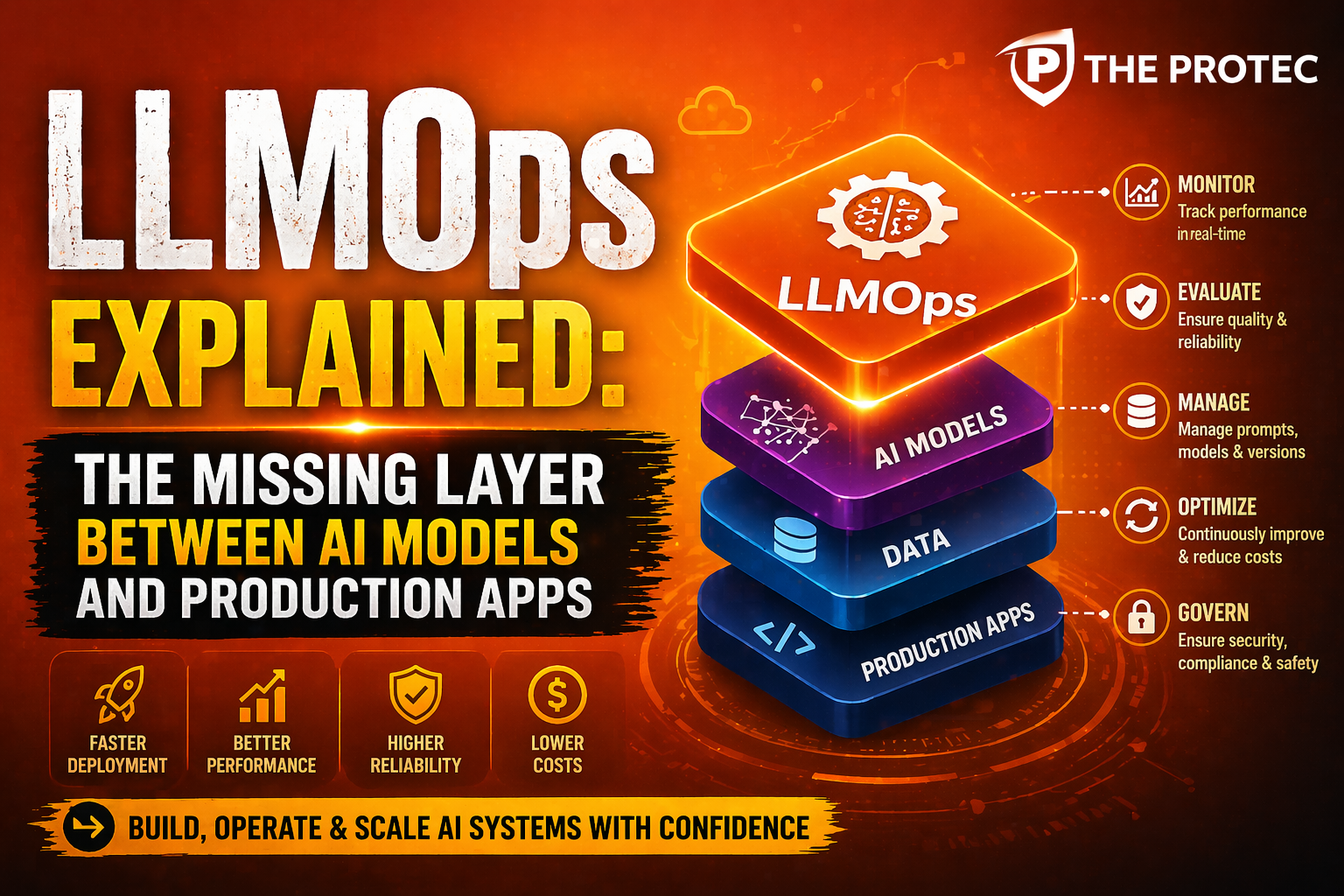

Introduction

The rapid advances in large language models (LLMs) have transformed AI applications, enabling breakthroughs in natural language understanding, generation, and interaction. However, managing these powerful models at scale presents unique challenges that traditional machine learning operations (MLOps) frameworks struggle to fully address. Enter LLMOps: a new discipline and the missing layer that connects AI models to reliable production applications. This article explores how LLMOps enhances AI deployment pipelines through specialized architectures and robust LLM monitoring techniques, making it indispensable for modern enterprises.

What Is LLMOps and Why Is It Essential?

LLMOps, shorthand for Large Language Model Operations, extends the concepts of MLOps to accommodate the distinct characteristics and operational demands of LLMs. While MLOps has focused on managing traditional machine learning models, LLMOps must address the complexities introduced by massive model sizes, dynamic behaviors, latency considerations, and prompt engineering requirements.

Unlike smaller models, LLMs often require heavy computational resources, fine-tuning, and complex orchestration to deliver consistent, context-aware outputs in real-time applications. LLMOps acts as the missing middleware layer that keeps the model lifecycle, from fine-tuning through deployment, scaling, and monitoring, optimized for production use cases.

In essence, LLMOps fills a critical gap by:

- Enabling smooth integration of LLMs into scalable, reliable AI applications.

- Providing advanced monitoring to detect model drift, degraded response quality, and latency issues.

- Supporting dynamic prompt and prompt template management.

- Streamlining deployment pipelines specifically optimized for LLM workloads.

- Enforcing compliance, security, and governance frameworks necessary for enterprise usage.

The Evolution from MLOps to LLMOps

MLOps has been instrumental in operationalizing classical machine learning models built on structured data and objective metrics, such as classification or regression accuracy. Typical MLOps practices include dataset versioning, model training pipelines, automated testing, continuous integration/continuous deployment (CI/CD), and monitoring keyed towards model performance and resource utilization.

However, LLMs introduce new challenges:

- Scale and latency: LLMs often comprise billions of parameters requiring sophisticated serving infrastructure and GPU/TPU orchestration.

- Unpredictable outputs: Unlike the outputs of deterministic models, LLM responses to the same input may vary from one call to the next, necessitating contextual response validation.

- Prompt engineering: The input prompts themselves are part of the operational pipeline and require systematic versioning and optimization.

- Continuous learning: Models need ongoing fine-tuning, or dynamic incorporation of user feedback, to stay relevant.

LLMOps evolves MLOps by introducing specialized layers that address these nuances, effectively bridging the model development lifecycle and real-world application demands.

Core Components of an LLMOps Architecture

An effective LLMOps architecture integrates several key components, orchestrated to streamline the AI deployment pipeline:

1. Model Management and Versioning

Given the frequent updates and fine-tuning of LLMs, managing versions becomes critical. LLMOps platforms emphasize robust model registries that track architecture changes, fine-tuning datasets, tokenizer updates, and selected hyperparameters.
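As a rough illustration of what such a registry entry might record, here is a minimal sketch. It assumes a hypothetical in-memory registry; the `ModelVersion` and `ModelRegistry` classes and all field names are invented for this example and are not the API of any particular platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ModelVersion:
    """One registry entry; every field name here is illustrative."""
    name: str
    version: int
    base_model: str            # identifier of the model that was fine-tuned
    dataset_id: str            # which fine-tuning dataset produced this version
    tokenizer_version: str
    hyperparameters: Dict[str, float] = field(default_factory=dict)


class ModelRegistry:
    """Hypothetical in-memory registry; a real one would persist its entries."""

    def __init__(self) -> None:
        self._entries: Dict[str, List[ModelVersion]] = {}

    def register(self, name: str, base_model: str, dataset_id: str,
                 tokenizer_version: str,
                 hyperparameters: Dict[str, float]) -> ModelVersion:
        """Append a new entry, numbering versions 1, 2, 3, ... per model name."""
        versions = self._entries.setdefault(name, [])
        entry = ModelVersion(name, len(versions) + 1, base_model, dataset_id,
                             tokenizer_version, dict(hyperparameters))
        versions.append(entry)
        return entry

    def latest(self, name: str) -> Optional[ModelVersion]:
        """Return the newest version, or None if the name is unknown."""
        versions = self._entries.get(name, [])
        return versions[-1] if versions else None

    def get(self, name: str, version: int) -> ModelVersion:
        """Look up a specific version, e.g. to reproduce or roll back."""
        versions = self._entries.get(name, [])
        if version < 1 or version > len(versions):
            raise KeyError(f"{name} has no version {version}")
        return versions[version - 1]
```

Keeping the dataset identifier and tokenizer version next to the weights is what lets a later rollback or audit reconstruct how a given version was produced.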

2. Prompt Lifecycle Management

Since prompt design directly impacts outputs, LLMOps introduces tools for building, storing, testing, and optimizing prompt templates. This includes A/B testing prompts and adjusting context windows for specific applications.
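One way to treat prompt templates as versioned artifacts is sketched below. The `PromptStore` class is hypothetical, and deriving a version id from a content hash is an assumption made for this example, not a convention the article prescribes. Templates use the `$placeholder` syntax of Python's standard `string.Template`.

```python
import hashlib
from string import Template
from typing import Dict, List


class PromptStore:
    """Hypothetical store that keeps every revision of each named template."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}

    @staticmethod
    def version_id(template_text: str) -> str:
        """Short content hash, so identical text always gets the same id."""
        return hashlib.sha256(template_text.encode("utf-8")).hexdigest()[:12]

    def save(self, name: str, template_text: str) -> str:
        """Store a revision (skipping exact repeats) and return its id."""
        history = self._versions.setdefault(name, [])
        if not history or history[-1] != template_text:
            history.append(template_text)
        return self.version_id(template_text)

    def history(self, name: str) -> List[str]:
        """All stored revisions of a template, oldest first."""
        return list(self._versions.get(name, []))

    def render(self, name: str, index: int = -1, **variables: str) -> str:
        """Fill in a stored revision (latest by default).

        Template.substitute raises KeyError if a placeholder has no value,
        which surfaces broken templates before they reach the model.
        """
        return Template(self._versions[name][index]).substitute(**variables)
```

For example, after saving two revisions of a `"support"` template, `store.render("support", 0, question="...")` renders the older revision, which makes side-by-side A/B comparisons straightforward.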

3. Scalable Serving Infrastructure

LLMOps requires scalable, low-latency serving infrastructure optimized for GPU/TPU usage, with autoscaling to manage fluctuating workloads. Model parallelism is often needed to fit large models across multiple devices, and quantization is often vital to reduce memory and compute consumption.
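To see why quantization matters for serving, a back-of-the-envelope estimate of weight memory helps. The sketch below counts weights only, assuming a fixed number of bytes per parameter; it ignores activations, the key-value cache, and the small overhead that quantization schemes add for scales and offsets. The 7-billion-parameter figure is a hypothetical example.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Hypothetical 7-billion-parameter model.
for dtype in ("fp32", "fp16", "int8", "int4"):
    print(dtype, weight_memory_gb(7e9, dtype), "GB")
```

Under these assumptions the same model drops from 28 GB of weights in fp32 to 14 GB in fp16 and 3.5 GB in int4, which can decide whether it fits on one accelerator or must be split across several.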

4. LLM Monitoring and Alerting

Traditional MLOps monitoring focuses on metrics like loss curves and inference speed. LLMOps extends this to include:

- Output coherence and relevance evaluations through automated scoring.

- Detection of hallucinations or inappropriate content via specialized classifiers.

- Prompt and response latency monitoring to maintain SLA compliance.

- Tracking user feedback to identify emerging failure modes.
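The latency item above can be sketched as a sliding-window percentile check. This is a minimal illustration, assuming the SLA is expressed as a p95 latency bound over the most recent requests; the `LatencyMonitor` class and its defaults are hypothetical.

```python
from collections import deque


class LatencyMonitor:
    """Tracks recent latencies and checks a percentile against an SLA bound."""

    def __init__(self, sla_ms: float, window: int = 100, percent: int = 95) -> None:
        self.sla_ms = sla_ms
        self.percent = percent
        self._samples = deque(maxlen=window)  # oldest samples drop off automatically

    def record(self, latency_ms: float) -> None:
        self._samples.append(latency_ms)

    def percentile(self) -> float:
        """Nearest-rank percentile over the current window."""
        if not self._samples:
            raise ValueError("no latency samples recorded yet")
        ordered = sorted(self._samples)
        # Integer ceiling of percent * n / 100, avoiding float rounding.
        rank = max(1, (self.percent * len(ordered) + 99) // 100)
        return ordered[rank - 1]

    def breached(self) -> bool:
        """True when the tracked percentile exceeds the SLA bound."""
        return bool(self._samples) and self.percentile() > self.sla_ms
```

A percentile is used rather than the mean because a small share of very slow responses can hurt users while barely moving the average.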

5. Compliance, Security, and Governance

Running LLMs in production demands strict processes to ensure data privacy, model fairness, and regulatory compliance. LLMOps platforms embed governance policies, access controls, and audit trails to meet enterprise standards.
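A minimal sketch of how access controls and an audit trail can work together is shown below. The roles, action names, and the `Governance` class are all hypothetical; a production system would load policies from configuration and write audit records to durable, tamper-resistant storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "ml_engineer": {"deploy_model", "update_prompt"},
    "analyst": {"view_logs"},
}


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str   # UTC, ISO 8601
    user: str
    role: str
    action: str
    allowed: bool


class Governance:
    """Checks permissions and records every attempt, allowed or denied."""

    def __init__(self) -> None:
        self.audit_log: List[AuditRecord] = []

    def authorize(self, user: str, role: str, action: str) -> bool:
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append(AuditRecord(
            datetime.now(timezone.utc).isoformat(), user, role, action, allowed))
        return allowed
```

Denied attempts are logged as well as permitted ones, since a record of refused actions is often exactly what an audit needs to see.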

Practical Example: AI Deployment Pipeline with LLMOps

To illustrate how LLMOps fits into modern AI deployment, consider the following practical pipeline example for an enterprise chatbot powered by an LLM:

Step 1: Model Fine-Tuning and Versioning

The base LLM from an open-source or proprietary provider is fine-tuned on domain-specific customer service transcripts. Model artifacts are stored in a versioned registry along with metadata capturing dataset details and training configurations.

Step 2: Prompt Engineering and Validation

Engineering teams develop prompt templates designed to guide the LLM’s responses according to company tone and policy. These prompts are versioned and tested against a validation set of queries to optimize response quality.
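The validation step might look roughly like the sketch below. It assumes a deliberately simple quality signal, whether each answer contains a set of required phrases, and takes the model as a plain callable so any client can be plugged in. The function names and the `{query}` placeholder are choices made for this example; real pipelines typically use richer scoring.

```python
from typing import Callable, Dict, List, Tuple

# A validation case: (user query, phrases the answer is expected to contain).
ValidationCase = Tuple[str, List[str]]


def pass_rate(template: str, cases: List[ValidationCase],
              model: Callable[[str], str]) -> float:
    """Fraction of cases whose answer contains every required phrase."""
    if not cases:
        raise ValueError("validation set is empty")
    passed = 0
    for query, required in cases:
        answer = model(template.format(query=query)).lower()
        if all(phrase.lower() in answer for phrase in required):
            passed += 1
    return passed / len(cases)


def best_template(templates: Dict[str, str], cases: List[ValidationCase],
                  model: Callable[[str], str]) -> Tuple[str, float]:
    """Score every named template and return the best name with its score."""
    scores = {name: pass_rate(text, cases, model)
              for name, text in templates.items()}
    winner = max(scores, key=lambda name: scores[name])
    return winner, scores[winner]
```

Running `best_template` over all candidate prompt versions before each release gives a repeatable comparison, and the resulting scores can be stored alongside the prompt version they belong to.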

Step 3: Deployment with Scalable Serving

The fine-tuned model and prompt templates are deployed onto a managed serving platform capable of autoscaling and GPU acceleration. The serving layer ensures request routing, load balancing, and caching for efficiency.
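The caching part of the serving layer can be illustrated with a small exact-match cache. This is a sketch under the assumption that repeated, identical questions may safely receive the same answer; the `ResponseCache` class is hypothetical. The key includes the model and prompt versions so that a new deployment never serves answers produced by an old one.

```python
from collections import OrderedDict
from typing import Callable, Tuple


class ResponseCache:
    """Least-recently-used cache in front of a generate(text) callable."""

    def __init__(self, generate: Callable[[str], str], max_entries: int = 1024) -> None:
        self._generate = generate
        self._max_entries = max_entries
        self._entries: "OrderedDict[Tuple[str, str, str], str]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, model_version: str, prompt_version: str, user_input: str) -> str:
        # Normalize case and whitespace so trivial variations share an entry.
        normalized = " ".join(user_input.lower().split())
        key = (model_version, prompt_version, normalized)
        if key in self._entries:
            self._entries.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return self._entries[key]
        self.misses += 1
        response = self._generate(user_input)
        self._entries[key] = response
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)   # evict least recently used
        return response
```

Exact-match caching only helps with literally repeated inputs, and it is a poor fit where varied or personalized responses are expected.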

Step 4: LLM Monitoring and Feedback Loop

In production, monitoring agents continuously evaluate response correctness and latency and detect content anomalies. Logs and telemetry feed into dashboards with real-time alerts. When issues appear, automated triggers can switch traffic to a fallback model or alert engineers.
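An automated fallback trigger of this kind can be sketched as a simple failure-rate check over recent requests. The `FallbackRouter` class, its thresholds, and the choice to stay on the fallback until an engineer resets it are assumptions made for this illustration.

```python
from collections import deque


class FallbackRouter:
    """Routes to a fallback model once recent checks fail too often."""

    def __init__(self, primary: str, fallback: str, window: int = 20,
                 max_failure_rate: float = 0.5, min_samples: int = 5) -> None:
        self.primary = primary
        self.fallback = fallback
        self.max_failure_rate = max_failure_rate
        self.min_samples = min_samples
        self._results = deque(maxlen=window)  # True means the check passed
        self.tripped = False

    def record(self, passed: bool) -> None:
        """Record one monitoring result and trip if the failure rate is too high."""
        self._results.append(passed)
        if len(self._results) >= self.min_samples:
            failures = self._results.count(False)
            if failures / len(self._results) > self.max_failure_rate:
                self.tripped = True

    def active_model(self) -> str:
        return self.fallback if self.tripped else self.primary

    def reset(self) -> None:
        """Return to the primary model, e.g. after engineers resolve the issue."""
        self._results.clear()
        self.tripped = False
```

Requiring a minimum number of samples before tripping keeps a single early failure from forcing a switch.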

Step 5: Continuous Improvement

User feedback and fresh interaction data feed back into the training pipeline, enabling iterative fine-tuning and prompt adjustments managed through the LLMOps platform’s workflow orchestration.
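As a sketch of the first stage of that loop, the code below turns rated interactions into candidate fine-tuning examples. The record fields (`prompt`, `response`, `rating`), the 1 to 5 rating scale, and the prompt/completion output layout are all assumptions for this example; the format a given fine-tuning pipeline expects may differ.

```python
import json
from typing import Dict, Iterable, List, Union

Interaction = Dict[str, Union[str, int]]


def build_finetune_examples(interactions: Iterable[Interaction],
                            min_rating: int = 4) -> List[Dict[str, str]]:
    """Keep well-rated, de-duplicated interactions as training examples."""
    seen = set()
    examples: List[Dict[str, str]] = []
    for item in interactions:
        if int(item["rating"]) < min_rating:
            continue                       # drop poorly rated answers
        key = (str(item["prompt"]).strip(), str(item["response"]).strip())
        if key in seen:
            continue                       # drop exact duplicates
        seen.add(key)
        examples.append({"prompt": key[0], "completion": key[1]})
    return examples


def to_jsonl(examples: List[Dict[str, str]]) -> str:
    """Serialize examples as JSON Lines, one example per line."""
    return "\n".join(json.dumps(e, ensure_ascii=False) for e in examples)
```

In practice this filtering would normally be followed by privacy scrubbing and human review before any data reaches a training run.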

Why LLM Monitoring Is a Game-Changer

Monitoring large language models extends beyond traditional uptime and error tracking. Since the output quality can vary with subtle prompt changes or unseen input domains, LLM monitoring systems employ advanced techniques:

- Semantic similarity scoring: Automated checks comparing model answers against a knowledge base or prior-approved responses.

- Hallucination detection: Using secondary models or heuristics to identify fabricated or misleading responses.

- Bias and toxicity filtering: Real-time scanning for potentially harmful language with immediate mitigation.

- Inference time analytics: Monitoring latency spikes to maintain user experience standards.

These capabilities allow product teams to maintain trust and reliability when rolling out LLM-powered features to millions of users.
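To make the semantic similarity check concrete, here is a minimal sketch that compares an answer against prior-approved responses. It uses cosine similarity over word counts as a simple stand-in; production systems generally use embedding models, which capture meaning rather than word overlap. The function names and the 0.5 threshold are illustrative assumptions.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def _word_counts(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity of word-count vectors; 0.0 if either text is empty."""
    va, vb = _word_counts(a), _word_counts(b)
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm = (math.sqrt(sum(c * c for c in va.values()))
            * math.sqrt(sum(c * c for c in vb.values())))
    return dot / norm if norm else 0.0


def best_match(answer: str, approved: List[str]) -> Tuple[str, float]:
    """Return the approved response most similar to the answer, with its score."""
    if not approved:
        raise ValueError("no approved responses to compare against")
    scored = [(text, cosine_similarity(answer, text)) for text in approved]
    return max(scored, key=lambda pair: pair[1])


def needs_review(answer: str, approved: List[str], threshold: float = 0.5) -> bool:
    """Flag answers that are not close to any approved response."""
    return best_match(answer, approved)[1] < threshold
```

An answer flagged by `needs_review` is not necessarily wrong; it simply falls outside what has been approved before and is a candidate for a secondary check.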

LLMOps Tools and Platforms

A growing ecosystem supports LLMOps workflows, including:

- TensorFlow Extended (TFX) adapted for LLM fine-tuning and deployment pipelines.

- Kubeflow for orchestrating scalable distributed training and serving on Kubernetes.

- Dedicated LLMOps platforms like Neptune.ai’s LLMOps tooling that focus on prompt management and advanced model monitoring.

- Open-source frameworks for prompt engineering and testing, such as LangChain, which integrate into the LLMOps ecosystem.

Challenges and Best Practices in Adopting LLMOps

While LLMOps addresses many pain points, enterprises face challenges including high infrastructure costs, skill gaps among development teams, and evolving regulatory landscapes. To successfully implement LLMOps, consider these best practices:

- Start Small: Pilot LLMOps concepts on a limited set of use cases to refine processes before enterprise-wide adoption.

- Cross-Functional Collaboration: Involve data scientists, software engineers, security, and product managers in the LLMOps lifecycle.

- Automate Extensively: Use CI/CD pipelines and automated monitoring to reduce manual overhead and ensure rapid incident response.

- Maintain Documentation and Audits: Ensure regulatory compliance with transparent model and data lineage records.

- Invest in Observability: Deep monitoring beyond traditional metrics enables early detection of subtle issues.

Conclusion

LLMOps represents the vital next step in operationalizing large language models, bridging the gap between experimental AI research and scalable, trustworthy production applications. By integrating dedicated model management, prompt engineering, specialized monitoring, and governance into the AI deployment pipeline, LLMOps empowers organizations to harness LLMs’ full potential at scale.

As enterprises increasingly rely on LLM-powered solutions, embracing LLMOps methodologies and tooling will be essential to delivering resilient, efficient, and compliant AI experiences.

Frequently Asked Questions (FAQ)

What differentiates LLMOps from traditional MLOps?

LLMOps extends MLOps by addressing challenges unique to large language models, such as managing massive model sizes, incorporating prompt lifecycle management, handling dynamic outputs, and implementing more advanced monitoring for hallucinations and toxicity.

How does prompt management fit within LLMOps?

Prompt management treats prompts as first-class artifacts that require versioning, testing, and optimization because prompt design directly affects LLM output quality. LLMOps workflows ensure prompt templates are tracked and refined systematically.

Can existing MLOps platforms support LLMOps workflows?

Many MLOps platforms provide foundational capabilities, but LLMOps often requires additional specialized tools for scalable serving, prompt engineering, and advanced monitoring. Platforms like Kubeflow or TFX can be extended, but dedicated LLMOps solutions are emerging to cover these needs more fully.