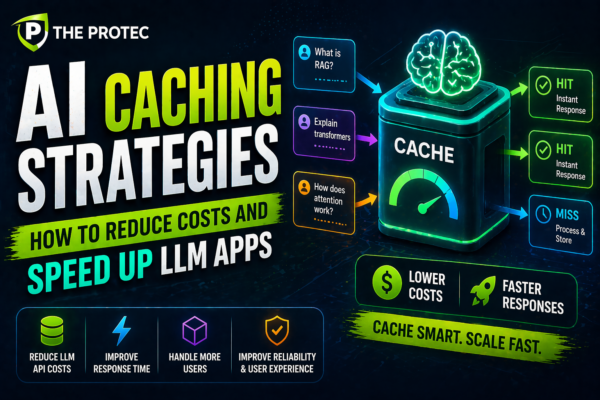

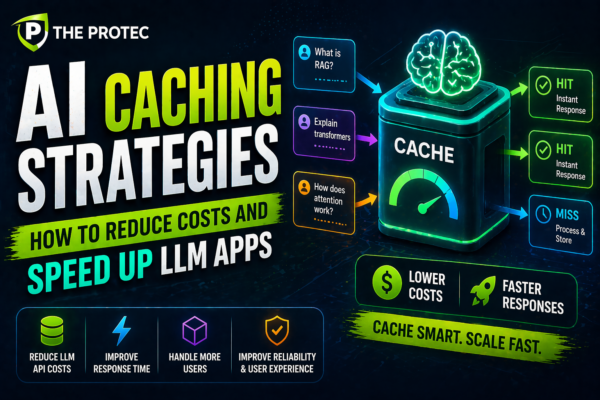

AI Caching Strategies: How to Reduce Costs and Speed Up LLM Apps

Explore how AI caching strategies like token and semantic caching optimize large language model applications, cutting costs and reducing response times.
