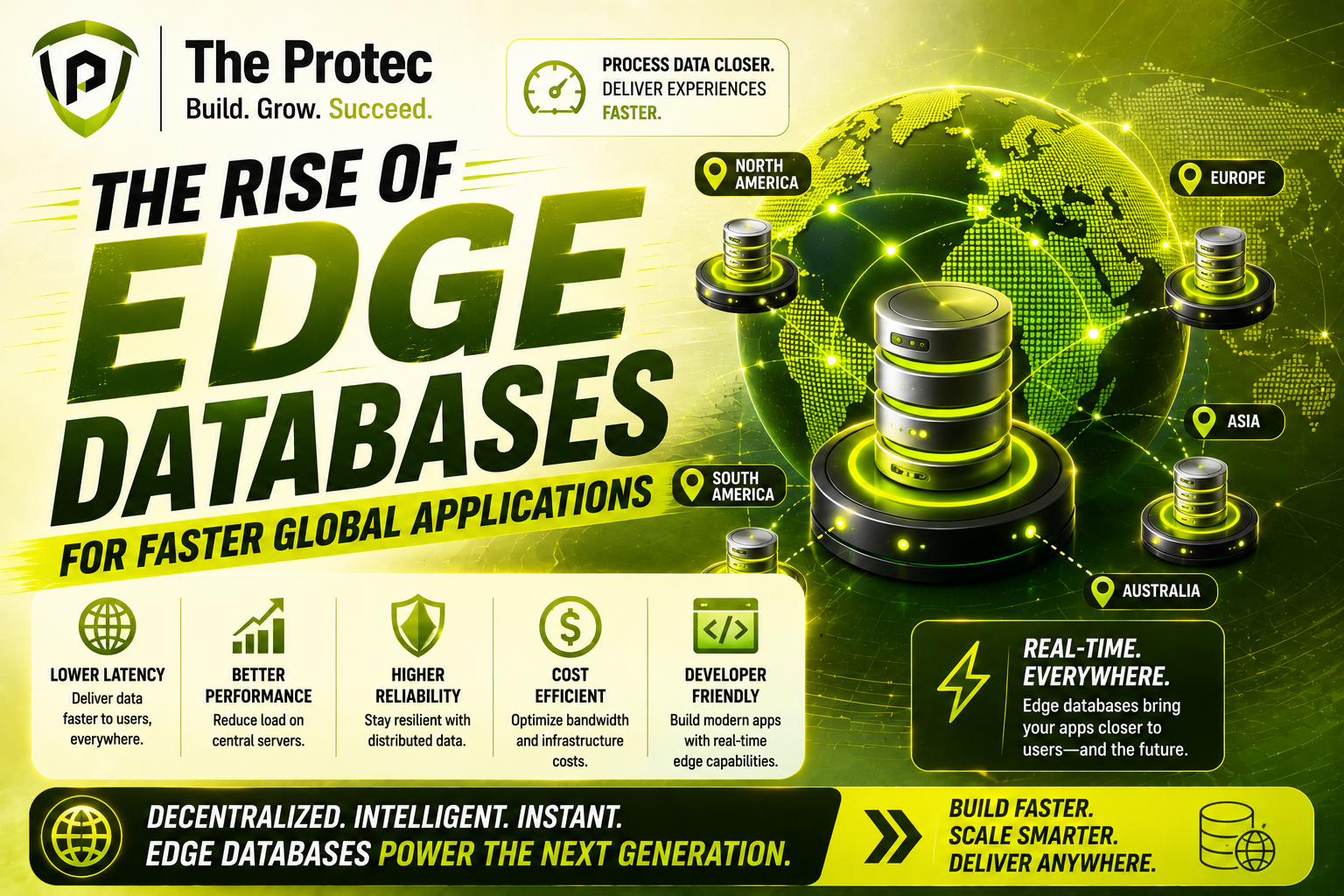

Introduction: Why Edge Databases Matter Now

Modern applications are no longer built for a single city, region, or data center. They serve users across continents, sync activity from mobile devices and IoT endpoints, power real-time collaboration, and respond instantly to changing conditions. In that environment, every extra millisecond matters. A traditional centralized database can become a bottleneck when users are far from the primary server, especially when applications need low-latency data for live interactions, personalization, payments, or operational decisions.

This is where edge databases are reshaping the architecture of modern software. By moving data storage and querying closer to the point of use, edge databases reduce round-trip time, improve resilience, and make global applications feel local. They are part of a wider shift toward distributed databases, but with a sharper focus on speed at the network edge. Instead of treating the database as a distant core system, developers increasingly deploy data layers across regions, devices, and edge nodes so applications can read, write, and react faster.

The rise of edge databases is not just a performance trend. It reflects a broader change in how digital products are designed. Users expect instant updates, always-on availability, and seamless experiences no matter where they are. Businesses want to process data closer to where it is generated, reduce bandwidth costs, and keep services running even during connectivity issues. As global traffic grows and real-time use cases multiply, edge databases are becoming essential infrastructure for modern applications.

What Are Edge Databases?

Edge databases are databases designed to run close to users, devices, or application endpoints rather than only in a centralized cloud region. They can operate on edge servers, distributed clusters, branch locations, mobile devices, or other local nodes. The core idea is simple: place data where it is needed most so applications can access it with minimal delay.

Unlike traditional databases that depend on a single primary location, edge databases often use replication, synchronization, or distributed consensus to keep data available across multiple sites. Some are built for strong consistency across nodes, while others prioritize availability and local responsiveness with eventual consistency or conflict resolution strategies. The right approach depends on the use case, but the common goal is faster access to low-latency data.

Edge databases are often confused with generic distributed databases, and the two do overlap. However, edge databases emphasize locality. They are tuned for environments where users are geographically dispersed, network quality varies, and applications must continue working even when the connection to the core cloud is slow or interrupted. That makes them especially relevant for retail, logistics, gaming, fintech, media, and connected devices.

Why Latency Has Become a Business Problem

Latency used to be a technical metric that only infrastructure teams worried about. Today, it directly affects conversion rates, engagement, customer satisfaction, and revenue. When a user taps a button, opens a dashboard, submits a payment, or joins a live session, they expect the app to respond immediately. If data must travel halfway around the world before the server can answer, the experience degrades quickly.

In global applications, latency compounds across multiple steps. A single request may involve authentication, database reads, cache checks, analytics events, and third-party calls. If the database is far away, every dependent operation slows down. For applications with a real-time component, such as multiplayer gaming, live commerce, ride dispatch, fraud detection, or collaborative editing, even small delays can cause visible problems.

Businesses also face the cost of serving users from centralized infrastructure. Cross-region data transfer can increase bandwidth expenses and create operational complexity. In addition, centralized systems are more vulnerable to regional outages or network congestion. Edge databases help address these issues by reducing the distance between users and the data they need.

How Edge Databases Reduce Latency in Global Apps

The main advantage of edge databases is simple: shorter distance means faster response. When data is stored closer to the user, read and write operations complete more quickly because requests do not have to travel as far. This improvement is especially noticeable for applications with geographically distributed audiences.

For example, imagine a customer in Singapore interacting with an application whose primary database is in North America. Every request must cross multiple network hops and undersea routes, adding unavoidable delay. If that application uses an edge database replica or local node in Asia-Pacific, the request can be served much faster. The application feels responsive, and the user experience improves immediately.
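To put rough numbers on this, the short Python sketch below estimates the physical floor on round-trip time. It assumes light in optical fiber covers about 200,000 km per second and uses hypothetical route lengths of 15,000 km (a cross-Pacific trip to a North American primary) and 300 km (a nearby edge node). Real paths are longer than straight lines and add routing and processing delay, so measured times will be higher. The sketch also shows how the cost compounds when one request makes several dependent database calls in sequence.

```python
# Rough physical floor on network round-trip time over optical fiber.
# Assumption: light in fiber travels about 200,000 km/s (roughly two-thirds
# of its speed in a vacuum). Route lengths below are hypothetical.
FIBER_KM_PER_MS = 200.0  # 200,000 km/s expressed per millisecond


def min_rtt_ms(route_km: float) -> float:
    """Lowest possible round-trip time for a one-way route of route_km."""
    return 2 * route_km / FIBER_KM_PER_MS


def sequential_wait_ms(route_km: float, dependent_queries: int) -> float:
    """Floor on total wait when each query must finish before the next starts."""
    return dependent_queries * min_rtt_ms(route_km)


for label, km in [("cross-Pacific primary", 15_000), ("nearby edge node", 300)]:
    print(f"{label}: {min_rtt_ms(km):.0f} ms per round trip, "
          f"{sequential_wait_ms(km, 5):.0f} ms for 5 dependent queries")
```

Under these assumptions the distant primary cannot answer five dependent queries in less than 750 ms, while the nearby node's floor is 15 ms. No amount of query tuning removes that gap; only shortening the distance does.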

Edge databases also help reduce the impact of network variability. In real-world environments, latency is not constant. Congestion, packet loss, routing changes, and regional outages can all slow down centralized access. By keeping a local copy of relevant data at the edge, applications can continue to serve users even when the connection to the core system is unstable.
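A minimal way to express this is a replica that always answers reads from its local copy and simply reports how old that copy is. The sketch below is hypothetical: `LocalReplica`, `sync_from_core`, and the staleness bound are illustrative names and parameters, not a real product's API. Freshness is tracked per replica rather than per key to keep it short, and timestamps are passed in explicitly so the example is deterministic.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional, Tuple


@dataclass
class LocalReplica:
    """Hypothetical edge replica whose reads never wait on the core system.

    sync_from_core() runs whenever the link to the core is healthy; read()
    always answers from the local copy and reports whether that copy is
    recent enough, so the caller decides if stale data is acceptable.
    """
    data: Dict[str, Any] = field(default_factory=dict)
    last_sync: Optional[float] = None  # time of the last successful sync

    def sync_from_core(self, snapshot: Dict[str, Any], now: float) -> None:
        self.data.update(snapshot)
        self.last_sync = now

    def read(self, key: str, now: float, max_staleness_s: float) -> Tuple[Any, bool]:
        """Return (value, is_fresh); (None, False) if nothing is stored locally."""
        if key not in self.data or self.last_sync is None:
            return None, False
        is_fresh = (now - self.last_sync) <= max_staleness_s
        return self.data[key], is_fresh


replica = LocalReplica()
replica.sync_from_core({"price:sku-1": 19.99}, now=100.0)
# The core link drops after this point; reads still succeed locally.
print(replica.read("price:sku-1", now=130.0, max_staleness_s=60.0))  # (19.99, True)
print(replica.read("price:sku-1", now=200.0, max_staleness_s=60.0))  # (19.99, False)
```

Returning a freshness flag instead of failing lets each feature choose its own tolerance: a product page might show a slightly old price, while a checkout flow might refuse to proceed.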

Another key benefit is write locality. Some edge database architectures allow certain writes to happen locally and then sync changes upstream or across peers. This avoids forcing every transaction to wait for a faraway primary node. For applications that need quick updates, such as inventory checks, user activity tracking, or device telemetry, that can make a major difference.
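One common way to implement local writes is a local commit plus an outbox of pending changes. The sketch below assumes a hypothetical `send_upstream` callable that raises `ConnectionError` when the core is unreachable. It illustrates write locality only and does nothing about conflicting writes made at other nodes.

```python
from collections import deque
from typing import Callable, Deque, Dict


class EdgeWriter:
    """Hypothetical edge node that commits writes locally and syncs them later.

    write() updates local state immediately and records the change in an
    outbox. flush() sends queued changes upstream in order and stops at the
    first failure, so changes are neither lost nor reordered while the
    upstream link is down.
    """

    def __init__(self, send_upstream: Callable[[dict], None]) -> None:
        self.state: Dict[str, int] = {}
        self.outbox: Deque[dict] = deque()
        self._send = send_upstream

    def write(self, key: str, value: int) -> None:
        self.state[key] = value  # readable at this node right away
        self.outbox.append({"key": key, "value": value})

    def flush(self) -> int:
        """Push queued changes upstream; return how many were delivered."""
        sent = 0
        while self.outbox:
            try:
                self._send(self.outbox[0])
            except ConnectionError:
                break  # keep the change queued and retry on the next flush
            self.outbox.popleft()
            sent += 1
        return sent


upstream = []  # stands in for the core system in this example
node = EdgeWriter(send_upstream=upstream.append)
node.write("stock:sku-1", 41)
node.write("stock:sku-2", 7)
print(node.state["stock:sku-1"])  # 41, before anything has synced
print(node.flush(), len(upstream))  # 2 2
```

Because a change leaves the outbox only after `send_upstream` returns, a failure that happens after delivery (for example, a lost acknowledgment) causes a resend, so the upstream side should apply changes idempotently.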

In practice, the best performance gains come from a combination of strategies:

- Local reads from edge replicas or shards

- Selective write handling at the nearest node

- Smart synchronization to keep data consistent

- Integration with caches and content delivery layers

- Placement policies that align data with user geography
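As an illustration of the first and last items, the sketch below pairs a placement policy (which regions hold which dataset) with nearest-replica routing for reads. Region names, coordinates, and datasets are hypothetical, and great-circle distance is only a proxy for latency; production routing usually relies on measured latency or on DNS-based and anycast steering.

```python
import math
from typing import Dict, List, Tuple

LatLon = Tuple[float, float]

# Hypothetical regions and placement policy, for illustration only.
REGIONS: Dict[str, LatLon] = {
    "us-east": (39.0, -77.5),
    "eu-west": (53.3, -6.3),
    "ap-southeast": (1.35, 103.8),
}
PLACEMENT: Dict[str, List[str]] = {
    "catalog": ["us-east", "eu-west", "ap-southeast"],  # replicated everywhere
    "orders": ["us-east"],                              # kept in one home region
}


def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two (lat, lon) points, in kilometers."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def pick_read_region(user: LatLon, dataset: str) -> str:
    """Send a read to the closest region that holds a replica of the dataset."""
    return min(PLACEMENT[dataset], key=lambda r: haversine_km(user, REGIONS[r]))


singapore_user = (1.29, 103.85)
print(pick_read_region(singapore_user, "catalog"))  # ap-southeast
print(pick_read_region(singapore_user, "orders"))   # us-east
```

Datasets that live in a single home region, like `orders` here, still force a long trip for distant users, which is why placement belongs in the design rather than being left to the router.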

Edge Databases vs. Traditional Distributed Databases

Distributed databases have been around for years, but edge databases represent a more specialized evolution. Traditional distributed databases focus on spreading data across multiple machines or regions to improve scalability and reliability. Edge databases take that concept further by optimizing for locality and responsiveness at the network edge.

In a standard distributed database setup, nodes may still be anchored around a core cloud region or controlled data center environment. Edge databases, by contrast, are designed to operate in environments where physical distance, intermittent connectivity, and local autonomy matter as much as scale. That makes them a better fit for modern applications that must serve users almost instantly across many locations.

Another difference is operational intent. Distributed databases often emphasize global consistency, replication, and fault tolerance. Edge databases often balance consistency against speed, allowing applications to define where strict synchronization is necessary and where local autonomy is acceptable. This flexibility is important for low-latency data workloads because not every piece of information needs to be globally synchronized before it can be useful.

That said, the two models are converging. Many modern platforms combine distributed database principles with edge deployment patterns, enabling developers to choose the right mix of consistency, locality, and resilience. The strongest solutions are those that make the edge feel like a natural extension of the data layer rather than a separate system.

Current Trends Driving Edge Database Adoption

Several technology shifts are accelerating demand for edge databases. One major factor is the growth of real-time applications. Users now expect live updates in chat, commerce, gaming, logistics, and analytics. These workloads are difficult to serve efficiently from a single central database, especially at global scale.

Another major trend is the expansion of AI-powered applications that depend on immediate access to context. Recommendation engines, fraud models, copilots, and agentic workflows often need fresh data at the moment of interaction. If the database is too far away, the entire experience slows down. Edge databases help keep relevant context close to the decision point.

IoT and connected devices are also pushing the edge forward. Factories, vehicles, retail systems, sensors, and smart infrastructure generate enormous streams of data. Sending every event to a distant cloud region is often inefficient and sometimes impractical. Edge databases let organizations filter, store, and act on data locally before synchronizing what matters upstream.
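A simple form of local filtering is to summarize each window of readings on the edge node and forward only the summary and unusual values. The function below is a sketch; the fixed-threshold rule for what counts as an anomaly is an illustrative assumption, not a recommended detection method.

```python
from statistics import mean
from typing import Dict, List


def summarize_window(readings: List[float], threshold: float) -> Dict[str, object]:
    """Reduce one window of raw sensor readings to what is worth syncing.

    The raw readings stay on the edge node; only a summary plus any readings
    above `threshold` (treated here as anomalies) are sent upstream.
    """
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        "anomalies": [r for r in readings if r > threshold],
    }


window = [20.0, 21.0, 22.0, 35.0]  # illustrative temperature readings
print(summarize_window(window, threshold=30.0))
# {'count': 4, 'mean': 24.5, 'max': 35.0, 'anomalies': [35.0]}
```

For a sensor reporting many times per second, sending one summary per window instead of every raw reading is where the bandwidth savings come from.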

Security and data sovereignty concerns are another driver. Many organizations need to control where data resides and how it moves across borders. Edge deployments can help meet local compliance requirements by keeping sensitive data within specific geographic zones while still supporting a global application footprint.
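In code, residency often comes down to filtering the set of regions a dataset may be replicated to. The rule table below is hypothetical: the data classes and region names are illustrative and do not encode any particular law. It fails closed, so data without a classification is not replicated at all.

```python
from typing import Dict, List, Optional, Set

# Hypothetical residency rules: data classes and region names are illustrative
# and are not derived from any specific regulation or product.
RESIDENCY: Dict[str, Optional[Set[str]]] = {
    "eu_personal_data": {"eu-west", "eu-central"},
    "public_catalog": None,  # None means no location restriction
}


def allowed_replica_regions(data_class: str, candidates: List[str]) -> List[str]:
    """Filter candidate regions down to those where this data may be stored."""
    if data_class not in RESIDENCY:
        return []  # unclassified data is not replicated anywhere (fail closed)
    allowed = RESIDENCY[data_class]
    if allowed is None:
        return list(candidates)
    return [r for r in candidates if r in allowed]


regions = ["us-east", "eu-west", "ap-southeast"]
print(allowed_replica_regions("eu_personal_data", regions))  # ['eu-west']
print(allowed_replica_regions("public_catalog", regions))    # all three regions
```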

Finally, cloud-native development has made the edge more accessible. Container orchestration, lightweight runtimes, improved replication tools, and better observability have made it easier to deploy database functionality outside traditional centralized environments. As a result, edge databases are moving from niche use cases into mainstream architecture planning.

Use Cases Where Edge Databases Deliver the Biggest Impact

Edge databases are not the right answer for every workload, but they are highly valuable in scenarios where speed and locality matter. One of the clearest examples is global e-commerce. Product availability, pricing, session state, and checkout experiences all benefit from low-latency data access. A customer should not have to wait for a faraway database to confirm stock or process a cart action.

Another strong use case is collaborative software. Shared documents, design tools, and team workspaces require responsive updates from users in many locations. Edge databases can help reduce the lag between a user action and the appearance of that change for collaborators.

Gaming is another obvious fit. Multiplayer games rely on fast state synchronization, matchmaking, inventory updates, and session management. Edge databases can improve responsiveness and reduce jitter, especially when players are spread across regions.

In logistics and transportation, edge databases support route updates, fleet telemetry, dispatch decisions, and warehouse operations. These systems often need to keep working even when connectivity is inconsistent. Local data handling reduces dependence on a distant core system.

Financial services also benefit from edge patterns, especially for fraud detection, transaction monitoring, and customer-facing apps. While some financial workloads require central control, many interaction layers can gain from faster local reads and carefully designed replication.

Media and content platforms use edge databases to personalize recommendations, manage viewing state, and update engagement metrics quickly. The result is a smoother experience for users spread across multiple regions.

Challenges and Trade-Offs to Consider

Edge databases solve latency problems, but they introduce design trade-offs. The most important is data consistency. When data is stored and updated in multiple locations, keeping every copy perfectly synchronized can be difficult. Developers must decide whether their application can tolerate slight delays in propagation or whether it needs strong, immediate consistency.

Operational complexity is another challenge. Managing data across many edge nodes requires careful monitoring, deployment automation, failover planning, and security controls. Without strong tooling, the edge can become difficult to maintain.

There is also the question of conflict resolution. If two edge locations accept updates to the same record at nearly the same time, the system needs a clear strategy to reconcile those changes. That may involve versioning, timestamps, routing each record's writes through a single elected leader so that conflicts cannot arise, or application-level merge rules.
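Last-writer-wins (LWW), which combines timestamps with a deterministic tie-breaker, is one of the simplest of these strategies. The sketch below assumes each write carries a writer-assigned timestamp and node ID; the `Versioned` type and `resolve_lww` function are illustrative, not taken from a specific database.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Versioned:
    value: str
    timestamp_ms: int  # assigned by the writing node's clock
    node_id: str       # breaks timestamp ties deterministically


def resolve_lww(a: Versioned, b: Versioned) -> Versioned:
    """Last-writer-wins: keep the write with the larger (timestamp_ms, node_id).

    Assuming no two writes share both a timestamp and a node_id, every node
    that sees the same two versions picks the same winner, regardless of
    the order in which they arrive.
    """
    return max(a, b, key=lambda v: (v.timestamp_ms, v.node_id))


from_singapore = Versioned("blue", 1_000, "sg-1")
from_frankfurt = Versioned("green", 1_000, "fra-1")
print(resolve_lww(from_singapore, from_frankfurt).value)  # blue (tie broken by node_id)
print(resolve_lww(from_frankfurt, from_singapore).value)  # blue
```

LWW keeps replicas convergent but silently discards the losing write, and it trusts node clocks, so clock skew can let an older write win. Data where every update matters usually calls for versioning or application-level merge rules instead.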

Cost can be a factor as well. While edge databases can reduce bandwidth and improve performance, they may require additional infrastructure, replication logic, and observability investment. The architecture must justify itself by delivering measurable gains in user experience or operational efficiency.

For these reasons, the best implementations start with specific use cases rather than broad assumptions. The edge should be applied where latency reduction has clear value, not simply because it is fashionable.

Best Practices for Designing with Edge Databases

To get the most from edge databases, teams should design with data locality in mind from the start. That means identifying which data needs to be fast, which data must be globally shared, and which data can be synchronized asynchronously.

Start by classifying workloads into categories such as read-heavy, write-heavy, session-based, or event-driven. Not every table or dataset needs edge placement. In many applications, only a subset of data benefits from being close to users. A selective approach often delivers the best balance of performance and manageability.

It also helps to define clear consistency requirements. Some data can be eventually consistent, while other operations, such as payments or authorization changes, may require stricter guarantees. The architecture should reflect those differences rather than forcing every data type into the same model.
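One way to make those differences explicit is a per-operation policy table that decides where a write is committed. The categories and routing targets below are hypothetical; the design point is that operation types nobody has classified default to the stricter path.

```python
from enum import Enum
from typing import Dict


class Consistency(Enum):
    STRONG = "strong"      # must be confirmed by the primary before acknowledging
    EVENTUAL = "eventual"  # may be acknowledged at the edge and synced later


# Hypothetical classification; each application would define its own.
POLICY: Dict[str, Consistency] = {
    "payment": Consistency.STRONG,
    "authorization_change": Consistency.STRONG,
    "session_state": Consistency.EVENTUAL,
    "activity_event": Consistency.EVENTUAL,
}


def route_write(kind: str) -> str:
    """Return where a write of this kind should be committed."""
    level = POLICY.get(kind, Consistency.STRONG)  # unknown kinds take the safe path
    return "primary" if level is Consistency.STRONG else "local-edge"


print(route_write("payment"))         # primary
print(route_write("activity_event"))  # local-edge
```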

Monitoring is essential. Teams should track latency, replication lag, conflict rates, and cache hit rates, and break each of these metrics down by region. Without visibility, it is hard to know whether edge placement is improving the user experience or simply adding complexity.
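Replication lag is often approximated as the gap between the primary's latest commit time and the commit time of the newest change a replica has applied. The sketch below computes that per region and flags regions above a threshold; the region names, timestamps, and 2-second threshold are illustrative assumptions.

```python
from typing import Dict, List


def replication_lag_s(primary_commit_ts: float, replica_applied_ts: float) -> float:
    """Approximate lag: how far the replica's newest applied commit trails the primary.

    Clamped at zero because the two timestamps may be sampled at slightly
    different moments.
    """
    return max(0.0, primary_commit_ts - replica_applied_ts)


def lagging_regions(primary_commit_ts: float, applied_by_region: Dict[str, float],
                    threshold_s: float) -> List[str]:
    """Regions whose lag exceeds threshold_s, worst first."""
    lags = {region: replication_lag_s(primary_commit_ts, ts)
            for region, ts in applied_by_region.items()}
    over = [region for region, lag in lags.items() if lag > threshold_s]
    return sorted(over, key=lambda region: lags[region], reverse=True)


applied = {"eu-west": 999.5, "ap-southeast": 992.0, "us-west": 996.0}
print(lagging_regions(1000.0, applied, threshold_s=2.0))  # ['ap-southeast', 'us-west']
```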

Security should be built in from the beginning. Edge nodes often expand the attack surface, so encryption, access control, secret management, and audit logging must be consistent across regions. Data protection policies should also account for local regulations and residency requirements.

Finally, test under realistic conditions. Simulated latency, regional failover, and degraded network scenarios reveal how well the architecture performs when it matters most. A design that works in a single lab region may behave very differently in a truly global environment.
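A lightweight starting point is to wrap data-access calls so tests can add delay and random failures. The helper below is a hypothetical sketch; `with_network_faults` and its parameters are illustrative, and a seeded random generator keeps runs repeatable.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_network_faults(call: Callable[[], T], added_latency_s: float,
                        failure_rate: float, rng: random.Random) -> T:
    """Run `call` as if over a degraded link: add delay, then maybe fail."""
    time.sleep(added_latency_s)  # simulated extra round-trip time
    if rng.random() < failure_rate:
        raise ConnectionError("injected network failure")
    return call()


# Example: how often does a read survive a link that drops 20% of requests?
rng = random.Random(42)  # fixed seed so the run is repeatable
ok = 0
for _ in range(1_000):
    try:
        with_network_faults(lambda: "row", added_latency_s=0.0, failure_rate=0.2, rng=rng)
        ok += 1
    except ConnectionError:
        pass
print(f"{ok / 1_000:.0%} of simulated reads succeeded")
```

Application-level injection like this exercises retry and fallback logic. Network-level tools, such as the Linux `tc` command with the `netem` queuing discipline, can add delay and packet loss beneath the application as well.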

The Future of Low Latency Data at the Edge

The future of modern application architecture is increasingly distributed, intelligent, and edge-aware. As more businesses build global products, the demand for low-latency data will continue to rise. Edge databases are positioned to play a central role in that evolution because they bring the database closer to the user without abandoning the benefits of distributed systems.

We are likely to see tighter integration between edge databases, streaming platforms, application runtimes, and AI inference layers. This will make it easier to process events locally, make decisions faster, and sync only the data that truly needs to travel. In many cases, the edge will become the default place for short-lived, high-value, or interaction-sensitive data.

At the same time, tooling will continue to improve. Better observability, automated placement, conflict management, and hybrid consistency models will reduce the friction of adopting edge architectures. As these capabilities mature, edge databases will become less of a specialized choice and more of a standard option for global applications.

FAQ

What is the main benefit of edge databases?

The main benefit is reduced latency. By storing and processing data closer to users or devices, edge databases make applications respond faster and feel more local, especially for global audiences.

Are edge databases the same as distributed databases?

Not exactly. Edge databases are a type of distributed database architecture, but they are specifically optimized for locality, low-latency data access, and operation near users or devices at the network edge.

Do edge databases replace centralized databases?

No. In most cases, they complement centralized systems. Core databases still often handle system-of-record data, while edge databases manage latency-sensitive reads, local writes, or regional workloads.

Are edge databases suitable for all applications?

No. They are most useful for applications that serve global users, require real-time interaction, or need to continue operating with limited connectivity. Simpler applications may not need the added complexity.

How do edge databases help with global app performance?

They reduce the physical and network distance between the user and the data. That lowers round-trip time, improves responsiveness, and creates a better experience across regions and devices.

Conclusion

Edge databases are becoming a defining part of modern application architecture because they solve one of the internet’s most persistent problems: distance. When users, devices, and services are spread across the globe, centralizing every database operation in one location creates unnecessary delay. By bringing data closer to where it is needed, edge databases unlock faster interactions, better resilience, and more scalable global experiences.

For teams building real-time products, connected systems, or international platforms, the case for edge databases is stronger than ever. They are not simply a performance upgrade; they are a practical response to how applications are used today. As businesses continue to demand low-latency data and seamless performance across borders, edge databases will remain at the center of that transformation.

Those who design carefully, choose the right consistency model, and deploy the edge where it adds measurable value will be best positioned to deliver the fast, dependable experiences modern users expect.

For a deeper technical overview of distributed systems and edge architecture concepts, see the Cloud Native Computing Foundation’s resources on distributed applications at https://www.cncf.io/ and Cloudflare’s edge computing insights at https://www.cloudflare.com/edge-computing/.