Introduction

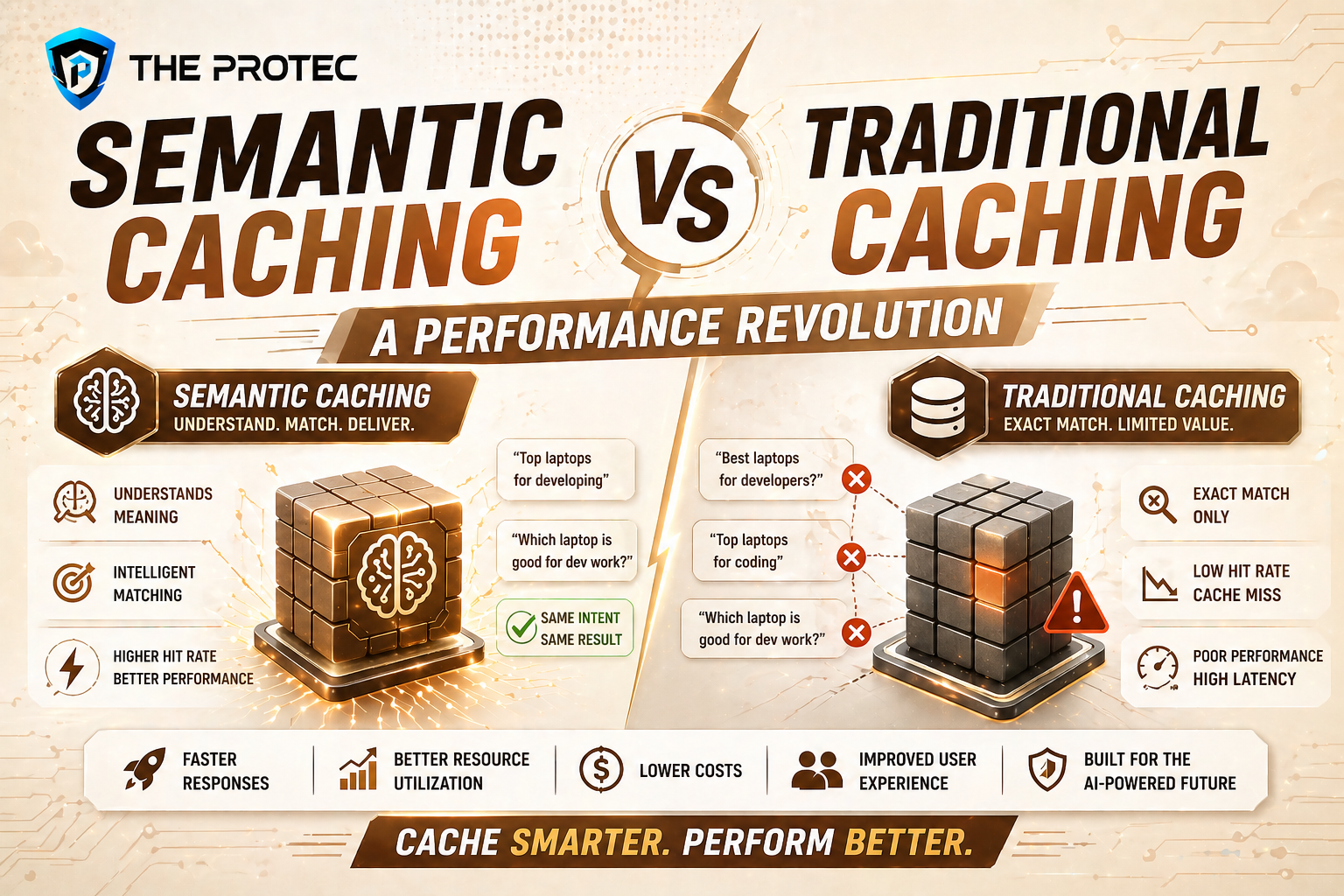

As artificial intelligence and large language models (LLMs) become foundational components of modern applications, the demand for faster, more efficient data retrieval mechanisms has surged. Caching, a strategy designed to improve data access speeds, is undergoing a transformation with the advent of semantic caching a technique that leverages context and meaning rather than simple key-value storage. This article dives deep into the differences between semantic caching and traditional caching, showcasing how semantic caching is redefining AI performance optimization and LLM caching through innovative benchmarks and implementation strategies.

Understanding Traditional Caching

Traditional caching primarily relies on storing explicit data that has been previously requested or computed, using direct key-value pairs. When a system queries data, it first checks the cache for an exact key match. If found, the cached result avoids expensive operations like database access or model inference, resulting in quicker response times.

Key Characteristics of Traditional Caching

- Exact Match Storage: Data is retrieved using exact input keys, limiting cache hits to identical queries only.

- Simple Lookup: The retrieval process is fast but depends entirely on previous exact queries.

- Cache Miss Penalty: Non-matching queries will always bypass the cache, leading to potentially higher latency.

- Limited Context Awareness: The cache stores raw data without semantic interpretation.

Limitations in AI and LLM Use Cases

While traditional caching works well for repeated identical queries, it falls short for dynamic, context-rich AI tasks where input can vary slightly but needs semantically similar responses. For example, slight rephrasing or related queries in LLM usage lead to cache misses despite underlying semantic overlap.

What Is Semantic Caching?

Semantic caching takes a fundamentally different approach by storing and retrieving data based on the meaning or context behind queries and results, rather than exact string matches. This technique taps into natural language understanding and embedding representations to compare query semantics to cached entries.

Core Concepts Behind Semantic Caching

- Embedding-Based Storage: Data and queries are transformed into vector embeddings using advanced language models, capturing semantic signatures.

- Approximate Matches: Instead of binary hit/miss results, the cache returns answers for semantically similar queries using nearest neighbor search.

- Context-Aware Results: The system understands relationships and variations within queries, enabling more flexible reuse.

- Dynamic Adaptation: Caches are updated and curated continually to maintain relevance to evolving query landscapes.

Why Semantic Caching Shines for AI and LLM Optimization

Since LLMs often output similar responses for related queries, semantic caching dramatically improves hit rates by recognizing contextual overlap rather than relying on strict equality. This optimization reduces redundant processing, lowers inference costs, and speeds up response times a game changer for AI-driven platforms.

Benchmarking Semantic Caching vs Traditional Caching

Recent studies and real-world implementations have begun quantifying the performance improvements delivered by semantic caching compared to traditional methods.

Cache Hit Rate Improvements

- Traditional Caching: Hit rates hover between 10-30% depending on query repeatability.

- Semantic Caching: Hit rates jump to 50-70% due to recognition of related queries and contextual similarities.

Latency Reduction

Systems equipped with semantic caching have demonstrated 30-60% reductions in average query latency over traditional caching. This is crucial for AI applications requiring near-real-time responses.

Resource Efficiency

By avoiding redundant LLM inference calls for semantically similar queries, semantic caching can reduce GPU and CPU usage by up to 40%, driving lower operational costs and environmental impact.

Case Study: Semantic Caching in Dialogue Systems

A leading AI-powered customer service platform integrated semantic caching to handle variant user inputs more effectively. The result was a 55% increase in cache hits and a 45% boost in throughput without any compromise in answer quality.

Implementation Strategies for Semantic Caching

Successfully implementing semantic caching requires sophisticated infrastructure and understanding. Here are key approaches:

1. Generating Semantic Embeddings

Embedding generation engines such as OpenAI’s embeddings API or open-source models like Sentence-BERT provide vector representations for inputs and outputs. The choice depends on latency requirements, accuracy, and cost.

2. Vector Indexing and Search

Unlike traditional caching, semantic caching uses approximate nearest neighbor (ANN) algorithms for fast retrieval of semantically close entries in the high-dimensional vector space. Popular tools include Pinecone, FAISS, and Annoy.

3. Cache Update and Eviction Policies

Because semantic similarity is continuous rather than discrete, designing effective cache eviction controls involves balancing freshness, diversity, and reuse potential. Hybrid heuristics and learned policies are increasingly used.

4. Handling Ambiguity and Relevance

Semantic caches must calibrate similarity thresholds carefully to avoid false hits that degrade output quality, especially for critical AI decisions.

Challenges and Considerations

- Computational Overhead: Embedding and ANN search introduce extra computation, though this is often offset by reduced expensive model queries.

- Complexity in Implementation: Semantic caching requires expertise in machine learning, vector search, and system design.

- Cache Consistency: Evolving language models and APIs may change embedding distributions; cache invalidation strategies must be robust.

- Data Privacy: Storing semantic embeddings might expose latent data patterns needing careful management under compliance regulations.

Future Trends in Semantic Caching

The growing scale and complexity of AI deployments are fueling rapid innovations in semantic caching, such as:

- Hybrid Caching Models: Combining traditional exact-match caches with semantic layers to optimize resource use.

- Adaptive Learning: Self-tuning caches that refine retrieval strategies based on runtime feedback.

- Cross-Modal Semantic Caches: Extending semantic caching to images, audio, and multimodal data for broader AI ecosystems.

FAQ

What types of AI applications benefit most from semantic caching?

Applications that handle natural language queries, recommendation engines, conversational AI, and any use case where inputs have variability but similar intent see the greatest performance improvements from semantic caching.

How does semantic caching improve Large Language Model caching?

Semantic caching enhances LLM caching by indexing query and response meaning rather than exact sequences, enabling reuse of computations for related prompts and dramatically improving cache hit rates and latency.

Is semantic caching compatible with cloud-native AI deployments?

Yes. Semantic caching frameworks can be integrated with cloud services. Managed vector databases and serverless embedding APIs facilitate scalable and cost-effective semantic caching architectures.

Conclusion

The transition from traditional caching to semantic caching marks a significant leap in AI performance optimization, particularly for LLM-driven systems. By understanding and implementing semantic caching strategies, developers and organizations can unlock faster response times, lower operational costs, and more intelligent reuse of computational resources. While challenges persist, ongoing advancements in vector search technology and AI embeddings promise a future where caching is no longer just about raw data storage but about meaningful data understanding ushering in a true performance revolution.

For further reading and advanced techniques on vector databases and caching strategies, consider exploring resources like Pinecone’s semantic caching guide.