Introduction

As artificial intelligence APIs become embedded in more applications, developers who want to control costs need to understand rate limits and token economics. Large language models (LLMs) offer remarkable capabilities, but token-based pricing combined with strict API rate limits can lead to unexpected expenses if not managed carefully.

This article examines current token pricing models, API rate limits, and optimization strategies, and highlights the hidden factors that affect the total cost of integrating AI services.

Understanding AI Token Pricing

AI token pricing is fundamentally linked to the concept of tokens, which are pieces of text the AI processes. Unlike traditional billing models that charge per API call or per hour, many AI providers now price based on the number of input and output tokens used. This model better aligns with the computational resources required but introduces complexity in cost estimation.

What is a Token?

A token is roughly equivalent to a word or subword unit, though the exact boundaries depend on the tokenizer used. For example, “Hello, world!” might be split into tokens such as “Hello”, “,”, “ world”, and “!”. Different languages and model tokenizers break down text differently, which affects token usage and consequently cost.
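To make the idea concrete, here is a minimal sketch of a rough token estimator. It is not a real tokenizer: the regex split and the figure of about four characters per token are only common rules of thumb for English text, and the function names are made up for this illustration. For billing-grade counts, use the tokenizer published by your provider.

```python
import re


def rough_token_split(text: str) -> list:
    """Split text into word and punctuation pieces (a crude stand-in for a tokenizer)."""
    return re.findall(r"\w+|[^\w\s]", text)


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Estimate a token count from character length (assumed heuristic, English only)."""
    if not text:
        return 0
    return max(1, round(len(text) / chars_per_token))


pieces = rough_token_split("Hello, world!")
print(pieces)  # ['Hello', ',', 'world', '!']
print(estimate_tokens("Hello, world!"))
```

Real tokenizers often attach the leading space to the following word and may split rare words into several pieces, so treat the numbers from this sketch as an order-of-magnitude guide only.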

Pricing Models Based on Tokens

Leading AI platforms typically quote prices per 1,000 (or per 1,000,000) tokens processed. The charge covers both prompt tokens (the input text to the model) and completion tokens (the AI’s generated output). For instance, a pricing tier might be $0.03 per 1,000 tokens for prompt inputs and $0.06 per 1,000 tokens for generated completions.

This split pricing reflects the higher compute cost of generating output compared with reading input, and it means developers must account for both sides of token consumption when forecasting budgets.
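As a worked example of split pricing, the following sketch computes the cost of one request using the illustrative rates mentioned above ($0.03 per 1,000 prompt tokens and $0.06 per 1,000 completion tokens). The function name and the token counts are assumptions chosen for the example; substitute your provider's actual rates.

```python
from decimal import Decimal

# Illustrative rates from the example tier above, not any provider's real prices.
PROMPT_PRICE_PER_1K = Decimal("0.03")
COMPLETION_PRICE_PER_1K = Decimal("0.06")


def request_cost(prompt_tokens: int, completion_tokens: int) -> Decimal:
    """Return the dollar cost of one request under split prompt/completion pricing."""
    prompt_cost = Decimal(prompt_tokens) / 1000 * PROMPT_PRICE_PER_1K
    completion_cost = Decimal(completion_tokens) / 1000 * COMPLETION_PRICE_PER_1K
    return prompt_cost + completion_cost


# 1,500 prompt tokens cost $0.045 and 500 completion tokens cost $0.030.
cost = request_cost(prompt_tokens=1500, completion_tokens=500)
print(f"${cost:.4f}")  # $0.0750
```

Note that the 500 completion tokens account for 40% of the bill even though they are only a quarter of the tokens, which is why output length deserves as much attention as prompt length.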

Token Pricing Variability by Model

Different LLMs carry different token prices. Advanced models that provide superior accuracy and context handling usually come at higher token rates. Smaller or optimized models may offer cheaper token pricing, with trade-offs in performance or capabilities.

This variability means developers need to strategically select models that strike the right balance between cost and functionality, particularly as usage scales.

API Rate Limits: More Than Just Throughput Control

API rate limits define how many requests (or tokens processed) can be made within a specified timeframe—per minute, hour, or day. These limits are designed to protect service stability and manage operational load, but they have implications far beyond throughput.

Types of Rate Limits

- Request-based Limits: How many API calls can be made per period.

- Token-based Limits: Total number of tokens processed per period.

- Concurrency Limits: How many simultaneous API requests are allowed.

Impact on Application Design and Costs

Rate limits force developers to design mechanisms such as request batching, queueing, or fallback logic. If applications exceed these rates, they may face throttling, causing delays or errors.
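One way to stay under such limits is to track usage on the client before sending a request. The sketch below is a minimal sliding-window limiter that enforces a request cap and a token cap together. The class name, the limits, and the 60-second window are assumptions for illustration; real providers define their own windows and may count tokens differently.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Client-side guard for a requests-per-window and a tokens-per-window cap."""

    def __init__(self, max_requests, max_tokens, window_seconds=60.0, clock=time.monotonic):
        self.max_requests = max_requests
        self.max_tokens = max_tokens
        self.window = window_seconds
        self.clock = clock            # injectable so the limiter can be tested
        self.events = deque()         # (timestamp, tokens) for each admitted request
        self.tokens_in_window = 0

    def _evict_expired(self, now):
        # Drop requests that have aged out of the window and release their tokens.
        while self.events and now - self.events[0][0] >= self.window:
            _, tokens = self.events.popleft()
            self.tokens_in_window -= tokens

    def try_acquire(self, tokens):
        """Return True and record the request if it fits under both caps."""
        now = self.clock()
        self._evict_expired(now)
        if len(self.events) + 1 > self.max_requests:
            return False
        if self.tokens_in_window + tokens > self.max_tokens:
            return False
        self.events.append((now, tokens))
        self.tokens_in_window += tokens
        return True


limiter = SlidingWindowLimiter(max_requests=60, max_tokens=90_000)
if limiter.try_acquire(tokens=1_200):
    print("send request")
else:
    print("queue or delay request")
```

A request that is refused here can be placed in a queue and retried once the window frees up, which is usually cheaper than sending it and handling the resulting throttling error.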

Moreover, inefficient use of tokens—such as overly verbose prompts or unnecessarily long completions—can quickly consume the token-based rate limit, leading developers to hit unexpected ceilings and potentially pay for overages or upgrade to costlier tiers.

The Hidden Costs Developers Often Overlook

While token pricing and rate limits are transparent on paper, the real-world cost implications often surprise developers due to:

1. Prompt Engineering Inefficiencies

Crafting prompts that extract desired responses without redundancy is a subtle art. Poorly optimized prompts can consume far more tokens than necessary, inflating costs.

2. Uncontrolled Token Generation

Allowing unrestricted token output can increase completion token usage sharply. For example, setting a high maximum tokens parameter or using verbose response formats can multiply costs, since every generated token is billed.

3. Scaling Without Monitoring

As an application grows its user base or feature set, token consumption often grows faster than anticipated, sometimes due to hidden API calls in background tasks or retries caused by hitting rate limits.

4. Overprovisioning API Plans

Without granular monitoring, developers may purchase higher API plans preemptively. This stockpiling of capacity without usage control can inflate expenses unnecessarily.

Strategies for Effective LLM Cost Control

To tackle the intertwined challenges of AI token pricing and API rate limits, developers can adopt several best practices:

1. Optimize Prompt Design

Concise and carefully structured prompts reduce token waste. Techniques include:

- Using placeholders or templates for variable inputs

- Removing redundant information

- Employing system instructions to limit verbosity

2. Limit Maximum Token Outputs

Explicitly configuring the max_tokens parameter prevents runaway generation. Setting realistic limits aligned with desired output length helps control completion token costs.
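A realistic limit can also be derived from a budget. The sketch below, which assumes the illustrative rates of $0.03 and $0.06 per 1,000 tokens and a hypothetical hard cap of 4,096 output tokens, computes the largest max_tokens value that keeps a single request within a given dollar amount.

```python
from decimal import Decimal


def max_tokens_for_budget(
    budget_usd: Decimal,
    prompt_tokens: int,
    prompt_price_per_1k: Decimal = Decimal("0.03"),      # illustrative rate
    completion_price_per_1k: Decimal = Decimal("0.06"),  # illustrative rate
    hard_cap: int = 4096,                                # hypothetical model limit
) -> int:
    """Return the largest completion length that keeps one request within budget."""
    prompt_cost = Decimal(prompt_tokens) / 1000 * prompt_price_per_1k
    remaining = budget_usd - prompt_cost
    if remaining <= 0:
        return 0  # the prompt alone already uses the whole budget
    affordable = int(remaining / completion_price_per_1k * 1000)  # truncates downward
    return min(affordable, hard_cap)


# A 1,000-token prompt costs $0.03, leaving $0.02 for output.
print(max_tokens_for_budget(Decimal("0.05"), prompt_tokens=1000))  # 333
```

The returned value is a ceiling, not a target: the model may stop earlier, in which case the request costs less than the budget.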

3. Implement Token Usage Monitoring and Alerts

Many AI platforms provide dashboards or API metrics endpoints. Use these to track daily token consumption and set alerts before reaching limits.
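Where a provider dashboard is not enough, usage can also be tracked in the application itself. The following is a minimal in-memory sketch: the class name, the daily limit, and the 80% alert threshold are assumptions, and a production system would persist the counts and send real notifications instead of returning a status string.

```python
from collections import defaultdict
from datetime import date


class TokenUsageTracker:
    """Accumulate token usage per day and flag when a threshold is crossed."""

    def __init__(self, daily_limit, alert_fraction=0.8):
        self.daily_limit = daily_limit
        self.alert_fraction = alert_fraction  # assumed alert threshold (80% of the limit)
        self.usage = defaultdict(int)         # day -> total tokens used that day

    def record(self, prompt_tokens, completion_tokens, day=None):
        """Add one request's tokens to the day's total and return the new status."""
        day = day or date.today()
        self.usage[day] += prompt_tokens + completion_tokens
        return self.status(day)

    def status(self, day):
        used = self.usage[day]
        if used >= self.daily_limit:
            return "over_limit"
        if used >= self.daily_limit * self.alert_fraction:
            return "alert"
        return "ok"


tracker = TokenUsageTracker(daily_limit=1_000_000)
print(tracker.record(prompt_tokens=1_500, completion_tokens=500))  # ok
```

The token counts passed to record would normally come from the usage figures that the API returns with each response.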

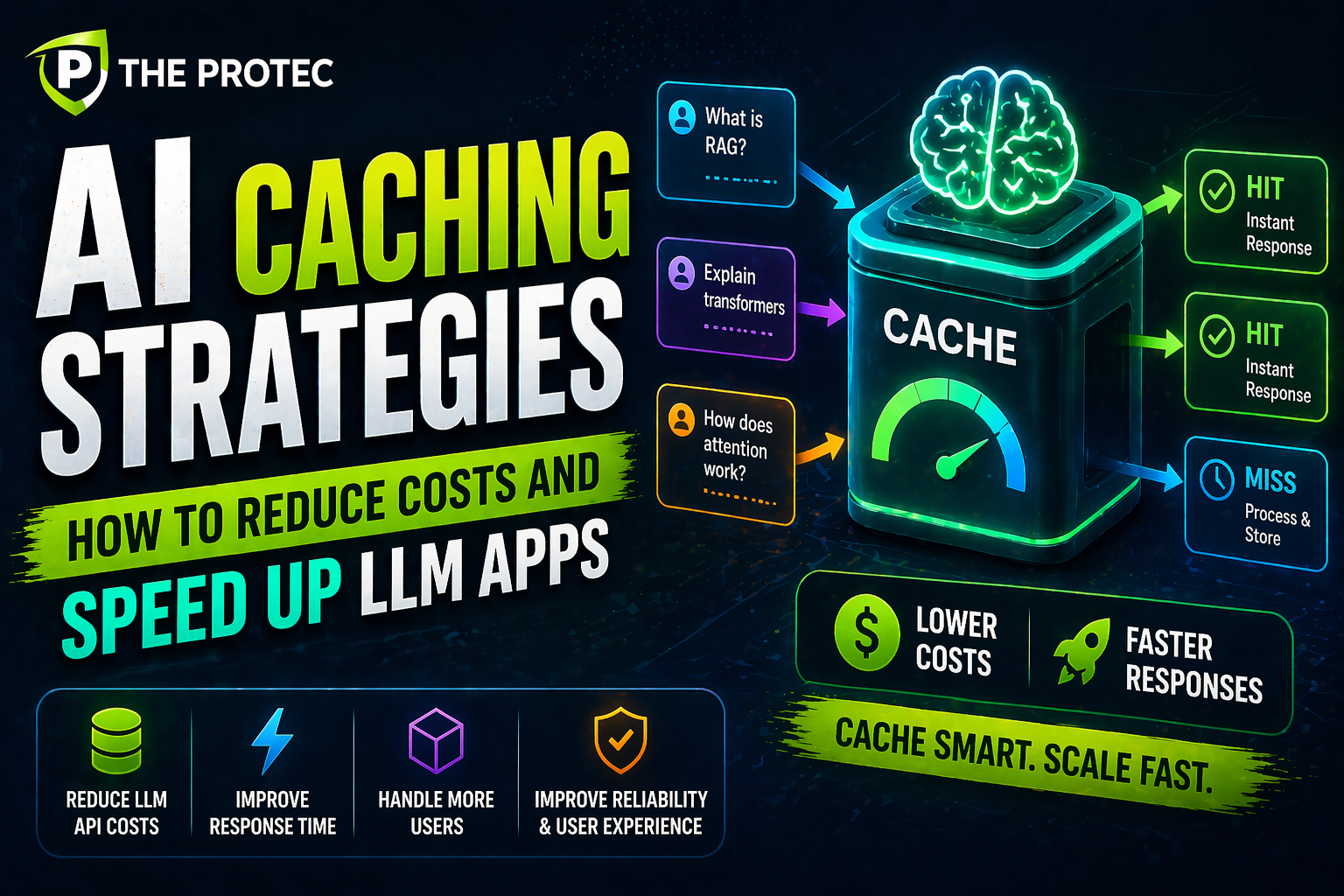

4. Use Caching and Reuse Strategies

Frequently requested outputs or similar queries should leverage caching to avoid repeated API calls, saving tokens and reducing rate limit strain.
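A simple form of this is an exact-match cache keyed on the model and a normalized prompt. In the sketch below, the class name and the fetch callable are hypothetical stand-ins for whatever client function actually calls the API, and the normalization (collapsing whitespace and lowercasing) is an assumption that is only safe when case does not change the meaning of the prompt.

```python
import hashlib


class ResponseCache:
    """Exact-match cache for model responses, keyed by model and normalized prompt."""

    def __init__(self, fetch):
        self.fetch = fetch  # hypothetical callable: fetch(model, prompt) -> str
        self.store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model, prompt):
        # Collapse whitespace and lowercase so trivially different prompts share a key.
        normalized = " ".join(prompt.split()).lower()
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get(self, model, prompt):
        key = self._key(model, prompt)
        if key in self.store:
            self.hits += 1
            return self.store[key]
        self.misses += 1
        result = self.fetch(model, prompt)  # only cache misses reach the API
        self.store[key] = result
        return result


def fake_fetch(model, prompt):
    """Stand-in for a real API call, used only for this demonstration."""
    return f"[{model}] answer to: {prompt}"


cache = ResponseCache(fake_fetch)
cache.get("example-model", "What is a token?")
cache.get("example-model", "what is a   token?")
print(cache.hits, cache.misses)  # 1 1
```

In practice a cache like this also needs an expiry policy, since answers that depend on changing data go stale.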

5. Batch API Requests

Whenever possible, combine multiple queries into a single API call. Shared instructions are then sent once rather than once per query, which reduces both the request count and the number of repeated prompt tokens.
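One way to batch with a text-only interface is to number the items in a single prompt and split the numbered reply afterwards. The sketch below assumes the model follows the requested "number, period, answer" format; both helper names are made up for this example, and real replies need more defensive parsing.

```python
def build_batched_prompt(instruction, items):
    """Combine several inputs under one shared instruction, numbered from 1."""
    lines = [instruction, ""]
    lines += [f"{i}. {item}" for i, item in enumerate(items, start=1)]
    lines += ["", "Answer each item on its own line, prefixed with its number."]
    return "\n".join(lines)


def split_batched_reply(reply, expected):
    """Parse lines such as '2. negative' into a list; missing answers become None."""
    answers = {}
    for line in reply.splitlines():
        number, sep, text = line.strip().partition(". ")
        if sep and number.isdigit():
            answers[int(number)] = text.strip()
    return [answers.get(i) for i in range(1, expected + 1)]


prompt = build_batched_prompt(
    "Classify the sentiment of each review as positive or negative.",
    ["Great product", "Stopped working after a day"],
)
print(prompt)
print(split_batched_reply("1. positive\n2. negative", expected=2))
# ['positive', 'negative']
```

Batching trades fewer requests for a larger single response, so the combined output must still fit within the configured maximum token limit.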

6. Choose Appropriate Models

Match model capabilities with task requirements. Consider using smaller models for less critical queries while reserving expensive, powerful models for high-value interactions.
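This matching can be expressed as a small routing rule. In the sketch below, the model names, prices, and difficulty scale are entirely hypothetical; the point is only the pattern of picking the cheapest model that is rated as capable enough for the task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    price_per_1k_output: float  # hypothetical price in dollars
    max_difficulty: int         # hypothetical 1-3 rating of what the model can handle


# Made-up catalog for illustration; replace with your provider's models and prices.
MODELS = [
    ModelOption("small-model", 0.002, 1),
    ModelOption("medium-model", 0.01, 2),
    ModelOption("large-model", 0.06, 3),
]


def choose_model(difficulty, models=MODELS):
    """Return the cheapest model whose rating covers the task difficulty."""
    capable = [m for m in models if m.max_difficulty >= difficulty]
    if not capable:
        raise ValueError(f"no model can handle difficulty {difficulty}")
    return min(capable, key=lambda m: m.price_per_1k_output)


print(choose_model(1).name)  # small-model
print(choose_model(3).name)  # large-model
```

How a task gets its difficulty rating is the hard part and is left out here; simple options include a per-feature setting or escalating to a larger model only when the smaller one fails a validation check.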

Latest Trends in Token Pricing and Rate Limit Management

Recent developments emphasize enhanced flexibility and developer control:

- Fine-Grained Rate Limits: Some providers now offer customizable rate limits based on usage patterns or user tiers, helping balance performance and cost.

- Dynamic Token Pricing: Tiered or volume discounts encourage large-scale adoption while maintaining profitability for providers.

- AI Usage Analytics Platforms: Emerging third-party tools integrate with AI APIs to provide advanced token usage insights, anomaly detection, and cost forecasting.

FAQ

Q1: How can developers estimate AI costs before deployment?

Most AI providers offer token calculators or pricing simulators. Combining these with realistic usage projections, including prompt complexity and expected output length, provides a good cost estimate. Additionally, trial usage in a staging environment with monitoring helps refine estimates.
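A back-of-the-envelope projection can be scripted in a few lines. The sketch below uses the illustrative rates of $0.03 and $0.06 per 1,000 tokens; the traffic figures, the 30-day month, and the retry overhead factor are assumptions to be replaced with measurements from your own staging environment.

```python
def monthly_cost_estimate(
    requests_per_day,
    avg_prompt_tokens,
    avg_completion_tokens,
    prompt_price_per_1k=0.03,      # illustrative rate
    completion_price_per_1k=0.06,  # illustrative rate
    retry_overhead=0.0,            # assumed fraction of extra billed traffic from retries
    days=30,
):
    """Project a monthly bill in dollars from average per-request token counts."""
    per_request = (
        avg_prompt_tokens / 1000 * prompt_price_per_1k
        + avg_completion_tokens / 1000 * completion_price_per_1k
    )
    return requests_per_day * days * per_request * (1 + retry_overhead)


# 10,000 requests per day at 500 prompt and 200 completion tokens each.
estimate = monthly_cost_estimate(10_000, 500, 200)
print(f"${estimate:,.2f}")  # $8,100.00
```

Running the same function with pessimistic inputs (longer outputs, more retries) gives a range rather than a single number, which is usually more useful for budgeting.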

Q2: What happens if my application exceeds API rate limits?

Exceeding rate limits typically results in throttled requests—receiving 429 HTTP status codes—which can degrade user experience. Some providers may charge overage fees or temporarily block API access. Implementing retries with exponential backoff or queueing helps mitigate this.
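The retry approach can be sketched as follows. RateLimitError is a hypothetical exception standing in for whatever your client library raises on an HTTP 429 response, and the delay values are assumptions; the random "full jitter" spreads retries out so that many clients do not retry at the same moment.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error representing an HTTP 429 response from the API."""


def call_with_backoff(send, max_attempts=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call send(); on RateLimitError wait with exponential backoff and retry."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts, surface the error to the caller
            # The upper bound doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            upper = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, upper))  # full jitter: random wait below the bound


# Demonstration with a fake request that is throttled twice before succeeding.
outcomes = iter([RateLimitError(), RateLimitError(), "ok"])


def fake_send():
    result = next(outcomes)
    if isinstance(result, Exception):
        raise result
    return result


print(call_with_backoff(fake_send, sleep=lambda seconds: None))  # ok
```

If the provider returns a Retry-After header, honoring that value is generally preferable to a computed delay.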

Q3: Are there alternatives to token-based pricing?

Some AI providers still offer flat-rate or subscription-based pricing, but these models are less common for LLMs. Token-based pricing remains dominant as it scales with actual usage, offering fairer billing aligned with resource consumption.

Conclusion

AI token pricing and API rate limits form the backbone of LLM cost structures, yet their impact often goes underappreciated during development. Understanding the nuances of token economics and implementing proactive cost control strategies empower developers to build scalable, efficient AI applications without unwelcome financial surprises.

As AI continues evolving, staying informed about emerging pricing models and tools will be key to unlocking AI’s full potential while maintaining sustainable operating budgets.

For a detailed understanding of tokenization, see the OpenAI API documentation.