Introduction: The Rise of Intelligent Smartphones

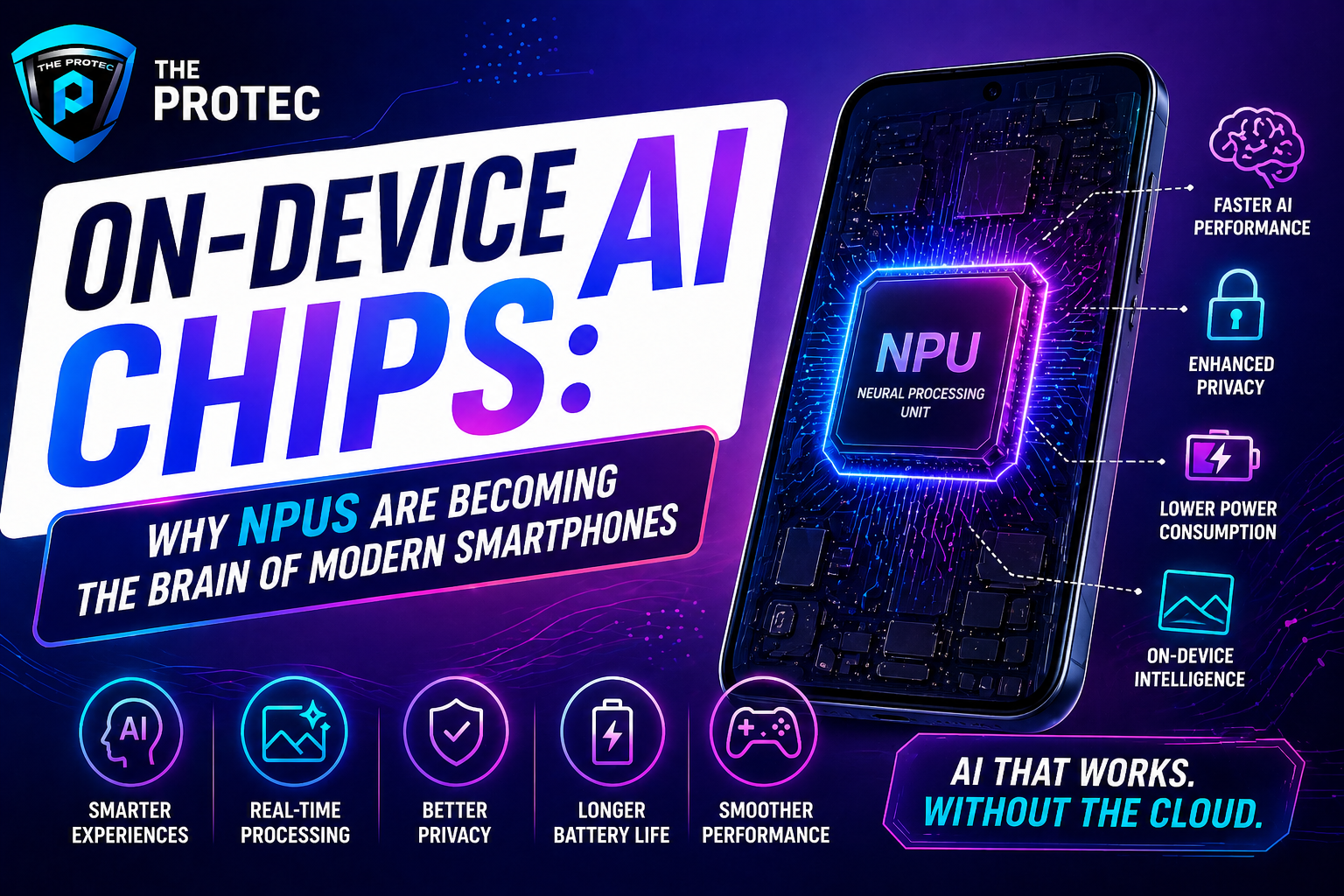

Modern smartphones are no longer mere communication tools; they are powerful computing devices with advanced AI capabilities. At the heart of this evolution are on-device AI chips, especially Neural Processing Units (NPUs), which are changing how mobile devices handle intelligent tasks. Many AI functions have traditionally relied on cloud computing, but recent advances in mobile AI processors mean smartphones can now process complex AI workloads locally and in real time.

This shift towards on-device AI represents a profound change, offering faster performance, improved privacy, and reduced dependency on network connectivity. In this article, we explore how NPUs are replacing cloud AI, what makes on-device AI chips essential for next-generation smartphones, and how these mobile AI processors are shaping the future of mobile technology.

What Are NPUs and On-Device AI Chips?

NPUs, or Neural Processing Units, are specialized processors designed specifically to accelerate AI and machine learning workloads. Unlike CPUs and GPUs, which handle a broad range of tasks, NPUs are built to execute neural network operations efficiently and with lower power consumption.

On-device AI chips encompass NPUs and other dedicated AI hardware integrated directly within smartphones or edge devices. These chips enable the phone to execute computationally intensive AI tasks locally rather than relying on cloud servers.

Examples of on-device AI chips include Apple’s Neural Engine, Qualcomm’s Hexagon NPU, and the Tensor Processing Unit (TPU) built into the Google Tensor chips that power Pixel phones. These processors handle AI applications ranging from voice assistants and image recognition to augmented reality and health monitoring.

How NPUs Are Replacing Cloud AI in Smartphones

For many years, AI-powered smartphone features depended mainly on cloud computing. When a user spoke to a voice assistant or snapped a photo, the data was often sent to remote servers for processing. Although effective, this setup introduced latency, privacy concerns, and reliance on consistent internet connectivity.

1. Reducing Latency and Boosting Responsiveness

On-device NPUs reduce the time required for AI operations by removing the network round trip. Since data no longer needs to traverse the internet, tasks such as real-time speech recognition, image enhancement, and contextual predictions complete on the device itself, providing near-immediate feedback to users.
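The latency argument can be illustrated with simple arithmetic. The sketch below is a toy model, not a benchmark: the function names and every millisecond figure are hypothetical values chosen only to show how network time can dominate a cloud request even when the server runs the model faster than the phone.

```python
def cloud_latency_ms(uplink_ms, server_infer_ms, downlink_ms):
    """Total time for a cloud request: upload the input, run the model
    remotely, then download the result."""
    return uplink_ms + server_infer_ms + downlink_ms


def on_device_latency_ms(local_infer_ms):
    """Total time for a local request: only the on-device inference."""
    return local_infer_ms


# Hypothetical figures for illustration only, not measurements.
cloud = cloud_latency_ms(uplink_ms=40, server_infer_ms=10, downlink_ms=40)
local = on_device_latency_ms(local_infer_ms=25)

print(f"cloud: {cloud} ms, on-device: {local} ms")  # cloud: 90 ms, on-device: 25 ms
```

In this made-up scenario the server is faster at inference (10 ms versus 25 ms), yet the on-device path still finishes first because it skips both network legs.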

2. Enhancing User Privacy and Security

Processing AI data locally means personal information doesn’t have to leave the device, which reduces exposure to data breaches and unauthorized third-party access. In an age of increasing privacy regulation and user awareness, on-device AI chips foster trust by keeping sensitive data on the phone.

3. Lowering Dependence on Network Connectivity

Traditional cloud AI solutions falter in low-bandwidth or offline conditions. NPUs empower smartphones to maintain AI functionality even without internet access, which is crucial for users in remote locations or areas with unstable networks.

4. Power Efficiency and Optimization

NPUs are designed for energy efficiency and often consume significantly less power than CPUs or GPUs running the same AI workload. That efficiency lets phones offer AI-driven features without a heavy cost in battery life.

Real-World Applications of NPUs in Smartphones

The integration of NPUs within smartphones has enabled a variety of innovative features that users increasingly expect:

- Advanced Photography: On-device AI chips power real-time scene recognition, noise reduction, and computational photography techniques, producing sharper, high-quality images under diverse conditions.

- Enhanced Voice Assistants: AI processors allow voice recognition engines to respond faster, understand natural language better, and function offline, ensuring more reliable user interactions.

- Augmented Reality (AR): NPUs accelerate the scene understanding behind AR, such as environment mapping, while the GPU handles rendering, facilitating immersive gaming and utility applications such as navigation or interior design.

- Security Features: AI chips support biometric authentication like facial recognition and fingerprint scanning with improved accuracy and speed.

- Health Monitoring: On-device AI helps analyze sensor data for fitness tracking, heart rate variability, and sleep pattern recognition without sending personal health data to the cloud.

Advancements Driving NPU Innovation

The evolving landscape of NPUs and mobile AI processors is marked by several key technological improvements:

1. Increased Processing Power and Parallelism

Modern NPUs use architectures optimized for parallel processing of matrix computations, the core operation of neural networks. This has significantly boosted their capacity to handle complex models directly on the device.
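To see why matrix computations parallelize so well, consider a single fully connected layer written out in plain Python. This is a minimal sketch for illustration, not how any particular NPU is programmed; `dense_layer` and `mac_count` are hypothetical helper names.

```python
def dense_layer(weights, inputs):
    """Compute outputs[i] = sum over j of weights[i][j] * inputs[j].

    Each output row depends only on its own weights and the shared
    inputs, so all rows could be computed at the same time.
    """
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def mac_count(rows, cols):
    """Number of multiply-accumulate operations in a rows x cols layer."""
    return rows * cols


weights = [[1, 2, 3],
           [4, 5, 6]]
inputs = [1, 0, -1]

print(dense_layer(weights, inputs))  # [-2, -2]
print(mac_count(2, 3))               # 6
```

The loop runs the multiply-accumulate steps one after another. Hardware built for this pattern can execute many of them in the same cycle, because no output row waits on another.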

2. Support for Diverse AI Models

Newer NPUs are not only optimized for traditional convolutional neural networks but also accommodate emerging AI models such as transformers and graph neural networks, broadening the range of AI applications in smartphones.

3. Integration with System-on-Chip (SoC) Designs

Manufacturers increasingly embed NPUs tightly within SoCs alongside CPUs, GPUs, and memory controllers. This integrated approach reduces latency and improves data throughput between components.

4. Enhanced Software Ecosystems

Software development kits (SDKs) and frameworks provided by chipset makers now facilitate easier deployment and optimization of AI models on NPUs, enabling developers to harness their full potential.

The Future Outlook of Mobile AI Processors

Looking ahead, on-device AI chips will continue to evolve as the cornerstone of smartphone intelligence, offering more seamless and personalized experiences. Key trends shaping this future include:

- AI Model Compression and Efficiency: Techniques like quantization and pruning will allow larger AI models to run efficiently on constrained mobile hardware.

- Multi-Modal AI Processing: NPUs will increasingly handle combined inputs such as images, text, and audio simultaneously, enabling richer context understanding.

- Adaptive AI Workloads: Smartphones will dynamically allocate AI tasks between NPUs, CPUs, and GPUs to optimize performance and battery life based on use case.

- Edge AI Collaboration: While NPUs reduce cloud reliance, hybrid approaches will emerge where selective cloud resources augment on-device capabilities for specialized AI tasks.
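The quantization and pruning mentioned in the list above can be sketched in a few lines. This is a simplified illustration, assuming symmetric per-tensor int8 quantization and magnitude-based pruning; production toolchains use more elaborate schemes, and the function names here are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


def prune_by_magnitude(values, threshold):
    """Zero out weights whose absolute value is below the threshold."""
    return [v if abs(v) >= threshold else 0.0 for v in values]


weights = [0.5, -1.27, 0.0, 1.27]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(q)  # [50, -127, 0, 127]
print(prune_by_magnitude([0.5, -0.02, 0.0, 1.27], threshold=0.1))
# [0.5, 0.0, 0.0, 1.27]
```

Each weight is stored in one byte instead of four, at the cost of a rounding error of at most half the scale per value. Pruning adds zeros that a runtime may be able to skip or compress.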

These advances will make mobile AI processors indispensable for delivering smarter, more intuitive, and secure user experiences.
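The adaptive allocation and hybrid cloud ideas described above could look something like the routing sketch below. The policy, the `Task` fields, and the backend names are all assumptions made for illustration; real schedulers weigh many more signals, such as thermals and current load.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    fits_on_device: bool     # hypothetical flag: model fits in device memory
    npu_supported: bool      # hypothetical flag: all operators run on the NPU
    latency_sensitive: bool  # hypothetical flag: user is waiting on the result


def choose_backend(task, online):
    """Pick where to run a task under a simple illustrative policy."""
    if not task.fits_on_device:
        # Hybrid case: only oversized models go to the cloud.
        return "cloud" if online else "unavailable"
    if task.npu_supported:
        return "npu"
    # Fall back to general-purpose processors for unsupported models.
    return "gpu" if task.latency_sensitive else "cpu"


wake_word = Task("wake word", fits_on_device=True, npu_supported=True,
                 latency_sensitive=True)
large_model = Task("large model", fits_on_device=False, npu_supported=False,
                   latency_sensitive=False)

print(choose_backend(wake_word, online=False))   # npu
print(choose_backend(large_model, online=True))  # cloud
```

Here the NPU is the default, and the cloud is used only when a model cannot run locally, which mirrors the selective augmentation described in the list.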

FAQ

Q1: How do NPUs differ from traditional smartphone processors like CPUs and GPUs?

NPUs are specialized hardware designed specifically to accelerate neural network computations, which are core to AI algorithms. Unlike CPUs that handle general-purpose processing and GPUs optimized for parallel graphics tasks, NPUs operate efficiently at lower power for matrix multiplications and AI inference, offering better speed and energy efficiency for AI workloads.

Q2: Why is on-device AI processing better than cloud-based AI for smartphones?

On-device AI processing offers four main advantages. It reduces latency because data does not make a network round trip. It avoids the privacy risks of transferring user data over networks. It works offline, without constant internet access. And it can save the energy a phone would otherwise spend on radio transmission. Together, these benefits result in faster, safer, and more reliable AI-powered features.

Q3: Can older smartphones without dedicated NPUs still use AI features effectively?

While older smartphones without NPUs can support AI features, they often rely on less efficient CPU or GPU processing, leading to slower performance and higher battery consumption. The emergence of NPUs enables newer smartphones to offer more advanced, responsive, and power-efficient AI capabilities not feasible on older hardware.

Conclusion

Neural Processing Units and on-device AI chips represent a paradigm shift in smartphone technology. By moving AI workloads from the cloud directly into our hands, these mobile AI processors empower smarter, faster, and more private mobile experiences. As NPUs become increasingly sophisticated, their role as the brain of modern smartphones will only deepen, driving innovation that transcends traditional boundaries and unlocking a future where AI truly enhances every facet of mobile interaction.

For more detailed insights on AI hardware and mobile technology, visit ARM’s AI solutions.