Introduction

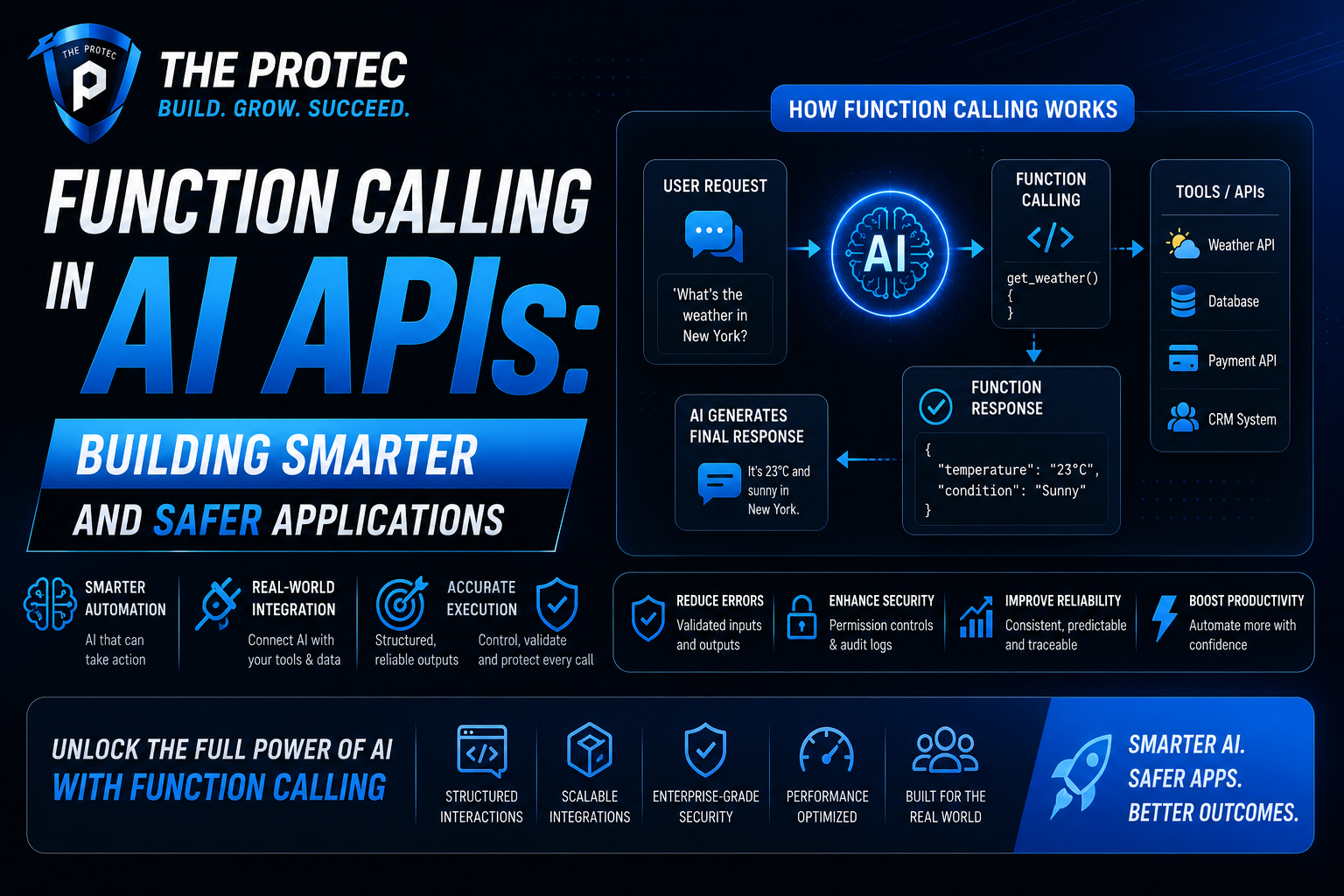

As artificial intelligence technologies rapidly evolve, the sophistication and safety of AI-driven applications depend heavily on how developers interact with AI models. One of the most useful developments in this area is function calling in AI APIs. It gives developers more control over, and more reliable output from, AI systems, especially within large language model (LLM) APIs and AI automation APIs. In this article, we explore how function calling changes AI integration, enabling smarter, more precise, and safer applications.

Understanding Function Calling in AI APIs

Traditionally, AI APIs, particularly those based on large language models, received free-form text prompts and returned text outputs. While remarkably flexible, this method posed challenges regarding the precision and structure of responses. Function calling addresses this by letting the AI explicitly invoke predefined functions during its response generation process.

In this paradigm, developers define specific functions or actions that the AI can call with well-defined parameters. When the AI answers a prompt, it can decide to “call” one of these functions by emitting a structured request that names the function and supplies its arguments; the application, not the model, then runs the function. This mechanism shifts the AI’s role from merely generating text to driving actionable tasks within an application context.
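
To make "well-defined parameters" concrete, here is a minimal sketch of how a function might be described to a model, using the getOrderStatus function from the chatbot example in this article. The field names ("name", "description", "parameters") and the shape of the emitted call follow a common JSON Schema-style convention; they are assumptions for illustration, not any specific vendor's format.

```python
import json

# Hypothetical function definition. The layout is an assumed, JSON Schema-style
# convention; check your provider's documentation for the exact format.
GET_ORDER_STATUS = {
    "name": "getOrderStatus",
    "description": "Look up the current status of a customer order.",
    "parameters": {
        "type": "object",
        "properties": {
            "orderID": {"type": "string", "description": "Order identifier."},
        },
        "required": ["orderID"],
    },
}

# What a model-emitted call might look like: a function name plus arguments
# encoded as a JSON string (assumed shape).
example_call = {"name": "getOrderStatus", "arguments": '{"orderID": "A-1001"}'}

# The application decodes the arguments before doing anything with them.
arguments = json.loads(example_call["arguments"])
print(arguments["orderID"])
```

The definition is plain data, so it can be versioned, reviewed, and tested like any other interface contract.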

Why Function Calling Matters in LLM and AI Automation APIs

With the rise of powerful LLMs and automation platforms, function calling delivers key benefits:

- Increased Control: Applications regain control over outputs by limiting AI responses to specific, predefined functions. This restricts uncontrolled or unexpected text, reducing ambiguity.

- Improved Reliability: A function call arrives as a standardized data structure instead of free-form text, making it easier to parse, validate, and process programmatically.

- Enhanced Safety: Restricting which functions an AI can call minimizes risks linked with hallucinations or unsafe outputs, supporting compliance with security protocols.

- Better Integration: Function calling bridges language understanding and backend logic cleanly, facilitating seamless integration with existing APIs and workflows.

- Faster Development: Developers can build robust AI interactions with far less prompt engineering and manual output parsing.

How Function Calling Works in Practice

Consider a customer support chatbot powered by an LLM API with function calling enabled. The developer defines functions like getOrderStatus(orderID) and updateShippingAddress(userID, address). Rather than the model generating vague or conversational text, when a user asks for order status, the AI identifies the action and triggers getOrderStatus with the correct parameters.

The application receives the function call request, executes the corresponding backend logic, and passes the result back to the model, which replies with accurate, up-to-date information, all while maintaining the natural conversational interface. This architecture reduces errors caused by misinterpretations or inconsistent AI-generated text.
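
The application side of this flow can be sketched as a small dispatcher. The function names getOrderStatus and updateShippingAddress come from the example above; the in-memory order data, the registry, and the shape of the incoming call (a name plus a JSON string of arguments) are assumptions made for illustration.

```python
import json
from typing import Any, Callable

# Stand-in backend data; a real system would query an order service.
_ORDERS = {"A-1001": "shipped"}


def get_order_status(orderID: str) -> dict[str, Any]:
    return {"orderID": orderID, "status": _ORDERS.get(orderID, "unknown")}


def update_shipping_address(userID: str, address: str) -> dict[str, Any]:
    return {"userID": userID, "address": address, "updated": True}


# Registry mapping the function names exposed to the model to Python callables.
FUNCTIONS: dict[str, Callable[..., dict[str, Any]]] = {
    "getOrderStatus": get_order_status,
    "updateShippingAddress": update_shipping_address,
}


def handle_function_call(call: dict[str, Any]) -> str:
    """Run a model-requested call and return the result as a JSON string."""
    func = FUNCTIONS[call["name"]]          # KeyError if the name is not registered
    kwargs = json.loads(call["arguments"])  # arguments assumed to be a JSON string
    return json.dumps(func(**kwargs))


call = {"name": "getOrderStatus", "arguments": '{"orderID": "A-1001"}'}
result = handle_function_call(call)
print(result)
```

In a full integration, the returned JSON string would be sent back to the model so it can phrase the final reply to the user.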

Architecture Patterns Leveraging Function Calling

Function calling enables new design patterns in AI application development:

- API Orchestration Layers: Middleware intercepts AI-generated function calls and routes them to relevant microservices or external APIs, building complex workflows.

- Safety and Compliance Gateways: Systems enforce policies by validating function calls before execution, ensuring AI actions remain within prescribed boundaries.

- Event-Driven Automation: AI triggers event-based functions that automatically respond to user requests or environmental changes, streamlining operations.
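
The first two patterns above can be combined in one component: a gateway that checks each call against a policy and only then routes it to a handler. The sketch below is one possible shape under stated assumptions; the role names, the POLICY table, and the Gateway class are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class FunctionCall:
    name: str
    arguments: dict[str, Any]


class PolicyViolation(Exception):
    """Raised when a call is not permitted for the current session."""


# Hypothetical policy: which functions each role may trigger.
POLICY: dict[str, set[str]] = {
    "customer": {"getOrderStatus"},
    "agent": {"getOrderStatus", "updateShippingAddress"},
}


class Gateway:
    def __init__(self, routes: dict[str, Callable[..., Any]]) -> None:
        self._routes = routes

    def execute(self, role: str, call: FunctionCall) -> Any:
        # Compliance check happens before any backend code runs.
        if call.name not in POLICY.get(role, set()):
            raise PolicyViolation(f"{role!r} may not call {call.name!r}")
        # Orchestration: route the approved call to its handler.
        return self._routes[call.name](**call.arguments)


# Lambdas stand in for calls to real microservices.
gateway = Gateway({
    "getOrderStatus": lambda orderID: {"orderID": orderID, "status": "shipped"},
    "updateShippingAddress": lambda userID, address: {"updated": True},
})

print(gateway.execute("customer", FunctionCall("getOrderStatus", {"orderID": "A-1001"})))
```

Keeping the policy check in one place means new functions cannot be reached by the model until someone explicitly grants access to them.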

Real-World Use Cases Enhancing Reliability and Control

1. Dynamic Document Processing

In document processing, AI models extract and structure information. Function calling allows direct invocation of extraction functions like extractInvoiceData(pdfFile), which outputs structured JSON instead of ambiguous text fields. This reliability accelerates data validation and integration into ERP systems.
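
As an illustration of why structured output speeds up validation, the sketch below parses a JSON payload, such as extractInvoiceData might return, into a typed record. The field names (invoice_number, total, currency) and the parse_invoice helper are hypothetical; the actual output shape would depend on how the function is defined.

```python
import json
from dataclasses import dataclass
from decimal import Decimal

REQUIRED_FIELDS = {"invoice_number", "total", "currency"}  # assumed fields


@dataclass
class Invoice:
    invoice_number: str
    total: Decimal
    currency: str


def parse_invoice(payload: str) -> Invoice:
    """Turn a JSON payload into a typed Invoice, rejecting incomplete data."""
    data = json.loads(payload)
    if not isinstance(data, dict):
        raise ValueError("payload must be a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return Invoice(
        invoice_number=str(data["invoice_number"]),
        total=Decimal(str(data["total"])),  # Decimal avoids float rounding on money
        currency=str(data["currency"]),
    )


invoice = parse_invoice(
    '{"invoice_number": "INV-7", "total": "120.50", "currency": "EUR"}'
)
print(invoice)
```

A record that fails these checks can be rejected before it reaches the ERP system, which is much harder to do reliably with free-form text.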

2. Intelligent Virtual Assistants

Virtual assistants employ AI function calling to perform validated user actions such as scheduling meetings or fetching data securely. Because every action goes through an authenticated backend function, the application keeps control and can block unauthorized or erroneous operations.

3. Automated DevOps Workflows

LLMs integrated with automation APIs can call functions to deploy code, restart servers, or monitor logs based on natural language commands. By relying on structured function calls, DevOps systems reduce the chance of dangerous commands and improve traceability.
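
One way to get both properties is to expose a small set of named operations instead of a raw shell, and to log every attempt. The following is a minimal sketch under that assumption; the operation names, service names, and the in-memory audit log are hypothetical placeholders.

```python
import json
import time
from typing import Any

# Hypothetical allowlist: operation names mapped to the services they may target.
ALLOWED_OPERATIONS: dict[str, set[str]] = {
    "restartService": {"web", "worker"},
    "tailLogs": {"web", "worker", "db"},
}

AUDIT_LOG: list[str] = []  # in-memory stand-in for an append-only audit store


def run_operation(name: str, service: str, requested_by: str) -> dict[str, Any]:
    allowed = service in ALLOWED_OPERATIONS.get(name, set())
    # Record every attempt, including rejected ones, for traceability.
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(),
        "operation": name,
        "service": service,
        "requested_by": requested_by,
        "allowed": allowed,
    }))
    if not allowed:
        return {"ok": False, "error": f"{name} on {service} is not permitted"}
    # A real implementation would call a deployment or orchestration API here.
    return {"ok": True, "operation": name, "service": service}


print(run_operation("restartService", "web", requested_by="alice"))
print(run_operation("restartService", "db", requested_by="alice"))
```

Because the model can only name an operation and a target, an instruction like "wipe the disk" has no function to map to and is rejected rather than executed.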

Challenges and Best Practices

While function calling unlocks tremendous benefits, it introduces design considerations:

- Function Definition Granularity: Too few functions limit flexibility; too many increase complexity. Balance is key.

- Parameter Validation: Model-generated arguments require strict validation to guard against injection attacks and malformed inputs.

- Error Handling: Systems must gracefully handle failed function calls or incorrect AI decisions.

- Security: Access control and auditing of functions invoked by AI are critical for safety.

Developers should carefully design function interfaces, maintain thorough documentation, and incorporate monitoring mechanisms to maximize reliability and control.
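
The validation and error-handling points can be sketched together in a wrapper that never lets a bad call crash the application. It uses a hand-rolled type check as a stand-in for a real schema validator; the REGISTRY layout, the safe_call helper, and the error message format are assumptions for illustration.

```python
import json
from typing import Any, Callable


def get_order_status(orderID: str) -> dict[str, Any]:
    if not orderID.startswith("A-"):
        raise LookupError("order not found")
    return {"orderID": orderID, "status": "shipped"}


# name -> (handler, expected argument names and types)
REGISTRY: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {
    "getOrderStatus": (get_order_status, {"orderID": str}),
}


def safe_call(name: str, raw_arguments: str) -> dict[str, Any]:
    """Validate and run a model-requested call, returning a structured result."""
    if name not in REGISTRY:
        return {"ok": False, "error": f"unknown function: {name}"}
    handler, spec = REGISTRY[name]
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError:
        return {"ok": False, "error": "arguments are not valid JSON"}
    # Reject missing or unexpected argument names.
    if not isinstance(args, dict) or set(args) != set(spec):
        return {"ok": False, "error": f"expected exactly: {sorted(spec)}"}
    for key, expected in spec.items():
        if not isinstance(args[key], expected):
            return {"ok": False, "error": f"{key} must be {expected.__name__}"}
    try:
        return {"ok": True, "result": handler(**args)}
    except Exception as exc:  # report backend failures instead of crashing
        return {"ok": False, "error": f"{name} failed: {exc}"}


print(safe_call("getOrderStatus", '{"orderID": "A-1001"}'))
print(safe_call("getOrderStatus", '{"orderID": 42}'))
```

Returning the error as data, rather than raising, lets the application hand it back to the model so it can correct the call or explain the problem to the user.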

Future Trends in AI Function Calling

The momentum behind AI function calling continues to grow, with evolving trends including:

- Standardized Function Schemas: Open standards for defining callable functions across AI APIs will improve portability and interoperability.

- Context-Aware Invocation: Enhanced models will assess broader context to dynamically select or compose function calls for complex tasks.

- Secure Enclaves for Execution: Running AI-triggered functions in secure environments will become the norm for sensitive applications.

- End-to-End Automation: AI-driven pipelines may fully orchestrate multi-step workflows by chaining numerous function calls.

FAQ: Function Calling in AI APIs

What is the key benefit of using function calling with LLM APIs?

Function calling transforms AI responses from freeform text into actionable function invocations with structured outputs. This enhances control, reduces ambiguity, and enables smoother integration with backend systems, ultimately improving reliability.

How does function calling improve safety in AI applications?

By restricting AI outputs to predefined function calls with validated parameters, developers limit the risk of unpredictable or dangerous outputs, making it easier to enforce security policies and monitor AI behavior.

Can function calling be used with automation APIs beyond chatbots?

Absolutely. Function calling is well-suited for a wide range of AI automation APIs, including intelligent workflows, document processing, DevOps automation, and any other setting where reliable, precise function invocation is critical.

Conclusion

Function calling in AI APIs marks a pivotal step toward building smarter, safer, and more reliable AI applications. By enabling large language models and automation APIs to invoke structured functions directly, developers regain control over AI behavior and output, reducing ambiguity and elevating trustworthiness. As AI continues to permeate diverse industries, embracing function calling will be essential for unlocking the full potential of intelligent automation while mitigating risks. For cutting-edge AI integrations, understanding and applying function calling principles offers a clear path to creating better, more controllable AI-powered solutions.

To explore more on advancing AI-driven applications with function calling, visit the OpenAI Function Calling overview.