Introduction

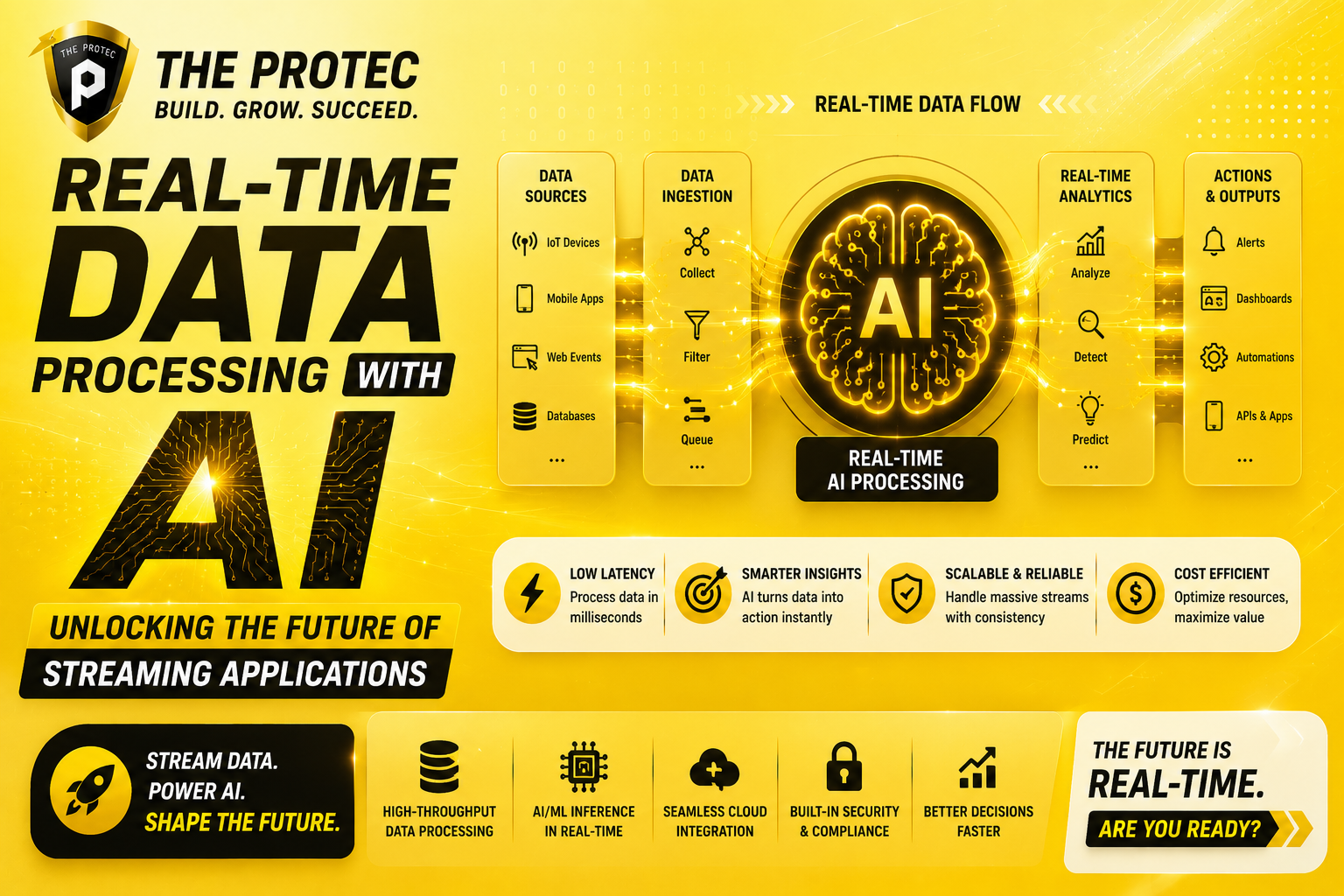

Data now arrives continuously in most digital systems, and many applications need to respond to it as it arrives. Streaming applications that run AI models on live data are changing how businesses work with that data, enabling immediate insights and adaptive decision-making at large scale. This depends on architectures that combine stream ingestion, stream processing, and model inference so that information can be processed, analyzed, and acted on shortly after it is generated.

This article describes the architecture behind these AI-driven real-time systems and how current technologies fit together to move and process data. It covers the core components, the main challenges, and the trends shaping real-time AI-powered streaming applications.

Understanding Real-Time AI Processing

Real-time AI processing refers to the immediate analysis and interpretation of streaming data using artificial intelligence algorithms. Unlike batch processing, which handles data in scheduled, bounded chunks, real-time AI ingests and processes data continuously and delivers results without noticeable delay. This capability is vital for use cases that need immediate feedback, such as fraud detection, recommendation engines, autonomous vehicles, or live content moderation.
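To make the contrast with batch processing concrete, the following is a minimal sketch in plain Python (no streaming framework is assumed). It keeps a running mean and variance that are updated one event at a time, so a result is available after every event instead of after a scheduled job. `RunningStats` and the sample values are illustrative names, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Welford-style running mean and variance, updated per event."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # sum of squared deviations from the current mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)

    @property
    def variance(self) -> float:
        # Population variance of everything seen so far.
        return self.m2 / self.count if self.count else 0.0


def batch_mean(values):
    """Batch equivalent: needs the full dataset before it can answer."""
    return sum(values) / len(values)


events = [12.0, 15.0, 11.0, 30.0, 14.0]  # made-up sample values
stats = RunningStats()
for value in events:
    stats.update(value)  # an up-to-date mean exists after every event
```

After the last event the running mean matches the batch mean, but the streaming version never had to hold the whole dataset in memory.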

In this paradigm, information flows steadily from disparate sources (e.g., sensors, social media feeds, financial transactions), and machine learning models, natural language processing, or computer vision are applied to it in real time. This continuous analytics framework lets systems adapt quickly to changing circumstances with little or no human intervention.

Core Architectural Components of Real-Time AI-Powered Streaming Systems

Building an effective real-time data processing system empowered by AI requires a robust, scalable architecture. The key components include:

1. Data Ingestion Layer

The initial stage captures streaming data from diverse sources. Technologies such as Apache Kafka, AWS Kinesis, or Google Cloud Pub/Sub provide scalable, resilient messaging, and large deployments can handle millions of events per second. This layer is responsible for low-latency, fault-tolerant collection of data, which downstream stages depend on to meet their own latency targets.
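The role of this layer can be illustrated with a small in-process stand-in. The sketch below uses Python's standard `queue.Queue` as a bounded buffer between a producer and a consumer. It is not the Kafka, Kinesis, or Pub/Sub API, and `run_ingestion` and the event shape are hypothetical. What it shows is the decoupling of producers from consumers and the backpressure a bounded buffer provides.

```python
import queue
import threading

SENTINEL = object()  # marks end of stream in this sketch only


def produce(buffer: queue.Queue, events) -> None:
    for event in events:
        buffer.put(event)  # blocks while the buffer is full (backpressure)
    buffer.put(SENTINEL)


def consume(buffer: queue.Queue) -> list:
    received = []
    while True:
        event = buffer.get()
        if event is SENTINEL:
            break
        received.append(event)
    return received


def run_ingestion(events, capacity: int = 4) -> list:
    """Move events from one producer thread to one consumer, in order."""
    buffer = queue.Queue(maxsize=capacity)
    producer = threading.Thread(target=produce, args=(buffer, events))
    producer.start()
    received = consume(buffer)
    producer.join()
    return received


sample_events = [{"id": i, "amount": 10 * i} for i in range(10)]
delivered = run_ingestion(sample_events)
```

A real broker adds what this sketch lacks: durability, partitioning across machines, and replay from a stored offset.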

2. Stream Processing Engine

Once ingested, data flows into a streaming engine that performs transformations, filtering, and aggregations on data in motion. Frameworks like Apache Flink and Apache Spark Structured Streaming execute stateful, distributed computations on streams, and Apache Beam offers a unified programming model that runs on engines such as these. Integrating AI at this stage means embedding machine learning models within the processing pipeline to score, classify, or predict outcomes on live data.
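As a rough illustration of an aggregation followed by a scoring step, the sketch below groups events into fixed (tumbling) windows and then flags keys with unusually many events per window. It is plain Python over a finite list, not Flink, Spark, or Beam code. The `(timestamp, key)` event shape and the threshold rule are assumptions, and the threshold stands in for a real model.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds: int):
    """Count events per key in fixed, non-overlapping time windows.

    `events` is an iterable of (timestamp_in_seconds, key) pairs.
    """
    windows = defaultdict(lambda: defaultdict(int))
    for timestamp, key in events:
        window_start = (timestamp // window_seconds) * window_seconds
        windows[window_start][key] += 1
    return {start: dict(counts) for start, counts in sorted(windows.items())}


def flag_bursts(window_counts, threshold: int):
    """Hypothetical scoring step: flag keys whose count exceeds the threshold."""
    return [
        (start, key)
        for start, counts in window_counts.items()
        for key, count in counts.items()
        if count > threshold
    ]


events = [(0, "a"), (3, "a"), (7, "a"), (9, "b"), (12, "a"), (15, "b")]
counts = tumbling_window_counts(events, window_seconds=10)
bursts = flag_bursts(counts, threshold=2)
```

A production engine would emit each window when it closes, typically driven by watermarks, and would handle late or out-of-order events, which this sketch ignores.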

3. Real-Time AI Inference Layer

A dedicated inference layer hosts optimized AI models capable of rapid evaluation, often using hardware accelerators such as GPUs or TPUs. Deploying models as microservices or behind serverless endpoints lets inference capacity scale elastically with data velocity. These models are typically trained offline, but they need regular retraining and redeployment to keep up with concept drift, which is another critical architectural consideration.
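One common way to use accelerators efficiently is to group incoming records into small batches before calling the model. The sketch below shows the idea under stated assumptions: `threshold_model` is a hypothetical stand-in for a real model, and `MicroBatcher` is an illustrative class, not an API of any serving framework.

```python
from typing import Callable, List, Sequence

Features = Sequence[float]


def threshold_model(batch: List[Features]) -> List[bool]:
    """Hypothetical model: flags rows whose feature sum exceeds 1.0."""
    return [sum(row) > 1.0 for row in batch]


class MicroBatcher:
    """Buffers records and calls the model once per full batch."""

    def __init__(self, predict_batch: Callable[[List[Features]], List[bool]],
                 max_batch_size: int):
        self.predict_batch = predict_batch
        self.max_batch_size = max_batch_size
        self._pending: List[Features] = []
        self.model_calls = 0

    def submit(self, features: Features) -> List[bool]:
        """Add one record; return predictions only when a batch fills up."""
        self._pending.append(features)
        if len(self._pending) >= self.max_batch_size:
            return self.flush()
        return []

    def flush(self) -> List[bool]:
        """Score whatever is buffered, even if the batch is not full."""
        if not self._pending:
            return []
        batch, self._pending = self._pending, []
        self.model_calls += 1
        return self.predict_batch(batch)


batcher = MicroBatcher(threshold_model, max_batch_size=3)
results: List[bool] = []
for row in [[0.2, 0.3], [0.9, 0.4], [1.5, 0.0], [0.1, 0.1]]:
    results.extend(batcher.submit(row))
results.extend(batcher.flush())  # drain the final partial batch
```

Larger batches raise throughput but make early records wait, so a real batcher would also flush on a timeout to bound latency.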

4. Data Storage and State Management

Stateful streaming operations and AI inference often depend on historical or contextual information. Technologies such as Kafka Streams state stores, RocksDB, or cloud-native databases hold transient or persistent state that supports complex event processing and improves the accuracy of AI decisions.
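A minimal sketch of what keyed state means in practice: the class below keeps a short history per key and uses it to enrich each new event with a baseline that a model could consume as a feature. It holds state in process memory only. `KeyedState` and the field names are hypothetical, and a real system would back this with a durable store.

```python
from collections import defaultdict, deque


class KeyedState:
    """Keeps the last `history_size` amounts per key (in memory only)."""

    def __init__(self, history_size: int = 3):
        self._history = defaultdict(lambda: deque(maxlen=history_size))

    def enrich(self, key: str, amount: float) -> dict:
        """Attach the key's recent average, then record the new amount."""
        history = self._history[key]
        baseline = sum(history) / len(history) if history else None
        history.append(amount)
        return {"key": key, "amount": amount, "baseline": baseline}


state = KeyedState(history_size=2)
enriched = [
    state.enrich("user-1", 10.0),
    state.enrich("user-1", 20.0),
    state.enrich("user-1", 90.0),
    state.enrich("user-2", 5.0),
]
```

The third event for `user-1` arrives with a baseline of 15.0, so a downstream model can see that 90.0 is far above that user's recent behavior.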

5. Monitoring, Logging, and Feedback Mechanisms

Running real-time AI in production requires continuous monitoring and logging. Observability platforms track latency, throughput, and model accuracy, and apply anomaly detection to these metrics to surface degradation or faults. Feedback loops are essential to trigger retraining workflows or recalibrate data pipelines dynamically.
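The feedback loop described above can be sketched as a rolling monitor that tracks accuracy and latency over the most recent predictions and signals when retraining looks warranted. `ModelMonitor`, the window size, and the accuracy threshold are illustrative assumptions, not values from any particular platform.

```python
import math
from collections import deque


class ModelMonitor:
    """Rolling accuracy and latency over the most recent predictions."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.8):
        self._outcomes = deque(maxlen=window)
        self._latencies_ms = deque(maxlen=window)
        self.min_accuracy = min_accuracy

    def record(self, prediction, label, latency_ms: float) -> None:
        self._outcomes.append(prediction == label)
        self._latencies_ms.append(latency_ms)

    def accuracy(self):
        if not self._outcomes:
            return None
        return sum(self._outcomes) / len(self._outcomes)

    def p95_latency_ms(self):
        """Nearest-rank 95th percentile of the recorded latencies."""
        if not self._latencies_ms:
            return None
        ordered = sorted(self._latencies_ms)
        rank = math.ceil(0.95 * len(ordered))
        return ordered[rank - 1]

    def needs_retraining(self) -> bool:
        """True once the window is full and accuracy is below the floor."""
        window_full = len(self._outcomes) == self._outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy


monitor = ModelMonitor(window=4, min_accuracy=0.75)
for prediction, label, latency in [(1, 1, 10), (0, 0, 12), (1, 0, 50), (1, 1, 11)]:
    monitor.record(prediction, label, latency)
healthy = monitor.needs_retraining()   # accuracy is exactly at the floor
monitor.record(0, 1, 13)               # another miss pushes it below
degraded = monitor.needs_retraining()
```

In practice labels often arrive late, so accuracy lags the predictions it describes. That is one reason latency and input statistics are usually monitored alongside it.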

How AI-Enhanced Data Pipelines Shape Real-Time Systems

AI-enhanced data pipelines build AI directly into the data flow. The models are not separate analytic tools bolted on at the end but intrinsic components that inform every stage of the data lifecycle. This enables more intelligent routing, data enrichment, anomaly detection, and error correction on the fly.

Modern data pipelines with AI capabilities often feature automated feature extraction, adaptive sampling (to focus on relevant data subsets), and real-time metadata generation. These pipelines also support complex event processing (CEP), enabling pattern detection and immediate reaction within streaming contexts.

Implementing AI-Enhanced Data Pipelines

- Automated Preprocessing: AI models can identify and correct data quality issues immediately after ingestion.

- Adaptive Workload Management: Machine learning algorithms predict processing bottlenecks and optimize resource allocation across the pipeline.

- Dynamic Model Selection: Pipelines dynamically switch AI models based on real-time performance metrics or incoming data characteristics.
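Of the three techniques listed above, dynamic model selection is the easiest to sketch. The router below scores every candidate model whenever ground truth arrives and serves predictions from the one with the lowest recent error. `ModelRouter` and the two toy models are hypothetical, and the selection rule (lowest mean absolute error over a rolling window) is one simple choice among many.

```python
from collections import deque


class ModelRouter:
    """Serves predictions from the candidate with the lowest recent error."""

    def __init__(self, models: dict, window: int = 50):
        self.models = models
        self._errors = {name: deque(maxlen=window) for name in models}

    def observe(self, x: float, actual: float) -> None:
        """When ground truth arrives, score every candidate on it."""
        for name, model in self.models.items():
            self._errors[name].append(abs(model(x) - actual))

    def best_model(self) -> str:
        def mean_error(name: str) -> float:
            errors = self._errors[name]
            return sum(errors) / len(errors) if errors else 0.0
        # Ties (including the cold start) go to the first model registered.
        return min(self.models, key=mean_error)

    def predict(self, x: float):
        name = self.best_model()
        return name, self.models[name](x)


router = ModelRouter({
    "constant": lambda x: 10.0,   # toy baseline
    "linear": lambda x: 2.0 * x,  # toy alternative
})
initial_choice = router.best_model()
router.observe(1.0, 2.0)
router.observe(3.0, 6.0)
chosen, prediction = router.predict(4.0)
```

Evaluating every candidate on every labeled event costs extra compute. A production router might sample events or evaluate candidates offline instead.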

Challenges and Solutions in Real-Time AI Streaming Architectures

Low Latency vs Model Complexity

Real-time systems demand low latency, yet advanced AI models (like deep neural networks) often require considerable processing time. Balancing this tradeoff calls for model optimization techniques such as quantization and pruning, or for lightweight model architectures like those designed for TinyML deployments.
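Of the optimization techniques mentioned above, quantization is the simplest to illustrate. The sketch below applies symmetric 8-bit quantization to a list of weights in plain Python: each weight is stored as an integer in [-127, 127] plus one shared scale factor. The function names and sample weights are illustrative. Real frameworks apply the same idea per tensor or per channel with optimized kernels.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]  # made-up sample weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
# Rounding error per weight is at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the cost of an error bounded by `scale / 2` per weight.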

Handling Data Drift and Model Degradation

Patterns in the data stream may shift over time, reducing model accuracy. Continuous monitoring and automated retraining pipelines are crucial to keep models effective and responsive.
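One simple way to monitor for such a shift is to compare the mean of a recent window against a reference sample taken at training time. The sketch below does this with a z-score on the window mean. `MeanShiftDetector`, the window size, and the threshold of 3.0 are assumptions for illustration. Production systems often use richer tests that compare whole distributions.

```python
import math
import statistics
from collections import deque


class MeanShiftDetector:
    """Flags drift when the recent mean moves far from the reference mean."""

    def __init__(self, reference, window: int = 30, z_threshold: float = 3.0):
        self.ref_mean = statistics.fmean(reference)
        self.ref_std = statistics.stdev(reference)
        self.window = deque(maxlen=window)
        self.z_threshold = z_threshold

    def update(self, value: float) -> bool:
        """Add one observation; return True if drift is detected."""
        self.window.append(value)
        if len(self.window) < self.window.maxlen:
            return False  # not enough recent data yet
        recent_mean = statistics.fmean(self.window)
        standard_error = self.ref_std / math.sqrt(len(self.window))
        if standard_error == 0:
            return recent_mean != self.ref_mean
        z_score = abs(recent_mean - self.ref_mean) / standard_error
        return z_score > self.z_threshold


reference = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10]  # made-up training sample
detector = MeanShiftDetector(reference, window=5)
stable_flags = [detector.update(v) for v in [10, 9, 11, 10, 10]]
shifted_flags = [detector.update(v) for v in [15, 15, 15, 15, 15]]
```

In this run the detector stays quiet on the stable values and fires on the second shifted value, once enough of the window reflects the new level. A drift flag like this would typically trigger the retraining pipeline rather than change the model directly.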

Scalability and Fault Tolerance

To sustain high throughput, architectures must be horizontally scalable and resilient to failures, employing container orchestration (e.g., Kubernetes) and state checkpointing mechanisms.
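State checkpointing can be sketched in a few lines: the operator periodically saves its state together with its input offset, and after a crash a fresh instance restores the last snapshot and replays input from that offset. This is a simplified, single-process illustration. `CountingOperator`, `run`, and the dictionary used as a checkpoint store are hypothetical, and real engines coordinate snapshots across many workers.

```python
import json


class CountingOperator:
    """Counts events per key and tracks how far into the input it has read."""

    def __init__(self):
        self.counts = {}
        self.offset = 0  # index of the next event to process

    def process(self, key: str) -> None:
        self.counts[key] = self.counts.get(key, 0) + 1
        self.offset += 1

    def snapshot(self) -> str:
        return json.dumps({"counts": self.counts, "offset": self.offset})

    @classmethod
    def restore(cls, snapshot: str) -> "CountingOperator":
        data = json.loads(snapshot)
        operator = cls()
        operator.counts = data["counts"]
        operator.offset = data["offset"]
        return operator


def run(events, operator, store, checkpoint_every=3, fail_at=None):
    """Process events from operator.offset, checkpointing into `store`."""
    for index in range(operator.offset, len(events)):
        if index == fail_at:
            raise RuntimeError("simulated worker crash")
        operator.process(events[index])
        if operator.offset % checkpoint_every == 0:
            store["latest"] = operator.snapshot()


events = list("abacabad")
store = {"latest": CountingOperator().snapshot()}
try:
    run(events, CountingOperator(), store, fail_at=5)
except RuntimeError:
    pass  # state after the last checkpoint (events 3 and 4) is lost

recovered = CountingOperator.restore(store["latest"])
run(events, recovered, store)  # replays from offset 3 onward
```

Because state and offset are saved together, replayed events are not double-counted, and the recovered counts match an uninterrupted run.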

Security and Privacy

Streaming applications often process sensitive data. Techniques such as federated learning and differential privacy are increasingly integrated to safeguard data within real-time AI pipelines.
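To show what differential privacy looks like at the level of a single aggregate, the sketch below adds Laplace noise to a count before releasing it. The function names and the epsilon value are illustrative assumptions. This is a teaching example, not a hardened implementation: it omits privacy budget accounting and does not address known floating-point weaknesses of naive Laplace sampling.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    Adding or removing one person changes a count by at most 1,
    so the sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # fixed seed so the sketch is reproducible
releases = [private_count(100, epsilon=0.5, rng=rng) for _ in range(20000)]
average_release = sum(releases) / len(releases)
```

Any single release is noisy, but the noise has zero mean, so aggregate statistics stay useful. A smaller epsilon gives stronger privacy and more noise.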

Emerging Trends in Real-Time AI Processing for Streaming Applications

As technology evolves, new approaches are emerging to enhance real-time AI processing:

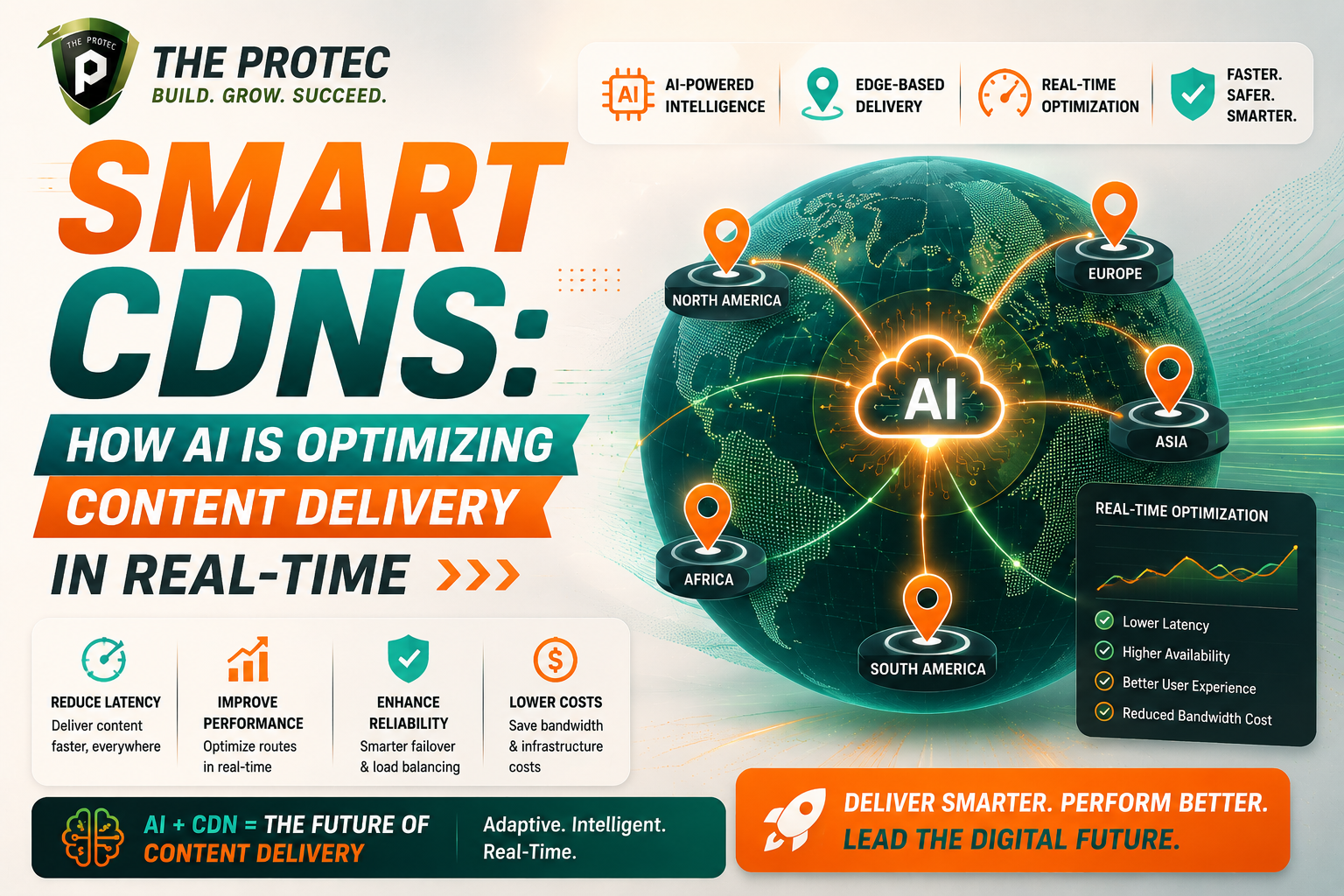

- Edge AI Integration: Running AI inference closer to data sources avoids network round trips and can reduce latency substantially in IoT and mobile use cases, supporting decentralized streaming architectures.

- AI-Driven Orchestration: Leveraging AI to autonomously manage resource allocation, error recovery, and workflow optimization in real time.

- Multi-Modal Streaming AI: Processing and correlating diverse data types (video, text, sensor data) concurrently for richer insights.

- Explainable AI (XAI) in Streaming: Integrating interpretability tools within real-time AI models to provide transparency for critical applications.

Practical Applications Powered by Real-Time AI Streaming

Organizations across industries are unlocking value using real-time AI streaming systems:

- Financial Services: Fraud detection systems analyze transaction streams instantly to flag suspicious activities.

- Telecommunications: Network anomaly detection and adaptive bandwidth management improve user experience dynamically.

- Healthcare: Continuous patient monitoring systems alert professionals in real time upon detecting critical events.

- Entertainment: Video streaming platforms deliver personalized recommendations instantly based on live user interaction.

FAQ

What distinguishes real-time AI processing from traditional batch AI?

Real-time AI processing handles live data streams continuously with minimal latency, enabling instant decision-making and adaptive responses. Traditional batch AI processes data in larger, scheduled chunks, which introduces delays unsuitable for time-critical applications.

How do AI models stay accurate in streaming data environments where data patterns constantly change?

Streaming AI systems deploy monitoring and feedback loops to detect data drift and model degradation. Automated retraining pipelines update models with recent data, maintaining accuracy and relevance.

Which technologies are recommended for building scalable real-time AI pipelines?

Apache Kafka or AWS Kinesis are common choices for ingestion, and Apache Flink or Spark Structured Streaming for stream processing. For AI inference, containerized microservices built on TensorFlow Serving or ONNX Runtime, with GPU acceleration where the model benefits from it, are widely used. Cloud-native monitoring tools provide observability.

Conclusion

The synthesis of real-time AI processing and streaming data architectures is crafting the future of intelligent applications. By designing thoughtful, scalable pipelines that integrate AI seamlessly, businesses can capitalize on the relentless flow of information to derive timely insights and automate critical decisions. Understanding the architecture and embracing emerging trends unlock a new realm of possibilities where data becomes a live asset, continuously propelling innovation and competitive advantage.

For those eager to dive deeper into real-time stream processing and AI integration, resources like Apache Flink and Apache Kafka provide extensive documentation and case studies showcasing the power of these technologies.