Introduction

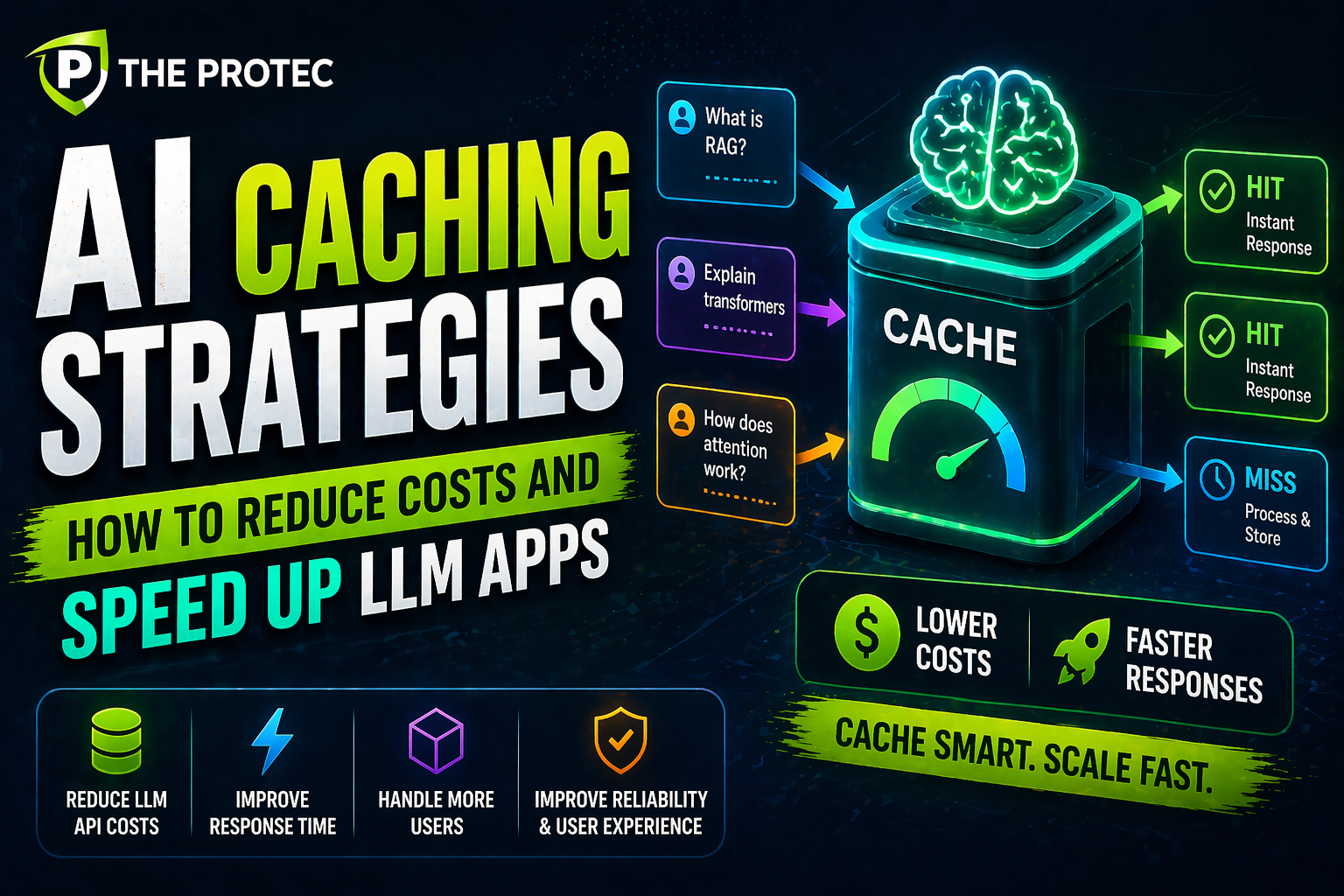

As large language models (LLMs) become integral to numerous AI-driven applications, managing their operational costs and performance has become a critical concern. These models often require substantial compute resources, leading to high latency and escalating expenses, especially when deployed at scale. Enter AI caching: a set of strategies designed to optimize resource consumption by intelligently reusing prior work and prior responses. This approach can substantially reduce inference latency and operational costs while preserving the quality and freshness of outputs.

In this article, we dive deep into AI caching strategies with a focus on token caching and semantic caching. We’ll explore how these methods work under the hood, their impact on real-world systems, and the tangible performance gains they deliver in LLM applications today.

Understanding AI Caching in LLMs

AI caching refers to storing and reusing outputs from language models to avoid redundant computations on similar or identical inputs. Unlike traditional caching in web development, AI caching must contend with the probabilistic nature of LLMs, their token-by-token generation, and contextual dependencies.

Implementing effective AI caching strategies involves balancing response freshness, cache hit rates, and computational savings. Two popular approaches have emerged: token caching and semantic caching. Both aim to reduce redundant computation but approach the problem from different angles.

Token Caching: Reusing Generated Tokens to Save Compute

What Is Token Caching?

Token caching involves saving the work an LLM has already done for a sequence of tokens so that it does not have to be repeated. Since LLMs typically process and generate text one token at a time, the state computed for those tokens can be stored and reused in later requests when the same prompt context, or an extension of it, appears.

For example, if your application frequently processes similar prompts or conversations, tokens generated in the initial stage can be cached. When the model receives a prompt that shares a prefix with prior inputs, it can skip recomputation of the cached tokens and directly continue generation from that point.

How Token Caching Works Technically

- Prompt Prefix Matching: The system searches the cache for tokens previously generated from identical prompt prefixes.

- State Reuse: Transformer models compute key and value states for every token in their attention layers. Token caching keeps these states and reuses them, so repeated tokens do not have to pass through the model again.

- Incremental Generation: The LLM only needs to generate new tokens starting from the cached point rather than processing the entire prompt again.
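
The three steps above can be sketched in a few lines. This is a toy illustration, not how any particular inference engine implements it: `PrefixCache`, `tokens_to_process`, and the token IDs are hypothetical names and values, and the cached "state" is a placeholder string standing in for the key-value tensors a real model would keep.

```python
from typing import Dict, List, Optional, Tuple


class PrefixCache:
    """Toy prefix cache: maps a token-ID prefix to an opaque cached state."""

    def __init__(self) -> None:
        self._store: Dict[Tuple[int, ...], object] = {}

    def put(self, tokens: List[int], state: object) -> None:
        self._store[tuple(tokens)] = state

    def longest_prefix(self, tokens: List[int]) -> Tuple[int, Optional[object]]:
        """Return (matched_length, state) for the longest cached prefix."""
        # Try the full prompt first, then progressively shorter prefixes.
        for end in range(len(tokens), 0, -1):
            key = tuple(tokens[:end])
            if key in self._store:
                return end, self._store[key]
        return 0, None


def tokens_to_process(cache: PrefixCache, prompt: List[int]) -> List[int]:
    """Return only the suffix of the prompt that is not covered by the cache."""
    matched, _state = cache.longest_prefix(prompt)
    return prompt[matched:]


cache = PrefixCache()
system_prompt = [101, 7, 7, 42]  # hypothetical token IDs shared by many requests
cache.put(system_prompt, "kv-state-for-system-prompt")  # placeholder state

new_prompt = system_prompt + [9, 13]
remaining = tokens_to_process(cache, new_prompt)
print(remaining)  # [9, 13]
```

Only the two new tokens remain to be processed; the shared prefix is served from the cache. The linear scan over prefix lengths keeps the sketch short and would likely be replaced by a trie or block-level hashing in a production system.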

Benefits of Token Caching

- Lower Latency: By avoiding recomputation, token caching accelerates response times significantly.

- Cost Savings: The reduced number of transformer computations means less GPU utilization and lower inference costs.

- Scalability: Applications serving many similar requests (chatbots, search engines, document summarizers) benefit most.

Semantic Caching: Capturing Meaning Beyond Tokens

What Is Semantic Caching?

Semantic caching moves beyond exact token reuse and focuses on caching outputs anchored to semantically similar inputs. By leveraging embeddings and vector similarity techniques, semantic caching enables reuse of model responses for queries that are close in meaning, even if phrased differently.

This approach is particularly effective in large-scale applications where users submit natural-language queries with variations, yet seek similar answers. Semantic caching can return cached results for near-duplicate or related queries without full regeneration.

Implementing Semantic Caching

- Embedding Generation: Convert incoming queries into fixed-length vectors representing their semantics using an embedding model.

- Similarity Search: Use vector databases or approximate nearest neighbor (ANN) algorithms to find cached responses with embeddings near the current query.

- Thresholding: Define similarity thresholds ensuring cached responses serve only sufficiently similar queries to maintain relevance.

- Cache Update Policy: Incorporate mechanisms to refresh cache entries, especially for dynamic data or evolving user intents.
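
A minimal sketch of the embedding, similarity search, and thresholding steps follows. Everything here is an assumption made for illustration: `toy_embed` uses plain word counts as a stand-in for a real embedding model, `SemanticCache` scans its entries linearly instead of using a vector database or ANN index, and the 0.8 threshold is arbitrary. The update policy is left out.

```python
import math
from collections import Counter
from typing import List, Optional, Tuple


def toy_embed(text: str) -> Counter:
    """Stand-in for an embedding model: sparse word counts (illustration only)."""
    words = (w.strip(".,?!") for w in text.lower().split())
    return Counter(w for w in words if w)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold  # hypothetical value; tune per application
        self._entries: List[Tuple[Counter, str]] = []

    def put(self, query: str, response: str) -> None:
        self._entries.append((toy_embed(query), response))

    def get(self, query: str) -> Optional[str]:
        """Return the best cached response if it clears the threshold."""
        q = toy_embed(query)
        best_score, best_response = 0.0, None
        for emb, response in self._entries:  # linear scan; real systems use ANN
            score = cosine(q, emb)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.8)
cache.put("How do I reset my password?", "Use the reset link on the sign-in page.")

paraphrase_hit = cache.get("How can I reset my password")
unrelated_miss = cache.get("What is the weather in Paris today?")
print(paraphrase_hit)
print(unrelated_miss)  # None
```

The paraphrase shares five of six words with the stored query, so it clears the threshold, while the unrelated query shares none and falls through to the model. With a real embedding model the same structure applies, but the right threshold has to be found empirically.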

Advantages of Semantic Caching

- Higher Cache Hit Rates: Captures more reuse opportunities than strict token matching.

- Improved User Experience: Delivers faster responses for diverse user queries.

- Cost Efficiency: Reduces redundant model runs across semantic clusters of input.

Real-World Performance Gains and Applications

To understand the benefits of AI caching strategies, consider these real-world applications:

Conversational AI and Chatbots

Chatbots often receive repeated or similar queries from users. Token caching in this context avoids reprocessing conversational histories, yielding faster turnarounds. Semantic caching further enhances responsiveness by serving cached answers for analogous questions.

Document Search and Summarization

Applications delivering summaries or search results over large documentation sets benefit from caching query outputs. Semantic caching matches new queries to earlier ones approximately, which significantly lowers the number of full inference calls, especially when queries overlap in intent.

Cost Reduction Metrics

- Inference Cost Savings: Case studies report reductions of 40-60% in API calls or GPU inference time with effective caching.

- Latency Improvements: Response times have dropped by 30-50% in latency-sensitive production environments.

- Resource Optimization: Enables serving more users on the same infrastructure budget.
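
How much of this a given system sees depends mostly on its hit rate. A rough back-of-the-envelope model, assuming every request pays for the cache lookup and only misses pay for inference, can be written as below. The function names and all numbers are hypothetical and are not taken from the case studies above.

```python
def expected_latency_ms(hit_rate: float, inference_ms: float, lookup_ms: float) -> float:
    """Average latency per request: every request pays the lookup,
    only cache misses pay for model inference."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return lookup_ms + (1.0 - hit_rate) * inference_ms


def latency_reduction(hit_rate: float, inference_ms: float, lookup_ms: float) -> float:
    """Fractional improvement relative to calling the model on every request."""
    return 1.0 - expected_latency_ms(hit_rate, inference_ms, lookup_ms) / inference_ms


# Hypothetical workload: half of all requests are served from the cache.
avg = expected_latency_ms(hit_rate=0.5, inference_ms=1000.0, lookup_ms=10.0)
reduction = latency_reduction(hit_rate=0.5, inference_ms=1000.0, lookup_ms=10.0)
print(f"{avg:.0f} ms average, {reduction:.0%} lower than uncached")
# 510 ms average, 49% lower than uncached
```

The same model shows the downside: with a hit rate of zero, the lookup is pure overhead and average latency is slightly worse than without a cache.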

Best Practices for Implementing AI Caching

- Monitor Cache Hit Rates: Track usage to fine-tune caching thresholds and eviction policies.

- Combine Strategies: Employ hybrid approaches that use token caching for exact matches and semantic caching for similar queries.

- Cache Security: Ensure sensitive user data is handled securely when storing cached responses.

- Versioning: Account for model updates by invalidating or refreshing cached data.

- Cache Size Management: Use metrics-driven policies to evict stale or infrequent entries.
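
Several of these practices fit naturally into one small structure. The sketch below is an assumption-laden illustration, not a recommended design: `VersionedLRUCache` is a hypothetical class that keys entries by model version, evicts the least recently used entry when full, and counts hits and misses. It does not address cache security.

```python
from collections import OrderedDict
from typing import Optional, Tuple


class VersionedLRUCache:
    """Exact-match response cache with versioned keys, LRU eviction, and hit stats."""

    def __init__(self, model_version: str, max_entries: int = 1000) -> None:
        self.model_version = model_version
        self.max_entries = max_entries
        self._data: "OrderedDict[Tuple[str, str], str]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def _key(self, prompt: str) -> Tuple[str, str]:
        # Including the version means entries from an older model never match.
        return (self.model_version, prompt)

    def get(self, prompt: str) -> Optional[str]:
        key = self._key(prompt)
        if key in self._data:
            self._data.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._data[key]
        self.misses += 1
        return None

    def put(self, prompt: str, response: str) -> None:
        key = self._key(prompt)
        self._data[key] = response
        self._data.move_to_end(key)
        while len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict least recently used

    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


cache = VersionedLRUCache("model-v1", max_entries=2)
cache.put("a", "answer A")
cache.put("b", "answer B")
hit = cache.get("a")        # hit; "a" becomes most recently used
cache.put("c", "answer C")  # cache is full, so "b" is evicted
evicted = cache.get("b")    # miss
rate = cache.hit_rate()     # 1 hit out of 2 lookups

cache.model_version = "model-v2"
stale = cache.get("a")      # miss: the model-v1 entry no longer matches
```

Entries written under the old version are not deleted immediately; they simply stop matching and age out through normal eviction. An explicit purge on model upgrade would be an equally reasonable choice.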

Frequently Asked Questions

How does token caching differ from semantic caching?

Token caching strictly reuses previously generated token sequences for identical prompt prefixes, leading to exact reuse. Semantic caching leverages meaning similarity across different phrasing, using embedding-based search to provide approximate reuse of model outputs.

Can AI caching compromise the freshness of responses?

If not carefully managed, caching can serve outdated or less relevant results. Implementing refresh policies, version control, and similarity thresholds helps maintain response accuracy and freshness while still gaining efficiency.
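
One simple refresh policy is a time-to-live (TTL): an entry is served only while it is younger than a fixed age. The sketch below assumes a hypothetical `TTLCache` class and an arbitrary 60-second TTL; the clock is injectable so the expiry behavior can be shown without waiting.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TTLCache:
    """Cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self._clock = clock
        self._data: Dict[str, Tuple[float, str]] = {}

    def put(self, key: str, value: str) -> None:
        self._data[key] = (self._clock(), value)

    def get(self, key: str) -> Optional[str]:
        entry = self._data.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if self._clock() - stored_at > self.ttl:
            del self._data[key]  # too old: drop it and force regeneration
            return None
        return value


now = [0.0]  # fake clock for the demonstration
cache = TTLCache(ttl_seconds=60, clock=lambda: now[0])
cache.put("q", "cached answer")
fresh = cache.get("q")    # still within the TTL
now[0] = 61.0
expired = cache.get("q")  # past the TTL, so the entry is dropped
```

A short TTL favors freshness and a long one favors hit rate, so the value is usually chosen per data source rather than globally.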

Is semantic caching computationally expensive?

While semantic caching involves embedding storage and similarity searches, advances in vector databases and approximate nearest neighbor algorithms make these lookups efficient. When balanced against GPU inference costs, semantic caching is usually computationally advantageous.

Conclusion

AI caching strategies like token caching and semantic caching are pivotal in the optimization of LLM-powered applications. They reduce the cost and latency hurdles of large-scale deployments, enabling faster, more cost-effective, and scalable AI experiences. By understanding when and how to implement these caching methods, developers and engineers can unlock significant performance gains and deliver superior AI-driven interactions.

For further insights into optimizing AI systems and best practices, consider exploring the documentation for AI infrastructure tools such as TensorFlow Serving and the Pinecone vector database.