Introduction

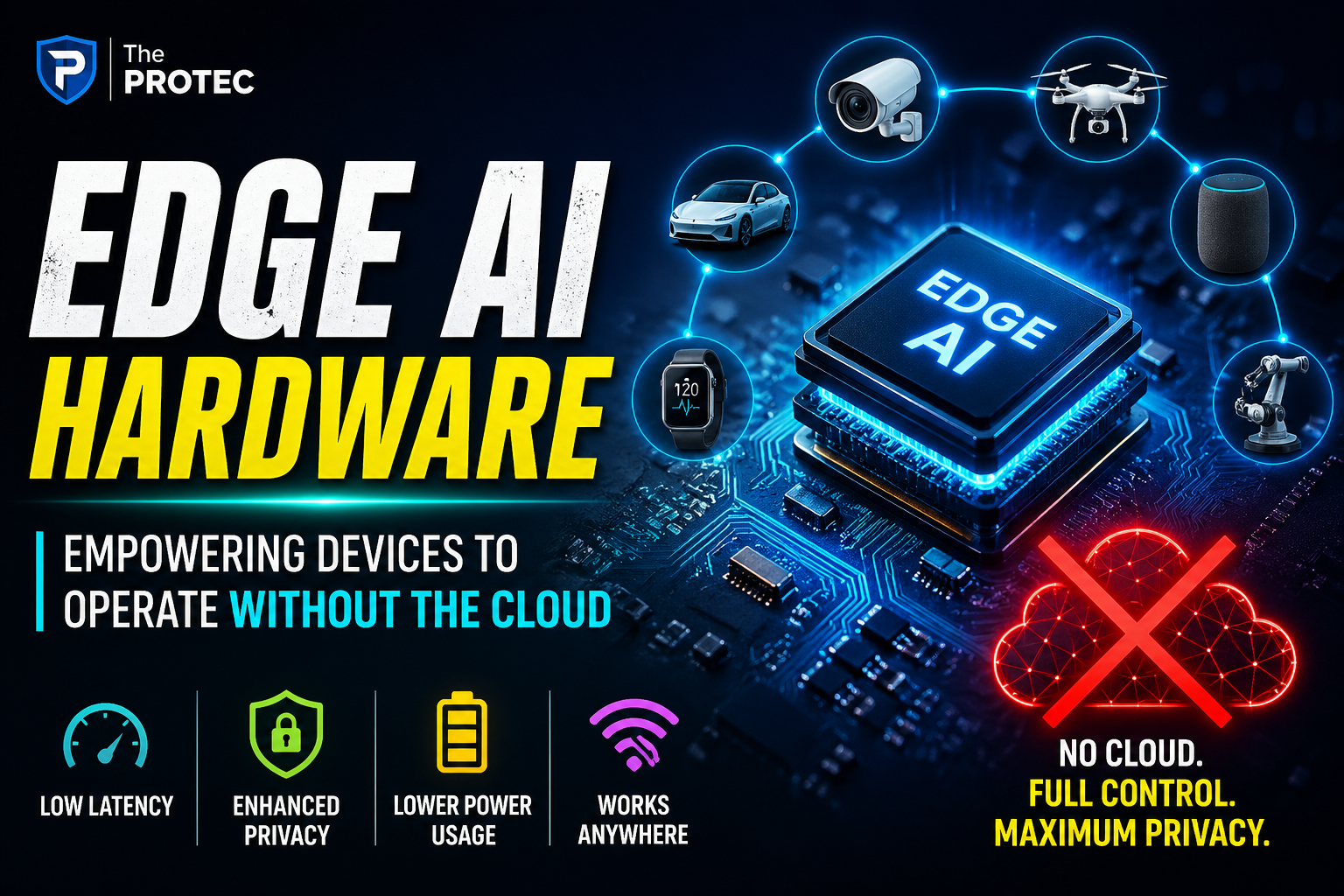

As artificial intelligence continues to transform industries, its integration into everyday devices is reshaping how we interact with technology. Traditionally, many AI-powered applications have relied heavily on cloud computing, sending data to remote servers for processing. However, a growing trend in AI hardware development is pushing intelligence closer to the user, directly onto the devices themselves. This approach, known as edge AI, allows devices to run AI computations locally, without depending on cloud infrastructure.

Edge AI hardware enables offline AI processing, which delivers significant advantages including improved data privacy, reduced latency, and decreased reliance on continuous internet connectivity. With advancements in specialized chips and system designs, this shift is driving the future of AI-powered devices across sectors from healthcare to automotive, smart homes, and industrial automation.

What Is Edge AI Hardware?

Edge AI hardware refers to the specialized semiconductor components and computing architectures built to run artificial intelligence algorithms directly on edge devices such as smartphones, IoT gadgets, security cameras, wearables, and autonomous robots. Unlike centralized cloud AI models that process data remotely, edge AI hardware embeds processors optimized for machine learning workloads close to the data source.

Key types of AI chips powering edge devices include:

- Neural processing units (NPUs): Dedicated processors specialized for neural network computations.

- Tensor processing units (TPUs): Google's AI accelerators built for tensor operations, offered for edge devices as the Edge TPU.

- Field-programmable gate arrays (FPGAs): Flexible hardware that can be reprogrammed for efficient AI tasks.

- Systems on chip (SoCs): Integrated platforms combining CPUs, GPUs, and AI accelerators optimized for edge AI.

These components are increasingly incorporated into devices to allow them to handle AI inference on-site, ensuring real-time data analysis without continuous cloud interaction.

The Benefits of Offline AI Processing on Edge Devices

1. Enhanced Privacy and Security

One of the foremost advantages of offline AI processing is improved data privacy. Since sensitive information doesn’t need to be transmitted over the internet or stored on external servers, risks related to data interception, breaches, or unauthorized access diminish significantly. For industries dealing with highly confidential data such as healthcare, finance, or personal user information, keeping AI processing local adds a critical layer of protection.

Devices equipped with edge AI hardware can analyze biometric details, voice commands, or behavioral patterns entirely on-device. Local processing lets users keep control of their data and reduces their exposure to cloud providers' policies and vulnerabilities.

2. Decreased Latency and Faster Responses

Routing AI workloads to the cloud adds a network round trip to every request, and the resulting latency is worst in environments with unreliable or slow internet connections. Edge AI hardware avoids this delay by handling inference at the source, enabling near-instant decision-making and interaction.

Fast response times are essential in scenarios like autonomous vehicles avoiding hazards, industrial machines detecting faults, or smart assistants responding promptly to commands. This ultra-low latency directly increases safety, user satisfaction, and operational efficiency.
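The comparison can be made concrete with a simple latency budget. The sketch below is illustrative only: the function names and every timing value are assumptions chosen for the example, not measurements from any real network or device.

```python
def cloud_latency_ms(uplink_ms, server_inference_ms, downlink_ms):
    """Response time when inference runs on a remote server."""
    return uplink_ms + server_inference_ms + downlink_ms


def edge_latency_ms(local_inference_ms):
    """Response time when inference runs on the device itself."""
    return local_inference_ms


def meets_deadline(latency_ms, deadline_ms):
    """True if the response arrives within the application's deadline."""
    return latency_ms <= deadline_ms


# Hypothetical values: a fast server behind a 40 ms network hop each way,
# versus a slower on-device accelerator with no network hop at all.
DEADLINE_MS = 50
cloud = cloud_latency_ms(uplink_ms=40, server_inference_ms=5, downlink_ms=40)
edge = edge_latency_ms(local_inference_ms=20)

print("cloud:", cloud, "ms, meets deadline:", meets_deadline(cloud, DEADLINE_MS))
print("edge: ", edge, "ms, meets deadline:", meets_deadline(edge, DEADLINE_MS))
```

Under these assumed numbers the server computes faster than the device, yet the network hops alone exceed the deadline. Real figures vary widely with the network and the hardware.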

3. Reduced Cloud Dependency and Bandwidth Usage

Cloud infrastructure incurs costs and relies on stable network connectivity. By processing AI tasks locally, devices minimize their dependence on cloud services, reducing operational costs associated with continuous data transfer and cloud computing resources.

This also alleviates network congestion and bandwidth consumption, crucial for remote locations or large-scale IoT deployments. Offline AI processing allows edge devices to operate reliably even with intermittent or no internet access, enhancing overall system resilience.
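A back-of-the-envelope calculation shows why bandwidth matters at scale. The sketch below compares a camera that streams video to the cloud with one that analyzes frames locally and uploads only short event records. The bitrate, event count, and record size are assumed values for illustration, not figures for any particular product.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def streaming_bytes_per_day(bitrate_bps):
    """Bytes uploaded per day by a device streaming continuously."""
    return bitrate_bps * SECONDS_PER_DAY // 8


def event_bytes_per_day(events_per_day, bytes_per_event):
    """Bytes uploaded per day when only detected events are reported."""
    return events_per_day * bytes_per_event


# Assumed: a 2 Mbit/s video stream versus 200 events of 512 bytes each.
streamed = streaming_bytes_per_day(2_000_000)
events = event_bytes_per_day(200, 512)

print(f"continuous streaming: {streamed / 1e9:.1f} GB/day")
print(f"events only:          {events / 1e3:.1f} kB/day")
```

Multiplied across thousands of devices, the gap between the two approaches largely determines whether a deployment is practical on a constrained or metered link.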

4. Energy Efficiency and Extended Device Operation

Modern edge AI hardware is designed not only for performance but also for energy-conscious operation. Running inference on a specialized AI accelerator can consume less power than transmitting data to the cloud and back over a wireless link, which matters for battery-powered devices like wearables or mobile gadgets.

Efficient AI hardware supports longer autonomy between charges, making edge AI devices more practical and convenient without sacrificing intelligent capabilities.

Latest AI Hardware Trends Empowering Edge Computing

Miniaturized, High-Performance AI Chips

Silicon manufacturers are pushing the boundaries with smaller, more powerful AI chips. Advances in semiconductor technology, such as 3 nm process nodes and novel materials, enable compact chips that deliver strong machine learning performance while fitting into slim devices.

For instance, leading companies have released next-generation NPUs and AI SoCs optimized for image recognition, natural language understanding, and sensor fusion, all tailored specifically for edge use cases.

Specialized Architectures for Real-Time Inference

Edge AI hardware increasingly adopts heterogeneous computing architectures combining CPUs, GPUs, and AI cores that can handle diverse machine learning models efficiently. Architectures focusing on parallelism and low-precision arithmetic allow real-time inference with little loss of accuracy.
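To illustrate what low-precision arithmetic means in practice, here is a minimal sketch of symmetric 8-bit quantization, one common way of storing floating-point weights as small integers. It is a simplified pure-Python illustration with made-up weights, not the scheme used by any specific chip or framework.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Avoid division by zero when every value is 0.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.52, -1.27, 0.003, 0.9]  # illustrative values only
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 weights:", q)
print("largest rounding error:", max_error)
```

Each weight now fits in one byte instead of four, and the rounding error per weight is bounded by half the scale. Whether that error is acceptable depends on the model and has to be checked empirically.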

Real-time capabilities are vital for time-sensitive applications such as robotics, augmented reality, and security monitoring.

Integration with 5G and Advanced Sensors

The interplay between edge AI hardware and 5G networks is accelerating device intelligence by supporting high-throughput data exchange when connectivity is available, while preserving the ability for offline processing.
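One way to combine the two modes is a local-first policy: always run the on-device model, and consult a remote model only when the local result is uncertain and a connection happens to be available. The sketch below is a hypothetical illustration; `classify`, the model callables, `is_online`, and the 0.8 confidence threshold are all assumptions made for the example.

```python
def classify(sample, local_model, cloud_model=None,
             is_online=lambda: False, min_confidence=0.8):
    """Return (label, source), preferring the on-device result."""
    label, confidence = local_model(sample)
    # Stay local if the result is confident enough or no cloud is reachable.
    if confidence >= min_confidence or cloud_model is None or not is_online():
        return label, "local"
    try:
        cloud_label, _ = cloud_model(sample)
        return cloud_label, "cloud"
    except ConnectionError:
        # The link dropped mid-request: fall back to the local answer.
        return label, "local"


# Stub models standing in for real inference engines.
def unsure_local_model(sample):
    return "cat", 0.6


def remote_model(sample):
    return "dog", 0.99


print(classify("frame-1", unsure_local_model, remote_model, is_online=lambda: True))
print(classify("frame-1", unsure_local_model, remote_model, is_online=lambda: False))
```

The device never blocks on the network: with no connection, or with a failed request, it still returns the local result.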

Advanced sensors including LiDAR, thermal cameras, and bio-sensors integrated with edge AI chips provide richer context awareness for smart systems, increasing autonomy and reliability.

Applications Driving the Shift to Edge AI Hardware

Smart Home Devices

From smart speakers to security systems, integrating edge AI hardware allows smart home gadgets to perform speech recognition and video analytics locally, ensuring user privacy and responsive control even during internet outages.

Healthcare Wearables

Wearable devices equipped with local AI can continuously monitor vital signs and detect anomalies in real time, without exposing sensitive health data through cloud uploads.
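As a rough illustration of on-device anomaly detection, the sketch below flags readings that deviate sharply from a rolling window of recent values. It is a deliberately simple statistical stand-in for a trained model: the class name, window size, warm-up length, and threshold are assumptions for the example and have no clinical validity.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags values far from the mean of a window of recent readings."""

    def __init__(self, window=30, threshold=3.0, warmup=5):
        self.history = deque(maxlen=window)  # oldest readings drop off
        self.threshold = threshold
        self.warmup = warmup

    def update(self, value):
        """Record a reading and return True if it looks anomalous."""
        is_anomaly = False
        if len(self.history) >= self.warmup:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        self.history.append(value)
        return is_anomaly


detector = RollingAnomalyDetector()
heart_rates = [72, 74, 71, 73, 75, 72, 74, 73, 140]  # made-up readings
flags = [detector.update(bpm) for bpm in heart_rates]
print(flags)
```

All state lives in a small fixed-size buffer, so the check runs in constant memory and no reading ever has to leave the device.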

Autonomous Vehicles and Drones

Self-driving cars and delivery drones use edge AI to detect obstacles and make split-second navigation decisions without relying on central servers, which is critical for safety and autonomy.

Industrial Automation and Predictive Maintenance

Factories increasingly deploy edge AI-enabled robots and sensors to monitor equipment health and optimize production flows. Offline processing reduces latency and prevents disruptions caused by connectivity loss.

Challenges Faced by Edge AI Hardware Development

Despite tremendous progress, edge AI hardware designers face challenges such as balancing computational power against thermal limits and miniaturization constraints. Additionally, creating AI models optimized for on-device deployment requires expertise in model compression techniques, such as pruning and quantization, that shrink a model without sacrificing much accuracy.
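Pruning, for example, can be sketched in a few lines. The version below is magnitude pruning, one of the simplest variants: it zeroes out the weights with the smallest absolute values. The function name and the sample weights are illustrative assumptions. Production toolchains typically prune gradually and fine-tune the model afterward to recover accuracy.

```python
def prune_by_magnitude(weights, sparsity):
    """Return a copy of weights with the smallest fraction set to zero."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


weights = [0.5, -0.01, 0.2, -0.9, 0.03, 0.0004]  # illustrative values only
pruned = prune_by_magnitude(weights, sparsity=0.5)
print(pruned)
```

Zeroed weights can be skipped or stored compactly, but only if the runtime and hardware support sparse computation; otherwise pruning reduces model size without improving speed.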

Security concerns also persist: edge devices themselves must be protected from physical tampering and firmware attacks, since they are widely distributed and may lack robust physical security.

Future Outlook

The momentum towards edge AI hardware will continue to accelerate as demands for privacy, instant decision-making, and autonomy grow. Emerging trends such as neuromorphic computing and analog AI chips promise even greater efficiency gains. Developers and manufacturers focusing on tight hardware-software co-design will unlock new capabilities for intelligent devices operating completely independently of the cloud.

Frequently Asked Questions (FAQ)

What distinguishes edge AI devices from traditional AI systems?

Edge AI devices perform artificial intelligence computations locally on the device rather than relying on cloud servers. This local processing improves privacy, reduces latency, and enables offline usage, unlike traditional AI systems that require constant cloud communication.

How does offline AI processing contribute to user privacy?

By keeping data processing on the device itself, offline AI processing prevents sensitive information from being transmitted to external servers, minimizing exposure to hacking or unauthorized access and giving users more control over their data.

Are edge AI devices capable of complex AI tasks compared to cloud-based systems?

While edge AI devices are increasingly powerful thanks to advances in dedicated AI hardware, they generally run optimized inference rather than large-scale model training, which still usually depends on cloud infrastructure.

Conclusion

Edge AI hardware is revolutionizing the way devices handle intelligence by enabling powerful offline AI processing that champions privacy, speed, and independence from the cloud. As AI hardware trends continue evolving, expect a new generation of smart, autonomous devices that operate seamlessly under all network conditions, reshaping industries and user experiences alike.