Introduction

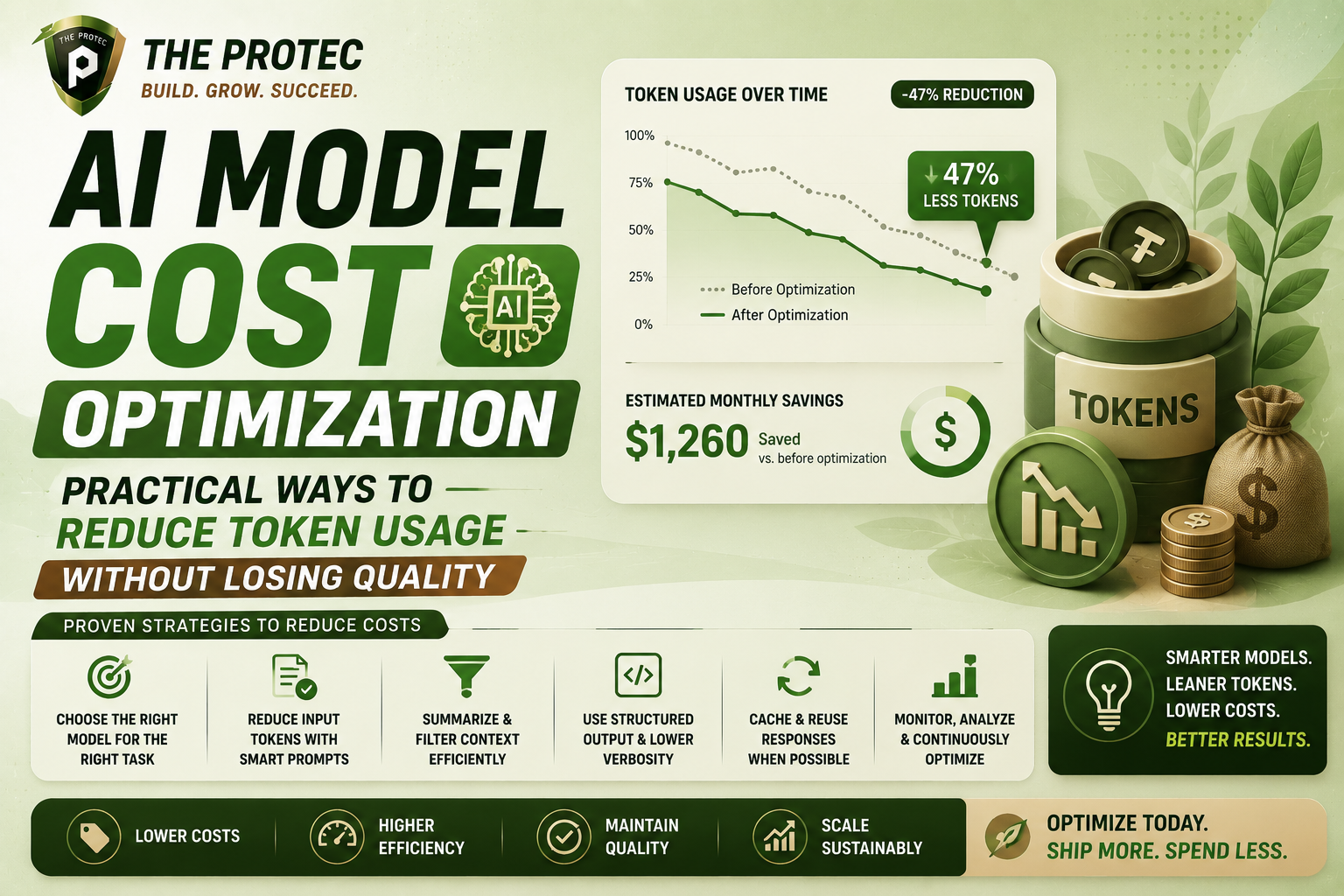

As large language models (LLMs) become integral to AI-driven applications, operational costs can escalate quickly because most APIs bill per token. Managing these costs without sacrificing output quality is a critical challenge for businesses and developers alike. This article covers practical strategies for reducing token usage so that you spend less on your AI models while keeping output quality intact.

Understanding Token Usage and Cost Drivers in AI Models

Before exploring optimization tactics, it’s essential to grasp how token usage affects your overall expenses. Providers of hosted language models, such as the GPT family, typically bill by the number of tokens processed, counting both input and output, and output tokens are often priced higher than input tokens. Tokens are pieces of words or characters, and a higher token count translates directly into higher costs.

Token usage depends heavily on input prompt length, output length, and the complexity of the task. Thus, cutting unnecessary tokens while preserving context is the foundation for lowering API bills.
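
To make the relationship between token counts and cost concrete, here is a minimal sketch of a per-request cost estimate. The prices and token counts are hypothetical placeholders, not any provider's actual rates; substitute the figures from your own price list and usage logs.

```python
# Hypothetical prices in dollars per 1,000 tokens (assumptions, not real rates).
INPUT_PRICE_PER_1K = 0.001
OUTPUT_PRICE_PER_1K = 0.002


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_1k: float = INPUT_PRICE_PER_1K,
    output_price_per_1k: float = OUTPUT_PRICE_PER_1K,
) -> float:
    """Return the estimated cost of one request in dollars."""
    input_cost = (input_tokens / 1000) * input_price_per_1k
    output_cost = (output_tokens / 1000) * output_price_per_1k
    return input_cost + output_cost


if __name__ == "__main__":
    # Compare a verbose prompt with a trimmed one at the same output length.
    before = estimate_cost(input_tokens=1200, output_tokens=300)
    after = estimate_cost(input_tokens=700, output_tokens=300)
    requests_per_month = 1_000_000  # hypothetical traffic volume
    print(f"Per request: ${before:.4f} -> ${after:.4f}")
    print(f"Monthly saving: ${(before - after) * requests_per_month:,.2f}")
```

Running the numbers this way before and after a prompt change shows whether the saving is worth the engineering effort at your request volume.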

1. Optimize Prompt Design for Efficient Token Usage

One of the most impactful levers to reduce token consumption lies in crafting concise, strategic prompts:

- Eliminate Redundancies: Review prompts to remove repetitive or non-essential text. Precision in language reduces tokens without compromising clarity.

- Use System-Level Instructions: Adopt system or preamble prompts to set behavior, so you avoid repeating context in every call.

- Employ Template-Based Inputs: Reusing structured templates where only variable fields change helps standardize token counts.

- Leverage Few-Shot Examples Sparingly: While providing examples can guide responses, excessive examples increase token costs. Use representative, minimal examples.

By sharpening prompt content, developers can significantly trim token consumption per request.
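
The system-instruction and template ideas above could be sketched as follows. The prompt wording, the `build_messages` helper, and the role/content message layout are illustrative assumptions modeled on common chat-style APIs. The token estimate uses the rough rule of thumb of about four characters per token for English text, which is only an approximation; use your provider's tokenizer for exact counts.

```python
from string import Template
from typing import Dict, List

# Behavior is set once in a short system instruction (hypothetical wording).
SYSTEM_PROMPT = "You are a support assistant. Answer in at most three sentences."

# Only the variable fields change between calls.
TICKET_TEMPLATE = Template("Product: $product\nIssue: $issue\nReply to the customer.")


def build_messages(product: str, issue: str) -> List[Dict[str, str]]:
    """Build a chat-style message list from the shared system prompt and template."""
    user_prompt = TICKET_TEMPLATE.substitute(product=product, issue=issue)
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_prompt},
    ]


def rough_token_count(text: str) -> int:
    """Rough estimate only: about 4 characters per token for English text."""
    return max(1, len(text) // 4)


if __name__ == "__main__":
    messages = build_messages("Router X", "Wi-Fi drops every hour")
    total = sum(rough_token_count(m["content"]) for m in messages)
    print(f"Approximate prompt tokens: {total}")
```

Because the template is fixed, the prompt size varies only with the field values, which makes per-request cost easier to predict.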

2. Control Output Length Without Sacrificing Quality

Output tokens directly influence costs, so managing response lengths is key:

- Set Token Limits Thoughtfully: Configure maximum token parameters to balance brevity and completeness.

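- Adjust Temperature & Top-P for Precision: Lowering temperature or top-p makes outputs more deterministic and focused. These settings control randomness rather than length, so pair them with an explicit length instruction in the prompt (for example, "answer in two sentences") when brevity is the goal.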

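- Post-Processing Compression: Summarize or simplify outputs before storing them or feeding them into later prompts. This does not reduce the cost of the original generation, but it shrinks the input of every subsequent call that reuses the text.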

These adjustments help keep model responses tight and cost-efficient without degrading user experience.
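
As a minimal sketch of per-task output limits, the helper below attaches a token cap to each request. The task names, budget values, and the `max_tokens` and `messages` field names are assumptions modeled on common chat-completion APIs; check your provider's reference for the exact parameter names. A hard cap can cut a response mid-sentence, so the sketch also includes a small helper that trims a trailing partial sentence.

```python
from typing import Any, Dict

# Hypothetical per-task output budgets, in tokens.
OUTPUT_BUDGETS = {"classification": 10, "summary": 150, "explanation": 400}
DEFAULT_BUDGET = 256


def build_request(prompt: str, task: str) -> Dict[str, Any]:
    """Return request parameters with an output cap chosen by task type."""
    budget = OUTPUT_BUDGETS.get(task, DEFAULT_BUDGET)
    return {
        "messages": [{"role": "user", "content": f"{prompt}\nBe concise."}],
        "max_tokens": budget,  # assumed parameter name; varies by provider
    }


def trim_to_last_sentence(text: str) -> str:
    """Drop a trailing partial sentence left behind by a hard token cap."""
    cut = max(text.rfind("."), text.rfind("!"), text.rfind("?"))
    return text[: cut + 1] if cut != -1 else text


if __name__ == "__main__":
    request = build_request("Is this review positive or negative?", "classification")
    print(request["max_tokens"])
    print(trim_to_last_sentence("The order shipped on Monday. It should arri"))
```

Setting the cap slightly above the length you actually need leaves room for complete answers while still bounding the worst-case cost of a request.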

3. Leverage Model Selection and Customization

Not all AI models come with the same cost-quality profile. Choosing the right model and customizing it can save tokens:

- Select Smaller Models for Simpler Tasks: Reserve large models for complex scenarios; smaller models handle straightforward queries at a lower price per token.

- Fine-Tune Models: Fine-tuning on domain-specific data can improve output relevance, reducing the need for verbose prompts or clarifying outputs.

- Use Embeddings and Vector Search: Instead of relying on full-text generation, use embeddings to interpret and match content, reducing token-heavy interactions.

This strategic model approach aligns resource use with task complexity, preventing excessive token consumption.
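
One way to match model size to task complexity is a simple router placed in front of the API call. The sketch below uses a deliberately naive heuristic (prompt length plus a few keyword hints), and the model names `small-model` and `large-model` are placeholders rather than real model identifiers. A production router would more likely rely on a classifier or on measured quality per task type.

```python
# Keywords that hint at multi-step or analytical work (illustrative assumption).
COMPLEX_HINTS = ("analyze", "compare", "step by step", "prove", "refactor")


def choose_model(prompt: str, max_simple_chars: int = 400) -> str:
    """Route long or analytical prompts to the large model, the rest to the small one."""
    text = prompt.lower()
    looks_complex = any(hint in text for hint in COMPLEX_HINTS)
    if len(prompt) > max_simple_chars or looks_complex:
        return "large-model"  # placeholder name
    return "small-model"  # placeholder name


if __name__ == "__main__":
    for prompt in (
        "What is the capital of France?",
        "Compare these two contracts clause by clause.",
    ):
        print(f"{choose_model(prompt)}: {prompt}")
```

Logging which model handled each request, along with a quality signal such as user feedback, makes it possible to tune the routing rule over time.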

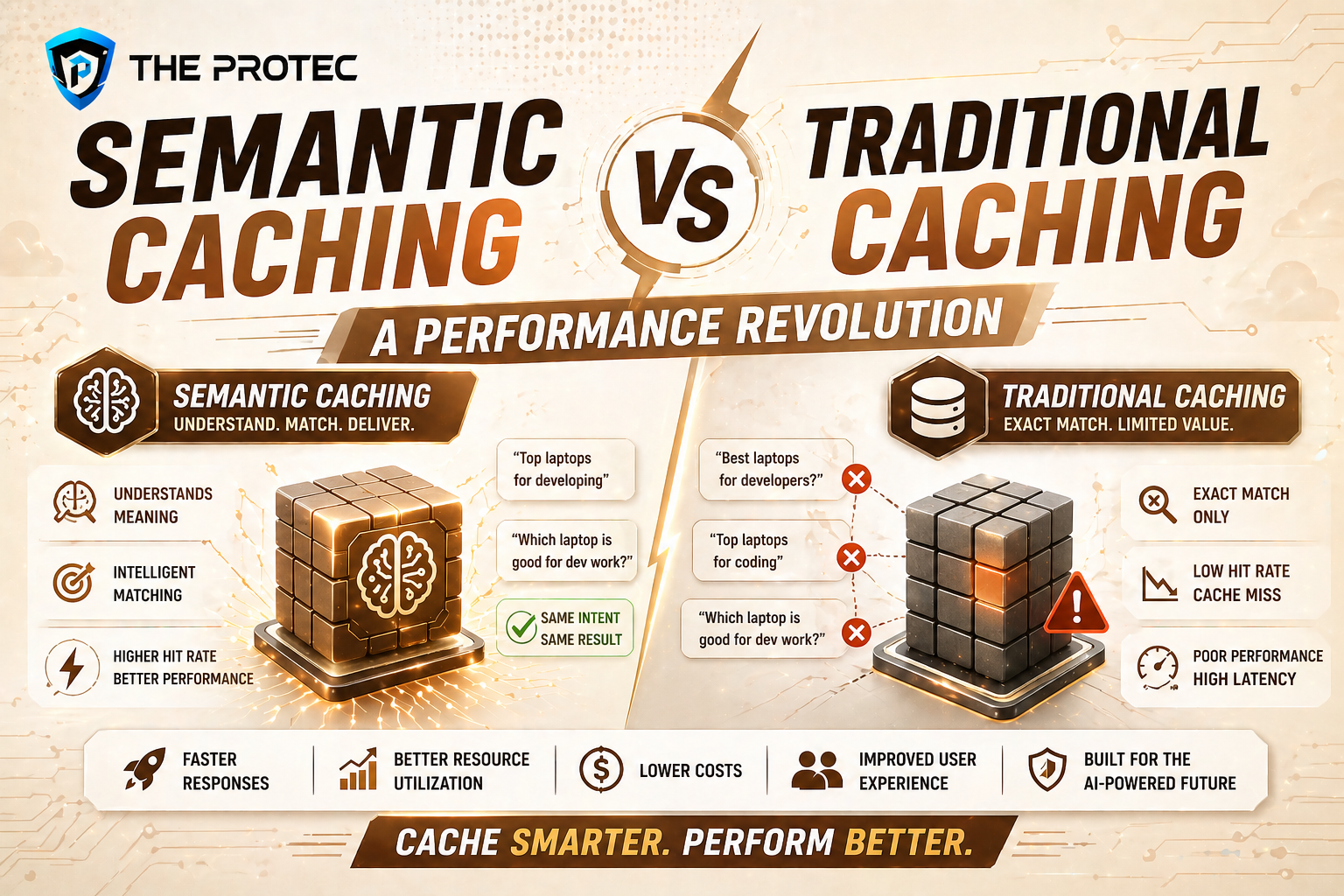

4. Implement Batch Processing and Caching

Operational strategies can further enhance token efficiency:

- Batch Requests: Group multiple inputs into a single API call where possible, so shared instructions are sent once rather than repeated with every input.

- Cache Frequent Queries: Store and reuse responses to common queries instead of regenerating outputs repeatedly.

- Cache Partial Outputs: In multi-turn conversations, store summaries or key snippets of earlier turns and send those instead of the full history, so less context is retransmitted on each call.

Batching and caching increase throughput and cut repeated token usage, which matters most for production workloads with many similar requests.
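
The caching and batching ideas can be sketched with the standard library alone. In the example below, `ResponseCache`, `build_batched_prompt`, and the `generate` callable are hypothetical names; `generate` stands in for whatever function actually calls your model. The cache key is a hash of the normalized prompt, so it only catches repeats that differ in case or whitespace, not paraphrases.

```python
import hashlib
from typing import Callable, Dict, List


class ResponseCache:
    """In-memory cache keyed by a hash of the normalized prompt."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate  # stand-in for the real model call
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Lowercase and collapse whitespace so trivial variations share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> str:
        """Return a cached response, calling the model only on a miss."""
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._generate(prompt)
        self._store[key] = response
        return response


def build_batched_prompt(instruction: str, items: List[str]) -> str:
    """Send the shared instruction once, followed by a numbered list of inputs."""
    numbered = "\n".join(f"{i}. {item}" for i, item in enumerate(items, start=1))
    return f"{instruction}\n\n{numbered}"


if __name__ == "__main__":
    cache = ResponseCache(lambda prompt: f"(model answer to: {prompt})")
    cache.get("What is a token?")
    cache.get("  what is a TOKEN? ")  # served from the cache
    print(f"hits={cache.hits} misses={cache.misses}")
    print(build_batched_prompt("Classify each review:", ["Great!", "Too slow."]))
```

A production cache would also need an expiry policy and a size limit, which are omitted here for brevity.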

5. Utilize Advanced Compression Techniques

Emerging tools and frameworks can compress prompts and outputs at various stages:

- Token Compression Libraries: Tools that drop or rewrite low-information text can reduce the token count of a prompt before it is sent to the API.

- Summarization Pipelines: Automatically distill input data into essential points to send as shorter prompts.

- Hybrid Approaches: Combine vector-based retrieval with concise prompts for cost-effective knowledge search.

These technical implementations often require some development effort but pay off by bringing substantial LLM cost savings.
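
The hybrid approach can be illustrated with a toy retrieval step: rank stored snippets by cosine similarity to the query and include only the top few in the prompt. The three-dimensional vectors below are made-up stand-ins for real embeddings, which would come from an embedding model and usually be stored in a vector database. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_snippets(
    query_vec: Sequence[float],
    indexed: List[Tuple[str, Sequence[float]]],
    k: int = 2,
) -> List[str]:
    """Return the k snippets whose vectors are most similar to the query."""
    ranked = sorted(
        indexed,
        key=lambda pair: cosine_similarity(query_vec, pair[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, snippets: List[str]) -> str:
    """Build a short prompt containing only the retrieved context."""
    context = "\n".join(f"- {s}" for s in snippets)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    # Toy vectors standing in for real embeddings (assumption for illustration).
    docs = [
        ("Refunds are issued within 14 days.", [1.0, 0.0, 0.0]),
        ("Shipping takes 3 to 5 business days.", [0.0, 1.0, 0.0]),
        ("Warranty claims require a receipt.", [0.9, 0.1, 0.0]),
    ]
    snippets = top_k_snippets([1.0, 0.0, 0.0], docs, k=2)
    print(build_prompt("How long do refunds take?", snippets))
```

Sending two relevant snippets instead of the whole knowledge base keeps the prompt short while still grounding the answer.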

6. Monitor, Analyze, and Automate Optimization

Continuous monitoring is indispensable for sustained cost optimization:

- Use Analytics Tools: Monitor token consumption patterns, cost spikes, and response characteristics.

- Implement Auto-Scaling Based on Usage: Dynamically adjust the complexity or frequency of API calls during peak times.

- Set Alerts for Anomalies: Detect unusual token usage early to prevent runaway costs.

Active management through data-driven insights allows fast adaptation and ongoing improvements to your token strategy.
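
A basic version of usage monitoring with anomaly alerts might look like the sketch below. It flags a request whose token count exceeds the mean of earlier requests by more than a chosen number of standard deviations. The `TokenUsageMonitor` class, the three-sigma threshold, and the minimum sample count are illustrative assumptions; real deployments often track usage per user or per endpoint and use more robust statistics.

```python
import statistics
from typing import List


class TokenUsageMonitor:
    """Track per-request token counts and flag unusually large requests."""

    def __init__(self, threshold_sigmas: float = 3.0, min_samples: int = 5) -> None:
        self.threshold_sigmas = threshold_sigmas
        self.min_samples = min_samples
        self.history: List[int] = []

    def record(self, total_tokens: int) -> bool:
        """Store the count; return True if it is anomalous versus prior history."""
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mean = statistics.mean(self.history)
            stdev = statistics.pstdev(self.history)
            limit = mean + self.threshold_sigmas * stdev
            is_anomaly = total_tokens > limit
        self.history.append(total_tokens)
        return is_anomaly


if __name__ == "__main__":
    monitor = TokenUsageMonitor()
    for tokens in [100, 110, 90, 105, 95, 102, 5000]:
        if monitor.record(tokens):
            print(f"ALERT: unusual request size of {tokens} tokens")
```

In practice the alert would feed a notification channel or a rate limiter rather than a print statement.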

FAQ

What is token usage in AI models and why does it matter?

Tokens are the basic units that AI language models process, representing chunks of words or characters. Since many AI services charge based on token volume, understanding and managing token usage is crucial to controlling costs.

Can reducing token usage negatively impact AI output quality?

While trimming tokens might risk losing context, careful prompt engineering and model tuning can maintain or even improve output quality despite lower token consumption.

Are there tools available to help monitor and optimize token costs?

Yes, many platforms offer analytics and monitoring dashboards, and there are third-party tools designed to track API usage and suggest optimizations that reduce token expenditure.

Conclusion

Optimizing AI model costs by reducing token usage is a multifaceted challenge demanding a blend of technical and operational strategies. By refining prompt design, managing output length, selecting the right models, and implementing batching and caching, organizations can significantly cut down API expenses without compromising AI response quality. Staying proactive with monitoring and leveraging emerging compression technologies further enhances cost-efficiency.

To deepen your understanding of cost-effective AI implementations, explore resources such as OpenAI’s guidelines on prompt best practices and Pinecone’s overview of vector databases.