What Are Mixture-of-Experts (MoE) Models? The Architecture Powering Modern AI

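Mixture-of-Experts (MoE) models are an AI architecture that routes each input to a small subset of specialized sub-networks ("experts"), so model capacity can grow without a matching increase in compute per token. Discover how they work in models like Mixtral.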
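
To make the core idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is illustrative only and not taken from any particular model: the names `top_k_route`, `moe_layer`, and `make_expert`, the toy "experts" that simply scale their input, and the choice of 8 experts with `k=2` are all assumptions made for this example. Real MoE layers use learned feed-forward networks as experts and typically add load-balancing terms that are omitted here.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected scores only, so they sum to 1.
    """
    top = sorted(range(len(gate_scores)),
                 key=lambda i: gate_scores[i], reverse=True)[:k]
    weights = softmax([gate_scores[i] for i in top])
    return list(zip(top, weights))


def moe_layer(x, gate_weights, experts, k=2):
    """Run a toy MoE layer: score experts, run only the top k, mix outputs."""
    # Gate: one linear score per expert (dot product of a gate row with x).
    gate_scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routes = top_k_route(gate_scores, k)
    out = [0.0] * len(x)
    for idx, weight in routes:
        y = experts[idx](x)  # only the selected experts are evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, routes


def make_expert(scale):
    """Hypothetical stand-in for an expert network: scales its input."""
    return lambda x: [scale * xi for xi in x]


if __name__ == "__main__":
    random.seed(0)
    dim, num_experts = 4, 8
    experts = [make_expert(s) for s in range(1, num_experts + 1)]
    gate_weights = [[random.gauss(0, 1) for _ in range(dim)]
                    for _ in range(num_experts)]
    x = [0.5, -1.0, 2.0, 0.1]
    output, routes = moe_layer(x, gate_weights, experts, k=2)
    print("selected experts and weights:", routes)
    print("mixed output:", output)
```

In this sketch only 2 of the 8 experts run for a given input, which is where the efficiency comes from: the total parameter count scales with the number of experts, while the per-input compute scales with `k`.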

Discover how AWS, Azure, GCP, and emerging providers stack up in the battle for affordable cloud GPU pricing and cheap AI compute resources.

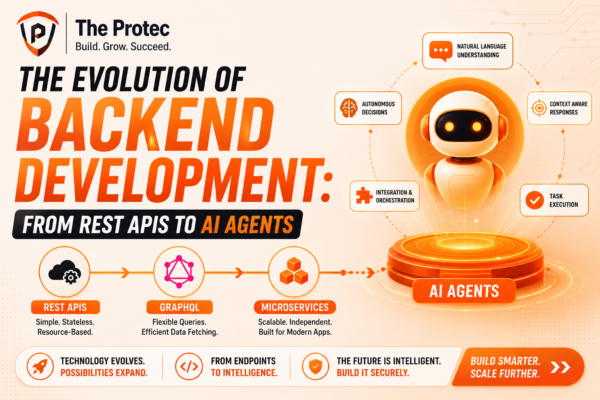

Explore how backend development is transforming through AI agents, shifting from traditional REST APIs to intelligent, autonomous backend systems shaping the future of APIs.

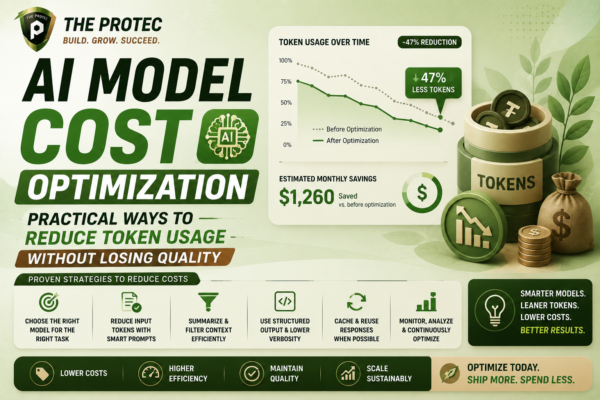

Discover effective strategies to optimize AI model costs by reducing token usage while maintaining output quality, maximizing your LLM investment.

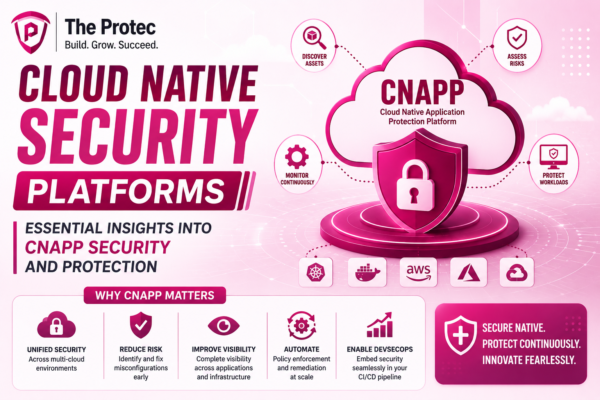

Discover the vital role of Cloud Native Security Platforms (CNAPP) in modern cloud security, exploring key tools and strategies to safeguard cloud environments effectively.

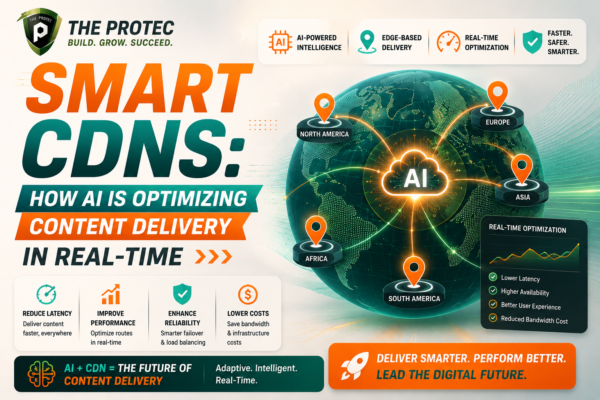

Discover how smart CDNs leverage AI-driven routing and caching to revolutionize content delivery optimization in real-time, ensuring faster, more reliable digital experiences.

Explore how AI UI design and AI UX tools are transforming design automation and examine whether these technologies can truly replace human designers.

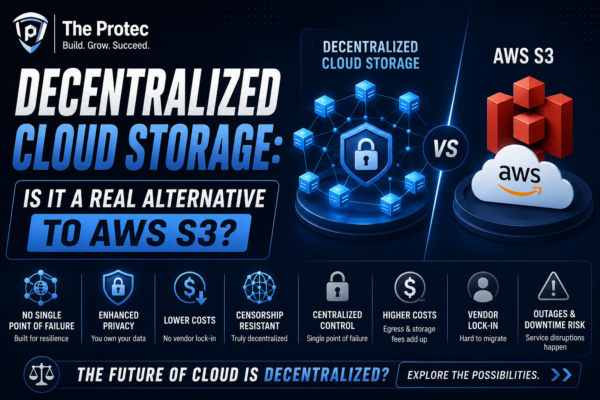

Explore how decentralized cloud storage compares to traditional AWS S3 solutions and whether Web3 storage can redefine data management.

Explore essential strategies to safeguard sensitive data in large language model applications and mitigate risks of data leakage in AI-driven environments.

Explore how AI coding IDEs are transforming software development and whether traditional code editors like VS Code can keep pace with this AI-driven evolution.

Discover how AI browser agents are transforming web automation by surpassing traditional tools like Selenium and Puppeteer with smarter, adaptive approaches and real use cases.